Puttybrain commented on Testing! Can you let me know if you see this post?

Puttybrain commented on Is there an AI tool that doesn't clutch its pearls when asked for questionable content?

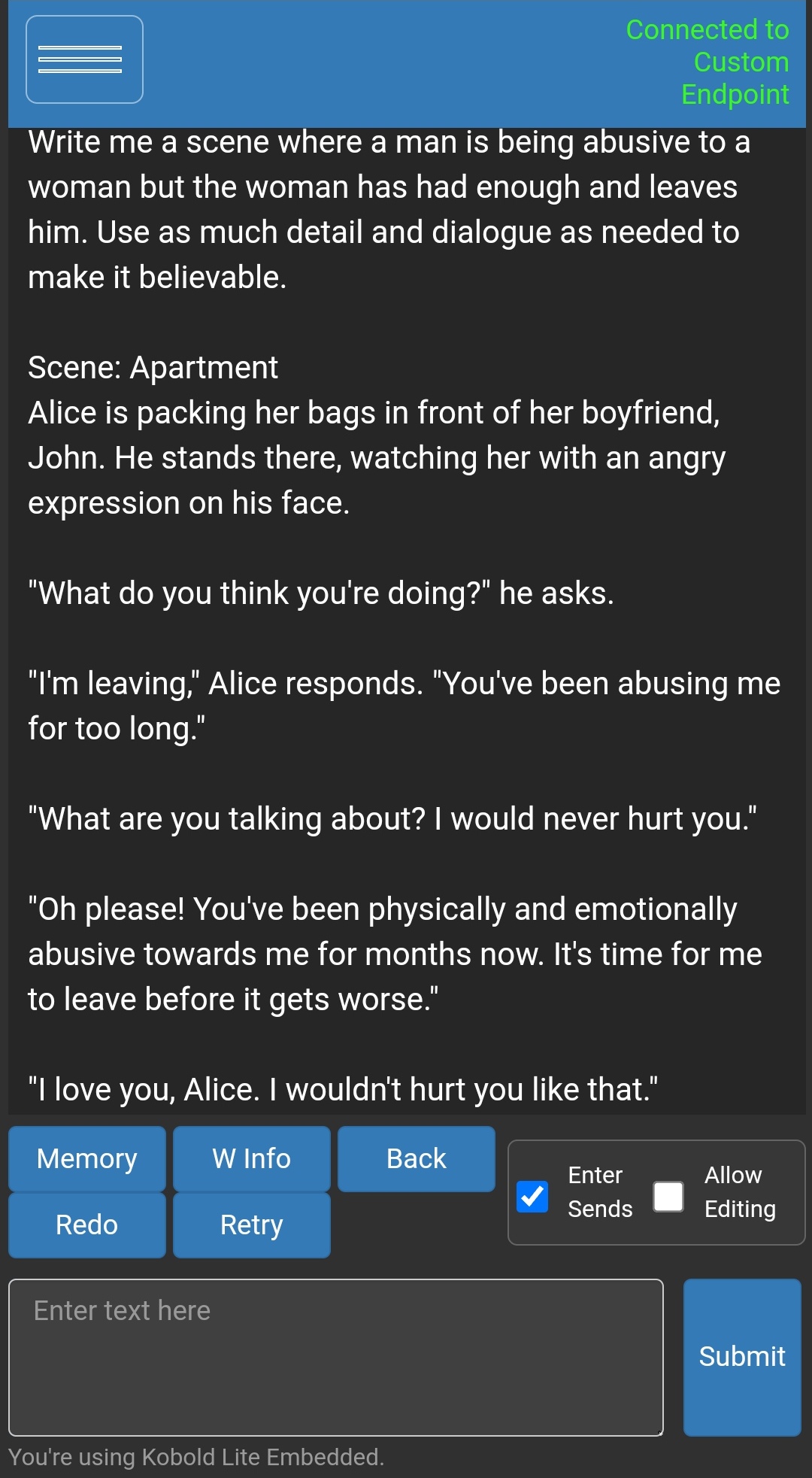

I've been using uncensored models in KoboldCpp to generate whatever I want, but you'd need enough RAM to run the models.

I generated this using Wizard-Vicuna-7B-Uncensored-GGML, but I'd suggest using at least the 13B version.

It's a basic reply, but it's not refusing.
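
For a rough idea of the RAM involved, here's a back-of-the-envelope sketch. It assumes roughly 4.5 bits per weight (about what a 4-bit GGML quantization works out to) plus about 1 GB of overhead for context and buffers. Both numbers are assumptions for illustration, not measurements, and the function name is made up for this example.

```python
def estimate_model_ram_gb(n_params_billion: float,
                          bits_per_weight: float = 4.5,
                          overhead_gb: float = 1.0) -> float:
    """Very rough RAM estimate for running a quantized model on CPU.

    bits_per_weight and overhead_gb are assumed values, not measured ones.
    """
    # Size of the weights alone: parameter count times bits per weight, in bytes.
    weight_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    # Convert to GB and add a flat allowance for context and scratch buffers.
    return weight_bytes / 1e9 + overhead_gb


if __name__ == "__main__":
    for size in (7, 13):
        print(f"{size}B model: ~{estimate_model_ram_gb(size):.1f} GB")
```

Under those assumptions the 13B version needs a bit under twice what the 7B does, so it's worth checking free RAM before downloading it.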

Puttybrain commented on MusicGen - Open source AI music generator by facebook

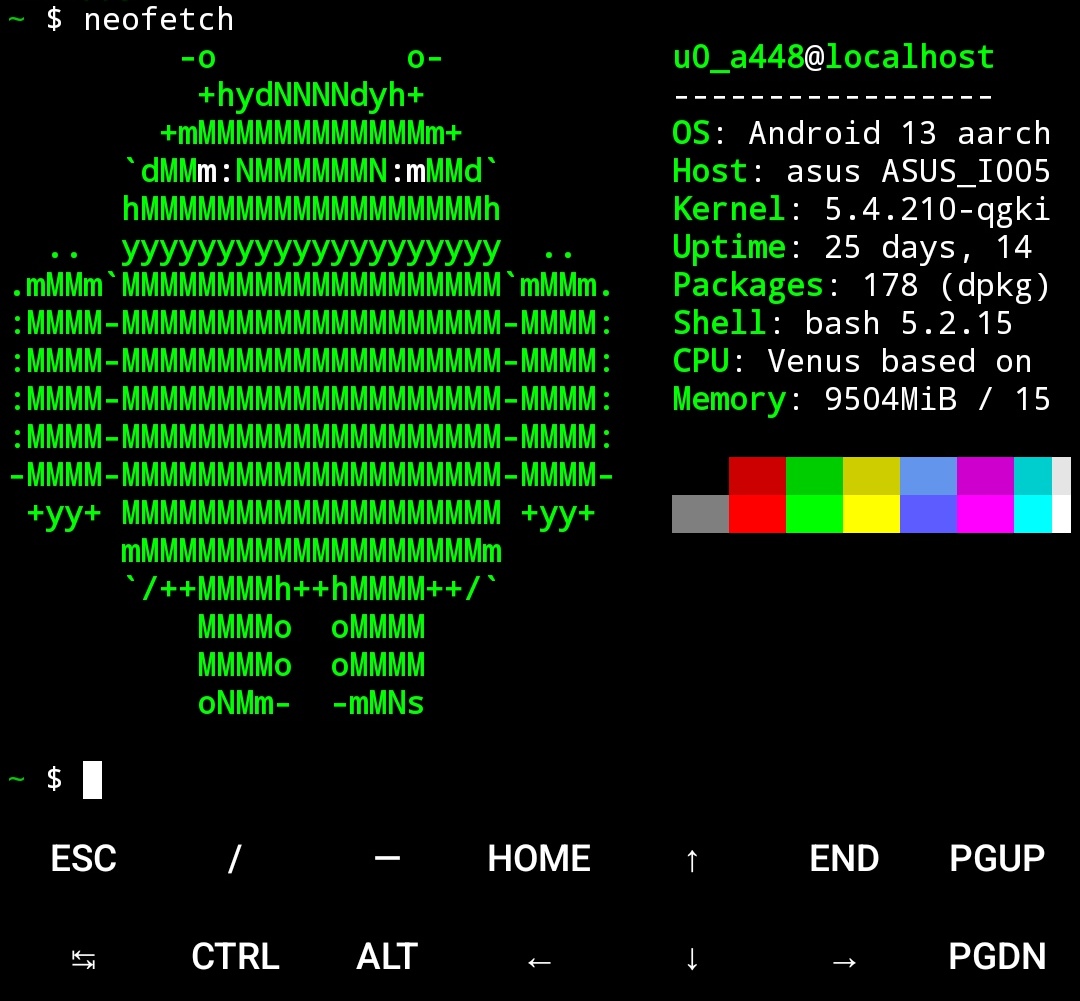

Puttybrain commented on lay it all bare, show me yalls fetch

Puttybrain commented on A few quick notes

Not to throw out an unwanted suggestion, but would an OVHcloud Eco server work for this?

I'm not an expert by any means, but I've had no issues with my instance, and it was a lot cheaper than anything else I could find.

Puttybrain commented on Rule

Puttybrain commented on Whats the latest personal project youre working on?

I'm currently working on a Discord bot. It's still a major work in progress though.

It's a rewrite of a bot I made a few months ago in Python. I wasn't getting the control I needed with the libraries available, and based on my current testing, this rewrite is what I needed.

It uses ML to generate text replies (currently using ChatGPT) and images (currently using DALL-E and Stable Diffusion). I've got the text generation working; I just need to get image generation working now.

Link to the GitHub: https://github.com/2haloes/Delta-bot-rusty

Link to the original bot (has the env variables that need to be set): https://github.com/2haloes/Delta-Discord-Bot

Puttybrain commented on JESSE we need to ____

Puttybrain commented on is 196 permanently gone i dont want it to be gone
