My personal collection of interesting models I've quantized from the past week (yes, just a week)

https://twitter.com/bartowski1182/status/1763286093334548677

itsme2417/PolyMind: A multimodal, function calling powered LLM webui.

GitHub - itsme2417/PolyMind: A multimodal, function calling powered LLM webui.

https://github.com/itsme2417/PolyMind

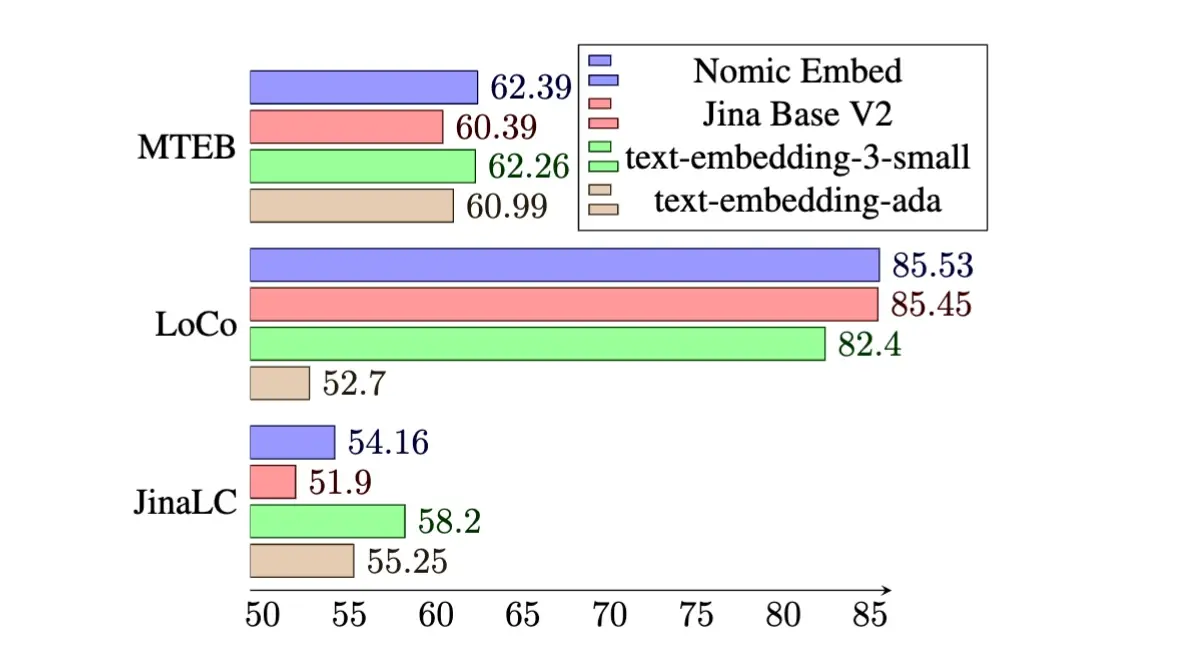

Introducing Nomic Embed: A Truly Open Embedding Model

https://blog.nomic.ai/posts/nomic-embed-text-v1

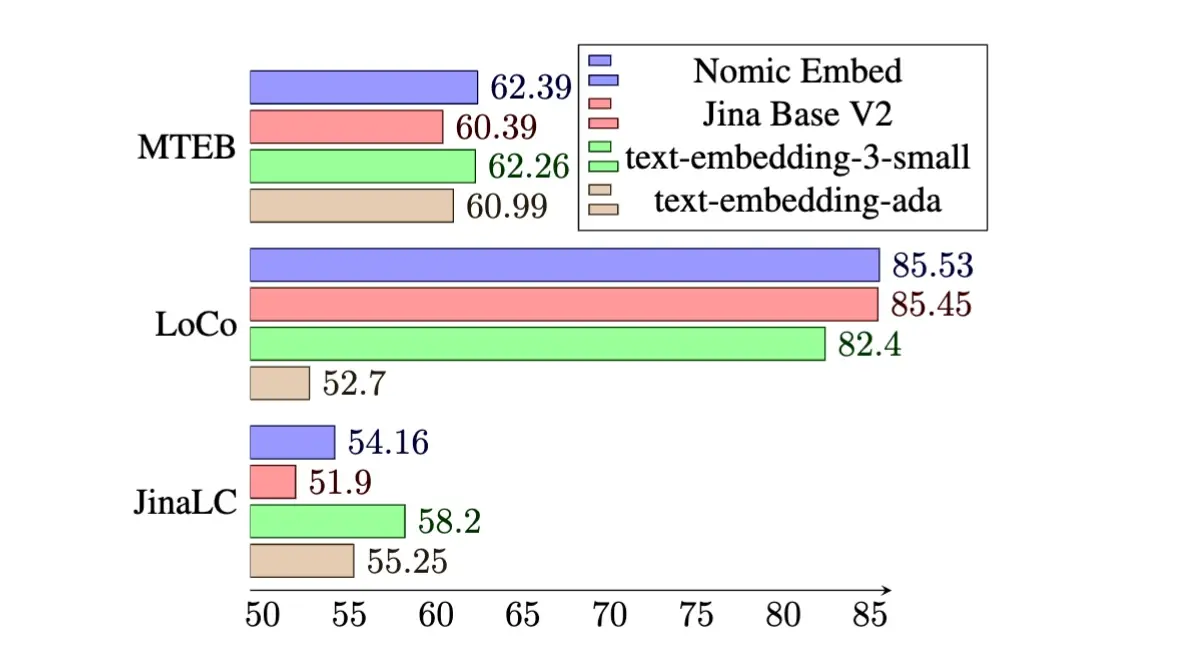

Nomic releases a text embedder with an 8192-token sequence length that outperforms OpenAI's text-embedding-ada-002 and text-embedding-3-small.

InternLM2 models llama-fied

Thanks to Charles for the conversion scripts, I've converted several of the new InternLM2 models into Llama format. I also made ExLlamaV2 quants of them while I was at it.

You can find them here:

https://huggingface.co/bartowski?search_models=internlm2

Note: the chat models do something odd, outputting [UNUSED_TOKEN_145] in a way that seems equivalent to <|im_end|>. I'm not sure why, but they work fine despite outputting that at the end.
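If the trailing token gets in the way, one option is to trim it from the generated text yourself. This is only a minimal sketch, assuming the marker appears literally in the decoded string. The helper name `trim_at_stop_marker` is made up for illustration and is not part of any library or of the conversion scripts.

```python
# Hypothetical helper: cut generated text at the first end-of-turn marker.
# Assumes the markers show up as plain text in the decoded output.
STOP_MARKERS = ("[UNUSED_TOKEN_145]", "<|im_end|>")


def trim_at_stop_marker(text: str, markers=STOP_MARKERS) -> str:
    """Return `text` cut at the earliest occurrence of any marker."""
    cut = len(text)
    for marker in markers:
        idx = text.find(marker)
        if idx != -1:
            cut = min(cut, idx)
    # Drop whitespace left dangling before the marker.
    return text[:cut].rstrip()


if __name__ == "__main__":
    raw = "Paris is the capital of France.[UNUSED_TOKEN_145]"
    print(trim_at_stop_marker(raw))
```

Many frontends also let you register extra stop strings, which would avoid the post-processing step entirely if yours supports it.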

WizardLM/WizardCoder-33B-V1.1 released!

WizardLM/WizardCoder-33B-V1.1 · Hugging Face

https://huggingface.co/WizardLM/WizardCoder-33B-V1.1

Microsoft announces WaveCoder

https://twitter.com/_akhaliq/status/1739486811100004513?t=3dcn2vphG5G-1boaLBQH6w

Mixture of Experts Explained (Huggingface blog)

https://huggingface.co/blog/moe

Mistral releases version 0.2 of their 7B model

La plateforme

https://mistral.ai/news/la-plateforme/

Our first AI endpoints are available in early access.

Mistral drops a new magnet download

https://twitter.com/MistralAI/status/1733150512395038967?t=1qjjZauoJSPikKFkNto2kg&s=19

Orca 2: Teaching Small Language Models How to Reason

https://www.microsoft.com/en-us/research/blog/orca-2-teaching-small-language-models-how-to-reason/

At Microsoft, we’re expanding AI capabilities by training small language models to achieve the kind of enhanced reasoning and comprehension typically found only in much larger models.