Best Guest VM Filesystem for NTFS Host

I am setting up a Linux server (probably NixOS) where my VM disk files will be stored on top of an NTFS partition. (Yes, I know NTFS sucks, but it has to be this way.)

I am asking which guest filesystem will have the best performance for a very mixed workload. If I had access to the extra features of BTRFS or ZFS I would use them but I have no idea how CoW interacts with NTFS; that is why I am asking here.

Also I would like some NTFS performance tuning pointers.

Setting up a Git Repo Which Includes Inconsistently Mounted Directory/File

This is more of a system config question than a programming one, but I think this community is the best one to ask about anything Git-related.

Anyway, I am setting up a new project with hardware that has 2 physical drives. The "main" drive will usually be mounted and have 10-20 config files on it, maybe 50-100 LOC each. The "secondary" drive will be mounted only occasionally, and will have 1 small config file on it, literally 2 or 3 LOC. When mounted, this file will be located in a specific directory close to the other config files.

I would like to manage all of these files using git, ideally with a single repo, as they are all part of the same project. However, as the second drive (and thus the config file on it) will sporadically appear and disappear, Git will be confused and constantly log me adding and deleting the file.

Right now I think the most realistic solution is to make a repo for each drive and make the secondary drive a submodule of the main. But I feel like it is awkward to make a whole repo for such a simple file.

What would you do in this situation, and what is best practice? Is there a way to make this one repo?

USB Drive with Write-Protect Switch Recommendations

I am looking for a fast USB drive which has a physical write-protect enable switch on it. I would also want a BadUSB-resistant USB controller. I want this for 2 reasons:

- So I can diagnose issues on machines where the problem may or may not be malware. This way, I can plug it into several machines without risking spreading malware.
- So I can carry around a TailsOS drive wherever I go, and use it on public computers and friends' computers without risk of infection.

So far, I have only found one company making these things, Kanguru. There are almost no reviews of their products by reputable sources, at least not for their write-protecting drives.

Their BadUSB firmware detection module is NIST certified, which is great (assuming you trust proprietary cryptography modules at all), but there are no certifications for the main storage write protection. Kanguru products are also very overpriced.

And no, I am not using SD cards: their write-protect implementation is software-based and they are too slow for me.

I am specifically looking at the Kanguru FlashTrust. My questions are:

- Has anyone used Kanguru products, and how was your experience?
- Are there other companies that make decent-quality drives with hardware write-protect switches? (Ideally ones that have FOSS firmware and are third-party tested, but I will take anything.)
- Are there any companies that make USB writeblockers which are small enough to fit in a wallet and cost under $50? (Example of one that is too big). That way I can use a standard, cheaper USB drive.

Oh how I wish Nitrokey made these!

Config Structure Conventions?

I am just setting up my NixOS config for the first time, and I know that it will be fairly complex. I know it will only be possible and scalable if I have sane conventions.

I have read a number of example configs, but there do not seem to be consistent conventions between them for where to store custom option declarations, how to handle enabling/disabling modules, etc. They all work, but they do it in different ways.

Are there any official or unofficial conventions/style guides to NixOS config structure, and where can I find them?

For example, should I make a lib directory where I put modules that are easily portable and reusable in other people's configs? When should I break modules up into smaller ones? These are the kinds of questions I hope to see addressed.

Good Practice or Not - Adding Unique Identifier to Custom Options?

I have started using NixOS recently and I am just now creating conventions to use in my config.

One big choice I need to make is whether to include a unique identifier as the most significant attribute in any options that I define for my system.

For example:

Let's say I am setting up my desktop so that I can easily switch between light and dark modes system-wide. Therefore, I create the boolean option:

visuals.useDarkMode

Let's say I also want to easily toggle Tor and other privacy technologies on and off all at once, so I create the boolean option:

usePrivateMode

Although these options do not do related things, they are both custom options that I have made. My first instinct is to somehow segregate them from the built-in NixOS options. Let's say my initials are "RK". I could make them all sub-attributes of the "rk" attribute:

rk.visuals.useDarkMode

rk.usePrivateMode

I feel like this is either a really good idea or an antipattern. I would like your opinions on what you think of it and why.

Good Practice or Not - Add Wrapper for Custom Shell Aliases?

My question is whether it is good practice to include a unique wrapper phrase for custom commands and aliases.

For example, let's say I use the following command frequently:

apt update && apt upgrade -y && flatpak update

I want to save time by shortening this command. I want to alias it to the following command:

update

And let's say I also make up a command that calls a bash script to scrub all of my zfs and btrfs pools:

scrub

Let's say I add 100 other aliases. Maybe I am overthinking it, but I feel there should be some easy way to distinguish these from native Unix commands. I feel there should be some abstraction layer.

My question is whether converting these commands into arguments behind a wrapper command is worth it.

For example, let's say my initials are "RK". The above commands would become:

rk update

rk scrub

Then I could even create the following to list all of my subcommands and their uses:

rk --help

I would have no custom commands that exist outside of rk, so I add a total of one executable to my system.
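To make the idea concrete, here is a minimal sketch of what such a wrapper might look like, written as a POSIX shell function. The helper names (rk_update, rk_scrub, rk_help) and the scrub script path are hypothetical placeholders, not anything that exists yet; only the update command line comes from the example above.

```shell
# Hypothetical "rk" wrapper: each custom command becomes a subcommand.

rk_update() {
  # The long command from the example above.
  apt update && apt upgrade -y && flatpak update
}

rk_scrub() {
  # Assumed location of the scrub script; adjust to wherever it really lives.
  "$HOME/bin/scrub-pools.sh" "$@"
}

rk_help() {
  # One line per subcommand, so "rk --help" doubles as documentation.
  echo "Usage: rk <subcommand> [args...]"
  printf '  %-8s %s\n' update "Update apt and flatpak packages"
  printf '  %-8s %s\n' scrub  "Scrub all zfs and btrfs pools"
}

rk() {
  rk_cmd="${1:-}"
  # Drop the subcommand name so the rest is passed through as arguments.
  [ "$#" -gt 0 ] && shift
  case "$rk_cmd" in
    update)        rk_update "$@" ;;
    scrub)         rk_scrub "$@" ;;
    ""|-h|--help)  rk_help ;;
    *)
      echo "rk: unknown subcommand '$rk_cmd' (try 'rk --help')" >&2
      return 1
      ;;
  esac
}
```

Sourced from a shell startup file this stays a function; to get a single executable instead, the same code could be saved as a script named rk with a final line of `rk "$@"`. Adding a command then means adding one function, one case branch, and one help line.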

I feel like this is the "cleaner" approach, but what do you think? Is this an antipattern? Is it just extra work?

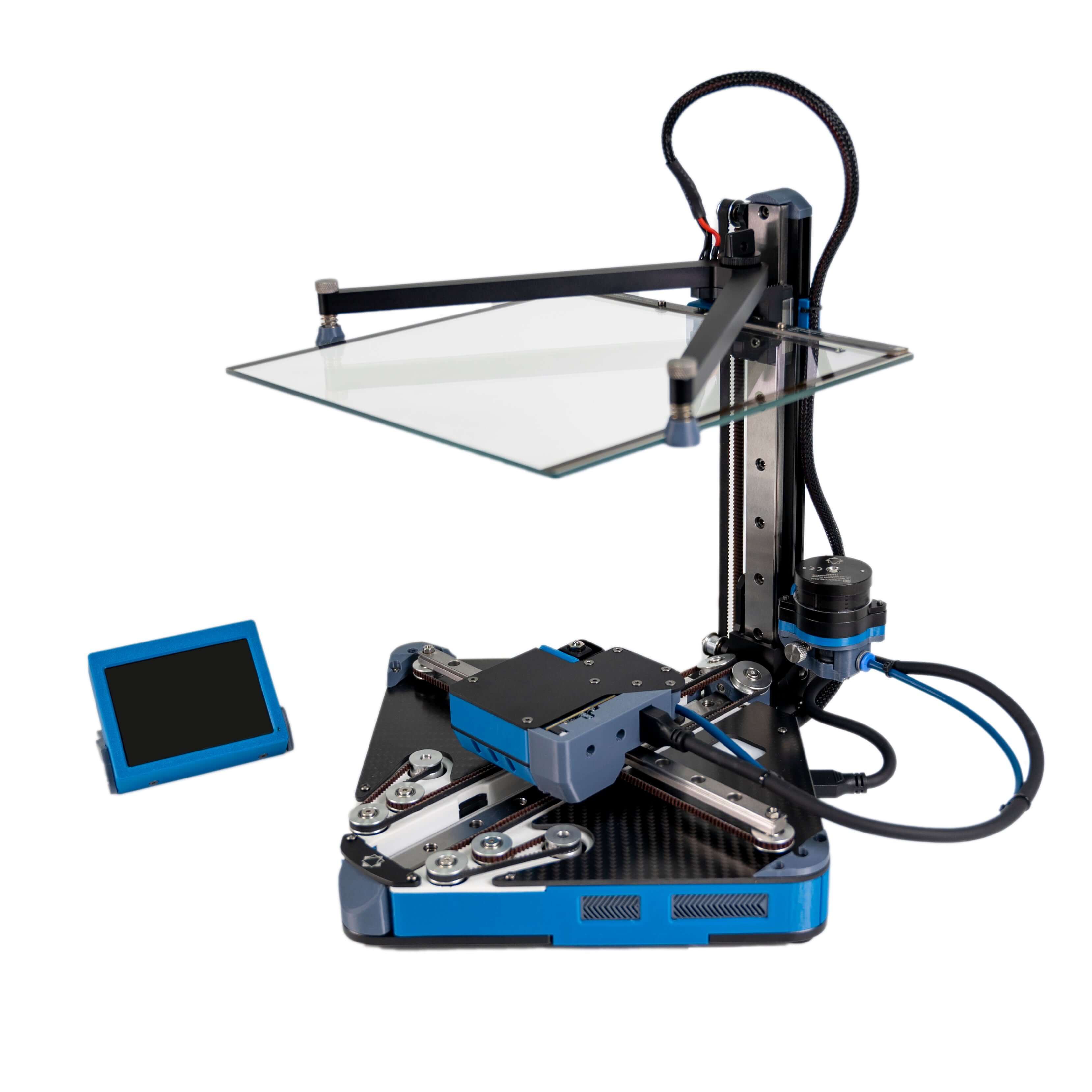

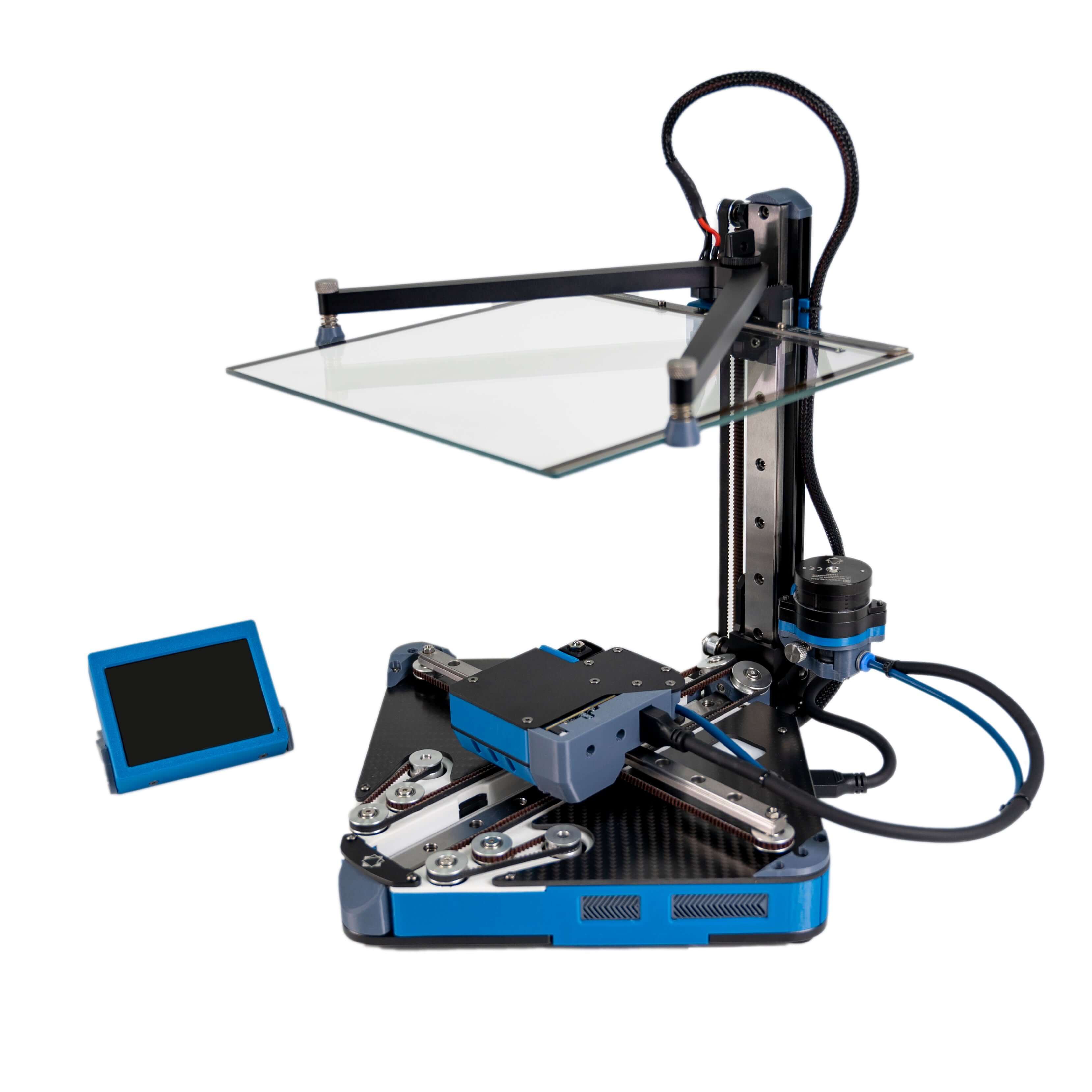

Positron V3.2 as First Printer?

Positron V3.2 3D Printer Kit by LDO

https://www.fabreeko.com/products/positron-v3-2-3d-printer-kit-by-ldo?variant=45406118576383

Positron V3.2 foldable 3D printer designed to fit inside a standard filament box. Kit comes ready to assemble and includes a hard Pelican plastic travel case.

Can a System Handle Brown/Blackouts on only the GPU?

I am planning to build a multipurpose home server. It will be a NAS, virtualization host, and have the typical selfhosted services. I want all of these services to have high uptime and be protected from power surges/blackouts, so I will put my server on a UPS.

I also want to run an LLM server on this machine, so I plan to add one or more GPUs and pass them through to a VM. I do not care about high uptime on the LLM server. However, this of course means that I will need a more powerful UPS, which I do not have the space for.

My plan is to get a second power supply to power only the GPUs. I do not want to put this PSU on the UPS. I will turn on the second PSU via an Add2PSU.

In the event of a blackout, this means that the base system will get full power and the GPUs will get power via the PCIe slot, but they will lose the power from the dedicated power plug.

Obviously this will slow down or kill the LLM server, but will this have an effect on the rest of the system?

Load Balancing Between Circuits

I am not an electrician, but an end user.

I am planning to build a very powerful server for running LLMs. It will have many GPUs and can realistically hit a 1500 watt sustained load. The PSU in my computer can handle 240v but I do not have access to a 240v circuit.

My question is whether it is a good idea to somehow balance the load between 2 or 3 120v circuits. If so, what are some methods to safely do this?

Is RAID1 over USB Reliable?

I have an 11th gen Framework mainboard which I would like to repurpose as a server. Unfortunately, unless I do some super janky stuff, I can only connect one drive to it over M.2, and any additional ones must be connected over USB.

I am thinking of just using some portable hard drives and plugging them in over USB. I plan to RAID1 them and use them as boot drives and data storage, and use the M.2 slot for something unrelated.

In your experience, is USB reliable enough nowadays to run a RAID array for a server like this? If it is, does it depend on the specific drive used?