How do you guys handle reverse proxies in rootless containers?

I've been trying to migrate my services over to rootless Podman containers for a while now and I keep running into weird issues that always make me go back to rootful. This past weekend I almost had it all working until I realized that my reverse proxy (Nginx Proxy Manager) wasn't passing the real source IP of client requests down to my other containers. This meant that all my containers were seeing requests coming solely from the IP address of the reverse proxy container, which breaks things like Nextcloud brute force protection, etc. It's apparently due to this Podman bug: https://github.com/containers/podman/issues/8193

This is the last step before I can finally switch to rootless, so it makes me wonder what all you self-hosters out there are doing with your rootless setups. I can't be the only one running into this issue, right?

If anyone's curious, my setup consists of several docker-compose files, each handling a different service. Each service has its own dedicated Podman network, and only the proxy container connects to all of them to serve outside requests. This way the services are isolated from one another and the only ingress from the outside is via the proxy container. I can also easily run duplicate instances of the same service without having to worry about port collisions, etc. Not being able to see the real client IP really sucks in this situation.

Anyone else unable to log out of Plasma 6?

On one of my machines, I am completely unable to log out. The behavior is slightly different depending on whether I am in Wayland or X11.

Wayland

- Clicking log out and then OK in the log out window brings me back to the desktop.

- Doing this again does the same thing

- Clicking log out for a third time does nothing

X11

- Clicking log out will lead me to a black screen with just my mouse cursor.

In my journalctl logs, I see:

Apr 03 21:52:46 arch-nas systemd[1]: Stopping User Runtime Directory /run/user/972...

Apr 03 21:52:46 arch-nas systemd[1]: run-user-972.mount: Deactivated successfully.

Apr 03 21:52:46 arch-nas systemd[1]: user-runtime-dir@972.service: Deactivated successfully.

Apr 03 21:52:46 arch-nas systemd[1]: Stopped User Runtime Directory /run/user/972.

Apr 03 21:52:46 arch-nas systemd[1]: Removed slice User Slice of UID 972.

Apr 03 21:52:46 arch-nas systemd[1]: user-972.slice: Consumed 1.564s CPU time.

Apr 03 21:52:47 arch-nas systemd[1]: dbus-:1.2-org.kde.kded.smart@0.service: Deactivated successfully.

Apr 03 21:52:47 arch-nas systemd[1]: dbus-:1.2-org.kde.powerdevil.discretegpuhelper@0.service: Deactivated successfully.

Apr 03 21:52:47 arch-nas systemd[1]: dbus-:1.2-org.kde.powerdevil.backlighthelper@0.service: Deactivated successfully.

Apr 03 21:52:48 arch-nas systemd[1]: dbus-:1.2-org.kde.powerdevil.chargethresholdhelper@0.service: Deactivated successfully.

Apr 03 21:52:54 arch-nas systemd[4500]: Created slice Slice /app/dbus-:1.2-org.kde.LogoutPrompt.

Apr 03 21:52:54 arch-nas systemd[4500]: Started dbus-:1.2-org.kde.LogoutPrompt@0.service.

Apr 03 21:52:54 arch-nas ksmserver-logout-greeter[5553]: qt.gui.imageio: libpng warning: iCCP: known incorrect sRGB profile

Apr 03 21:52:54 arch-nas ksmserver-logout-greeter[5553]: kf.windowsystem: static bool KX11Extras::compositingActive() may only be used on X11

Apr 03 21:52:54 arch-nas plasmashell[5079]: qt.qpa.wayland: eglSwapBuffers failed with 0x300d, surface: 0x0

Apr 03 21:52:55 arch-nas systemd[4500]: Created slice Slice /app/dbus-:1.2-org.kde.Shutdown.

Apr 03 21:52:55 arch-nas systemd[4500]: Started dbus-:1.2-org.kde.Shutdown@0.service.

Apr 03 21:52:55 arch-nas systemd[4500]: Stopped target plasma-workspace-wayland.target.

Apr 03 21:52:55 arch-nas systemd[4500]: Stopped target KDE Plasma Workspace.

Apr 03 21:52:55 arch-nas systemd[4500]: Requested transaction contradicts existing jobs: Transaction for graphical-session.target/stop is destructive (drkonqi-coredump-pickup.service has 'start' job queued, but 'stop' is included in transaction).

Apr 03 21:52:55 arch-nas systemd[4500]: graphical-session.target: Failed to enqueue stop job, ignoring: Transaction for graphical-session.target/stop is destructive (drkonqi-coredump-pickup.service has 'start' job queued, but 'stop' is included in transaction).

Apr 03 21:52:55 arch-nas systemd[4500]: Stopped target KDE Plasma Workspace Core.

Apr 03 21:52:55 arch-nas systemd[4500]: Stopped target Startup of XDG autostart applications.

Apr 03 21:52:55 arch-nas systemd[4500]: Stopped target Session services which should run early before the graphical session is brought up.

Apr 03 21:52:55 arch-nas systemd[4500]: dbus-:1.2-org.kde.LogoutPrompt@0.service: Main process exited, code=exited, status=1/FAILURE

Apr 03 21:52:55 arch-nas systemd[4500]: dbus-:1.2-org.kde.LogoutPrompt@0.service: Failed with result 'exit-code'.

I've filed an upstream bug for this but I was wondering if anyone else here was also experiencing the same issue.

Nextcloud Hub 7 is here!

Nextcloud Hub 7: advanced search and global out-of-office features - Nextcloud

https://nextcloud.com/blog/nextcloud-hub-7-advanced-search-and-global-out-of-office-features/

Nextcloud functions as a single platform, bridging together all apps under one roof in synchronization. Today, we’re introducing our most integrated platform yet - Nextcloud Hub 7.

What are some KVM-over-IP or equivalent solutions you guys would recommend for guaranteed remote access and remote power cycle?

Currently, I have SSH, VNC, and Cockpit set up on my home NAS, but I have run into situations where I lose remote access because I did something stupid to the network connection or some update broke the boot process, causing it to get stuck in the BIOS or bootloader.

I am looking for a separate device that will not only let me access the NAS as if I had another keyboard, mouse, and monitor attached, but also let me power cycle it in extreme situations (hard freeze, etc.). Some googling has turned up the term KVM-over-IP, but I was wondering if any of you guys have any trustworthy recommendations.

When the DM invents an item on the spot and instantly regrets it

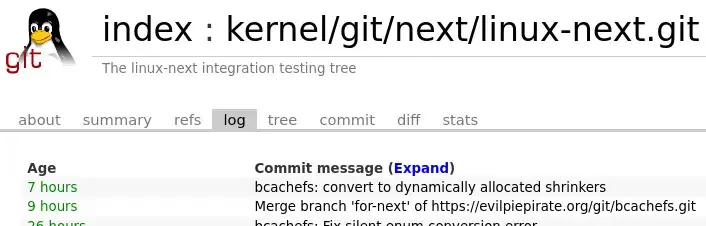

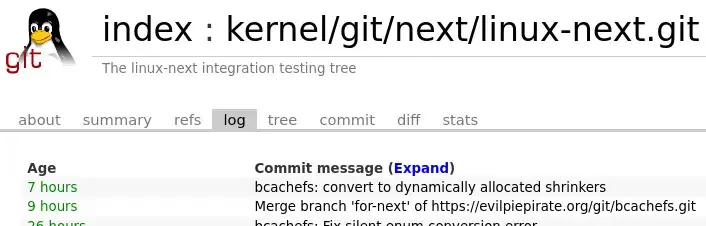

Bcachefs Merged Into Linux-Next

https://www.phoronix.com/news/Bcachefs-In-Linux-Next

Anyone else seeing reduced performance in BG3 Patch 2 with the Vulkan renderer?

Patch 2 seems to have drastically slowed down the Vulkan renderer. Before, I was able to get 80-110 FPS in the Druid Grove, but now I am only getting 50 FPS. DX11 seems fine, but I prefer using Vulkan since I am on Linux.

Arch Linux, Kernel 6.4.12

Ryzen 3900x

Nvidia 3090 w/ 535.104.05 drivers

Latest Proton Experimental

[SOLVED] If I am using the SWAG proxy in front of a Nextcloud instance, is it safe to ignore some of the warnings in the admin page?

I am using one of the official Nextcloud docker-compose files to set up an instance behind a SWAG reverse proxy. SWAG is handling SSL and forwarding requests to Nextcloud on port 80 over a Docker network. Whenever I go to the Overview tab in the Admin settings, I see this security warning:

The "X-Robots-Tag" HTTP header is not set to "noindex, nofollow". This is a potential security or privacy risk, as it is recommended to adjust this setting accordingly.

I have `X-Robots-Tag` set in SWAG. Is it safe to ignore this warning? I am assuming that Nextcloud is complaining about this because it still thinks it's communicating over an unsecured port 80 and isn't aware that it's only talking via SWAG. Maybe I am wrong though. I wanted to double check and see if there was anything else I needed to do to secure my instance.

SOLVED: Turns out Nextcloud is just picky about what's in `X-Robots-Tag`. I had set it to SWAG's recommended setting of `noindex, nofollow, nosnippet, noarchive`, but Nextcloud expects `noindex, nofollow`.
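In case it helps anyone debugging the same warning, here is a rough Python sketch for checking what the proxy actually sends. It assumes the admin check wants the header value to be exactly `noindex, nofollow`, which is only what I observed above and not something taken from Nextcloud's code. The function names and the example URL in the usage note are made up for illustration.

```python
from typing import List
from urllib.request import Request, urlopen

# Value the admin page accepted in my case (assumption: exact match is required).
EXPECTED = "noindex, nofollow"


def matches_expected(value: str) -> bool:
    """True only if the header value is exactly the expected string."""
    return value.strip() == EXPECTED


def fetch_robots_tags(url: str) -> List[str]:
    """Return every X-Robots-Tag header sent for `url` (empty list if none)."""
    with urlopen(Request(url, method="HEAD"), timeout=10) as response:
        return response.headers.get_all("X-Robots-Tag") or []


print(matches_expected("noindex, nofollow"))  # True
print(matches_expected("noindex, nofollow, nosnippet, noarchive"))  # False
```

To check a live instance you would call something like `fetch_robots_tags("https://cloud.example.com/")` (hypothetical hostname) and run each returned value through `matches_expected`. Getting more than one value back would suggest that both the proxy and Nextcloud are adding the header.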

Migrating from docker to podman, encountering some issues

cross-posted from: https://lemmy.world/post/3989163

I've been messing around with podman in Arch and porting my self-hosted services over to it. However, it's been finicky and I am wondering if anybody here could help me out with a few things.

- Some of my containers aren't getting properly started up by `podman-restart.service` on system reboot. I realized they were the ones that depended on my slow external BTRFS drive. Currently it's mounted with `x-systemd.automount,x-systemd.device-timeout=5` so that it doesn't hang the boot if I disconnect it, but it seems like Podman doesn't like this. If I remove the systemd options, the containers start automatically, but I risk boot hangs if the drive ever gets disconnected from my system. I have already tried `x-systemd.before=podman-restart.service` and `x-systemd.required-by=podman-restart.service`, and even tried increasing the device-timeout, to no avail.

  When it attempts to start the container, I see this in journalctl:

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Got automount request for /external, triggered by 3130 (3)

Aug 27 21:15:46 arch-nas systemd[1]: external.automount: Automount point already active?

Aug 27 21:15:46 arch-nas systemd[1]: libpod-742b4595dbb1ce604440d8c867e72864d5d4ce1f2517ed111fa849e59a608869.scope: Deactivated successfully.

Aug 27 21:15:46 arch-nas conmon[3124]: conmon 742b4595dbb1ce604440 : runtime stderr: error stat'ing file `/external/share`: Too many levels of symbolic links

Aug 27 21:15:46 arch-nas conmon[3124]: conmon 742b4595dbb1ce604440 : Failed to create container: exit status 1

- When I shut down my system, it has to wait 90 seconds for `libcrun` and `libpod-conmon-.scope` to time out. Any idea what's causing this? The delay gets pretty annoying, especially on an Arch system, since I am constantly restarting due to updates.

All the containers are started using `docker-compose` with `podman-docker`, if that's relevant.

Any help appreciated!

EDIT: So it seems like podman really doesn't like systemd automount. Switching to `nofail,x-systemd.before=podman-restart.service` seems like a decent workaround if anyone's interested.
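To spell out the option change from the EDIT, here is a small Python sketch that rewrites the mount options field of an fstab entry: it drops the automount and device-timeout options and appends the two that worked for me. The helper name is made up, and the `defaults` in the example is only a placeholder for whatever other options the entry already has.

```python
# Options that seemed to conflict with podman-restart.service in my setup.
DROP_PREFIXES = ("x-systemd.automount", "x-systemd.device-timeout=")
# Options from the EDIT that worked as a replacement.
ADD_OPTIONS = ("nofail", "x-systemd.before=podman-restart.service")


def apply_workaround(options: str) -> str:
    """Rewrite the comma-separated mount options (fourth fstab field)."""
    kept = [
        opt.strip()
        for opt in options.split(",")
        if opt.strip() and not opt.strip().startswith(DROP_PREFIXES)
    ]
    for opt in ADD_OPTIONS:
        if opt not in kept:
            kept.append(opt)
    return ",".join(kept)


# "defaults" is a placeholder; only the x-systemd options come from the post.
print(apply_workaround("defaults,x-systemd.automount,x-systemd.device-timeout=5"))
# defaults,nofail,x-systemd.before=podman-restart.service
```

The result goes into the options column of the `/external` line in `/etc/fstab`, with no spaces between the options.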