The Tech Baron Seeking to “Ethnically Cleanse” San Francisco

https://newrepublic.com/article/180487/balaji-srinivasan-network-state-plutocrat

If Balaji Srinivasan is any guide, then the Silicon Valley plutocrats are definitely not okay.

'It’s a common misconception that we recommend all EAs “marry to give,” or marry a high-net-worth individual with the intent of redirecting much of their wealth to effective causes'

100,000 Hours Introduction

https://web.archive.org/web/20150831031818/http://worldoptimization.tumblr.com/post/126848111674/100000-hours-introduction

The average marriage lasts for 100,000 hours. If used well, you can use this time to improve the lives of hundreds of people. A lot of people will tell you simply to “marry whoever you’re passionate...

"As always, pedophilia is not the same as ephebophilia." - Eliezer Yudkowsky, actual quote

Thought experiment: The transhuman pedophile — LessWrong

https://www.lesswrong.com/posts/a8dCAtNKM8eK3WHAE/thought-experiment-the-transhuman-pedophile?commentId=YAwrAtPhEMqmmFGzz

Comment by Eliezer Yudkowsky - I may never actually use this in a story, but in another universe I had thought of having a character mention that... call it the forces of magic with normative dimension... had evaluated one pedophile who had known his desires were harmful to innocents and never acted upon them, while living a life of above-average virtue; and another pedophile who had acted on those desires, at harm to others. So the said forces of normatively dimensioned magic transformed the second pedophile's body into that of a little girl, delivered to the first pedophile along with the equivalent of an explanatory placard. Problem solved. And indeed the 'problem' as I had perceived it was, "What if a virtuous person deserving our aid wishes to retain their current sexual desires and not be frustrated thereby?" (As always, pedophilia is not the same as ephebophilia.) I also remark that the human equivalent of a utility function, not that we actually have one, often revolves around desires whose frustration produces pain. A vanilla rational agent (Bayes probabilities, expected utility max) would not see any need to change its utility function even if one of its components seemed highly probable though not absolutely certain to be eternally frustrated, since it would suffer no pain thereby.

The SSC subreddit ponders the difference between Bayesianism and plain old bias

What's the difference between a Bayesian prior and pre-existing bias?

https://old.reddit.com/r/slatestarcodex/comments/18i7l89/whats_the_difference_between_a_bayesian_prior_and/

"Successful people create companies. More successful people create countries. The most successful people create religions."

Successful people

https://blog.samaltman.com/successful-people

"Successful people create companies. More successful people create countries. The most successful people create religions." I heard this from Qi Lu; I'm not sure what the source is. It got me...

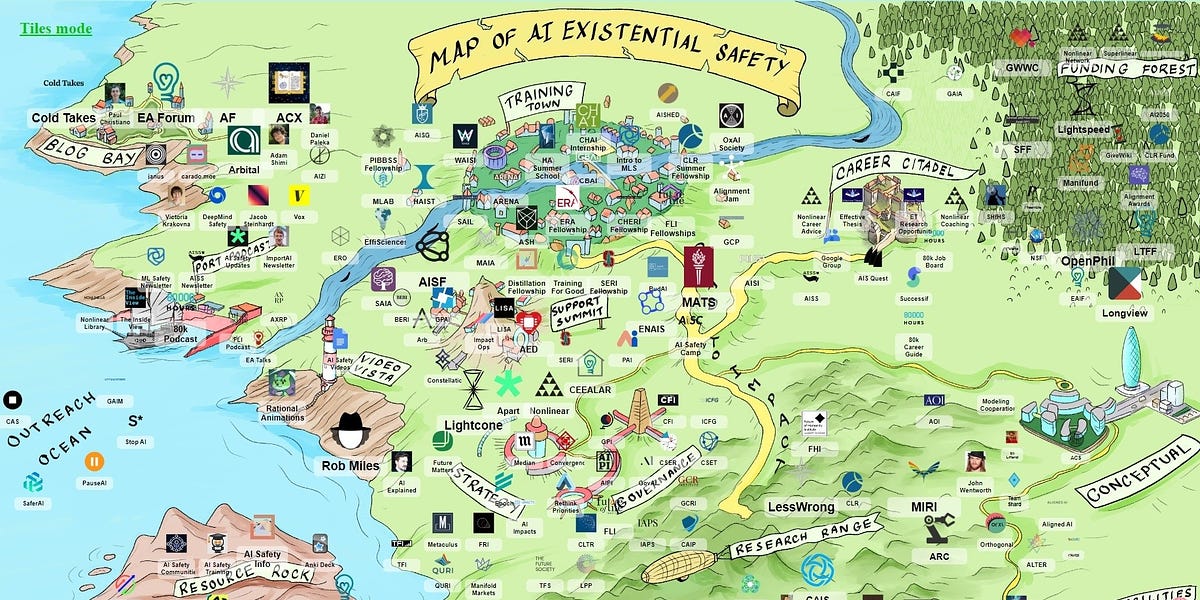

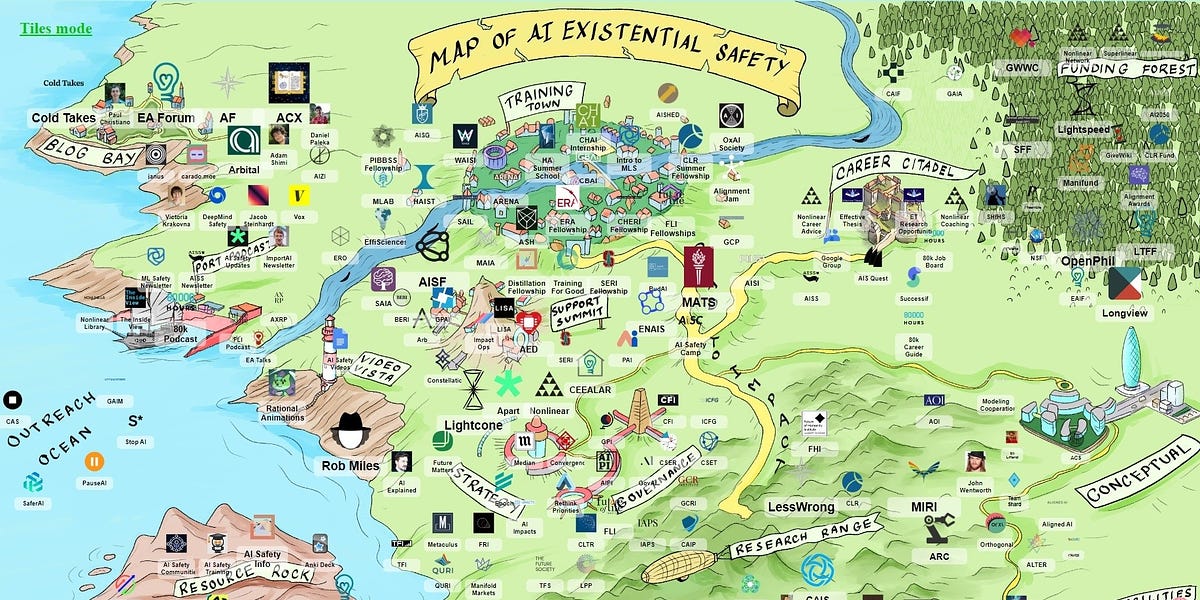

Effective Altruism Funded the “AI Existential Risk” Ecosystem with Half a Billion Dollars

https://www.aipanic.news/p/effective-altruism-funded-the-ai

The “AI Existential Safety” field did not arise organically. Effective Altruism invested $500 million in its growth and expansion.

"it’s like the stages of a rocket ship and racism was the first stage"

To what extent did Eliezer Yudkowsky invent the Effective Altruist movement?

The history of the term 'effective altruism' — EA Forum

https://forum.effectivealtruism.org/posts/9a7xMXoSiQs3EYPA2/the-history-of-the-term-effective-altruism?commentId=ZrmDoHxHavJrLh94a

Comment by Dale - Interesting history! However, I think you are being unfair to MIRI. Eliezer was using the term as far back as 2007, four years before you mention it first being used in Oxford. So it wasn't originated in Oxford. And given that many CEA members have read LessWrong, including Toby Ord, it seems a stretch to even say it was independently re-invented.

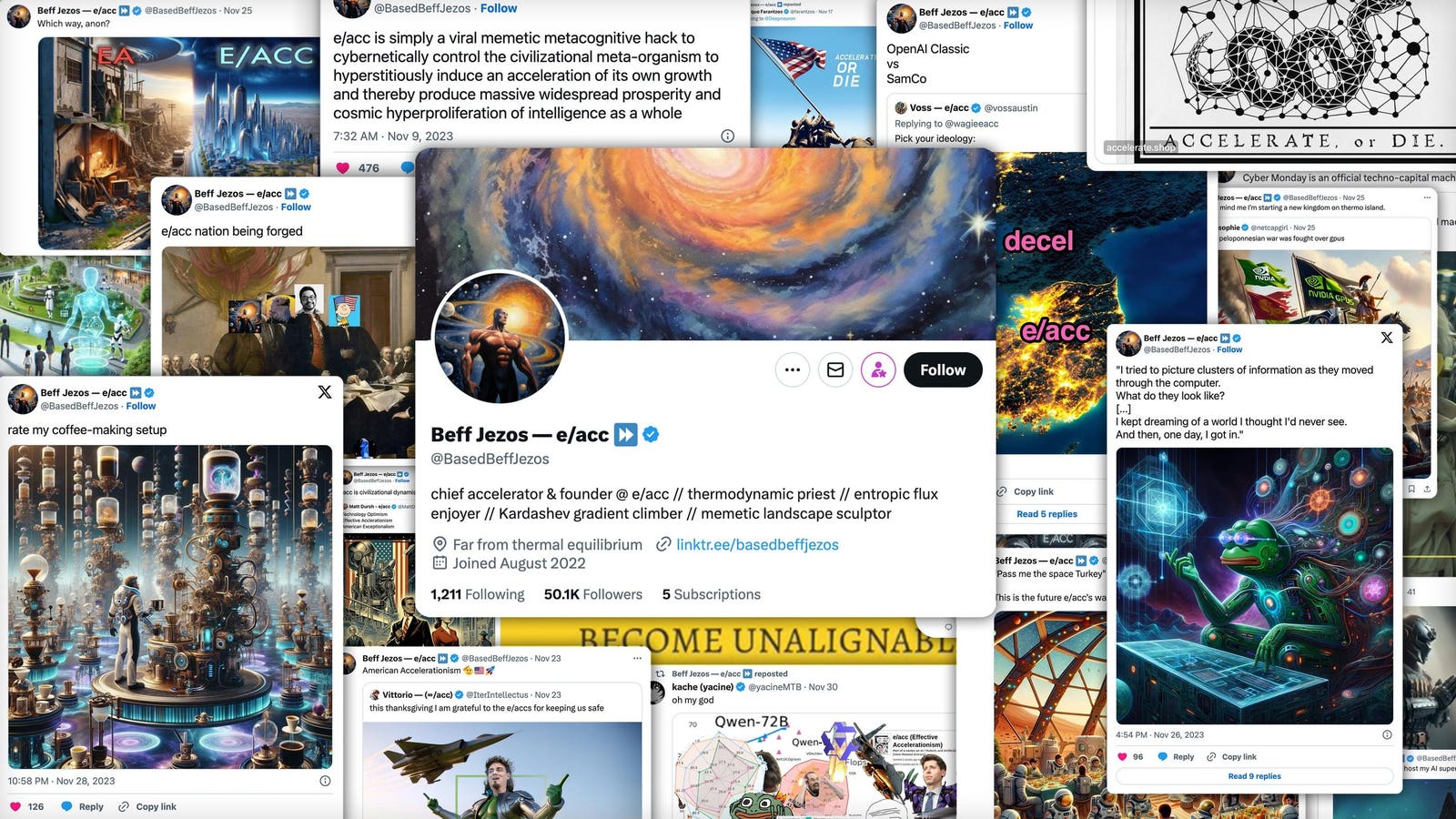

Who Is @BasedBeffJezos, The Leader Of The Tech Elite’s ‘E/Acc’ Movement?

https://www.forbes.com/sites/emilybaker-white/2023/12/01/who-is-basedbeffjezos-the-leader-of-effective-accelerationism-eacc

A former Google engineer and the founder of stealth AI startup Extropic is behind the Twitter account leading the “effective accelerationism” movement sweeping Silicon Valley.

The Inside Story of Microsoft’s Partnership with OpenAI

https://archive.is/s2CdK