[AskLemmy] Finetuning Llama3.1 locally?

Has anyone had any luck fine-tuning Llama 3.1 on a MacBook, or written a recent guide?

I've got some conversations that I saved from interacting with Llama and then tweaked to be what I actually wanted. I was wondering how best to go about fine-tuning the model to see whether this data makes it better at my use case (converting CloudFormation to OpenTofu).

Thanks!

happy non-engineers will slowly transition into sad underpaid engineers

Zuck's new Llama is a beast

y2u.be/aVvkUuskmLY

Llama 3.1 (405B) seems 👍. It and Claude 3.5 Sonnet are my go-to large language models. I use chat.lmsys.org. OpenAI may be scrambling now to release ChatGPT 5?

Marques Brownlee's latest vid is kinda unneeded

AI the Product vs AI the Feature

https://y2u.be/sDIi95CqTiM

The new Siri vs the Rabbit R1 and Humane pin

Rabbit R1: https://youtu.be/ddTV12hErTc?si=tLR_GSXyRFtpgpJb

Humane AI pin: https://youtu.be/TitZV6k8zfA?si=vI4mZMhN...

What If Someone Steals GPT-4 (LLM data)? | Asianometry [CC] (18:23)

What If Someone Steals GPT-4?

https://www.youtube.com/watch?v=HR2PUCAukbQ

Links:

- The Asianometry Newsletter: https://www.asianometry.com

- Patreon: https://www.patreon.com/Asianometry

- Threads: https://www.threads.net/@asianometry

- ...

DALL-E 3 Release

DALL·E 3

https://openai.com/dall-e-3

DALL·E 3 understands significantly more nuance and detail than our previous systems, allowing you to easily translate your ideas into exceptionally accurate images.

Vicuna v1.5 Has Been Released!

Click Here to be Taken to the Megathread!

from !fosai@lemmy.world

Vicuna v1.5 Has Been Released!

Shoutout to GissaMittJobb@lemmy.ml for catching this in an earlier post.

Given Vicuna was a widely appreciated member of the original Llama series, it'll be exciting to see this model evolve and adapt with fresh datasets and new training and fine-tuning approaches.

Feel free to use this megathread to chat about Vicuna and any of your experiences with Vicuna v1.5!

Starting off with Vicuna v1.5

TheBloke is already sharing models!

Vicuna v1.5 GPTQ

7B

13B

Vicuna Model Card

Model Details

Vicuna is a chat assistant fine-tuned from Llama 2 on user-shared conversations collected from ShareGPT.

- Developed by: LMSYS

- Model type: An auto-regressive language model based on the transformer architecture

- License: Llama 2 Community License Agreement

- Finetuned from model: Llama 2

Model Sources

- Repository: https://github.com/lm-sys/FastChat

- Blog: https://lmsys.org/blog/2023-03-30-vicuna/

- Paper: https://arxiv.org/abs/2306.05685

- Demo: https://chat.lmsys.org/

Uses

The primary use of Vicuna is for research on large language models and chatbots. The target userbase includes researchers and hobbyists interested in natural language processing, machine learning, and artificial intelligence.

How to Get Started with the Model

- Command line interface: https://github.com/lm-sys/FastChat#vicuna-weights

- APIs (OpenAI API, Huggingface API): https://github.com/lm-sys/FastChat/tree/main#api
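As a rough sketch of the API route: FastChat can expose an OpenAI-compatible HTTP server, so a chat request is an ordinary JSON POST. The snippet below builds such a request and only sends it if `send` is called. The base URL `http://localhost:8000/v1`, the model name `vicuna-7b-v1.5`, and the helper names `build_chat_request` and `send` are assumptions for illustration; adjust them to however the server was actually launched.

```python
import json
import urllib.request

# Assumed values: an OpenAI-compatible server on localhost:8000 serving a
# model registered as "vicuna-7b-v1.5". Change both to match your setup.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "vicuna-7b-v1.5"


def build_chat_request(user_message, base_url=BASE_URL, model=MODEL_NAME):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, `send(build_chat_request("Hello!"))` would return the model's reply as a string. Keeping request construction separate from sending makes the payload easy to inspect before anything goes over the wire.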

Training Details

Vicuna v1.5 is fine-tuned from Llama 2 with supervised instruction fine-tuning. The training data is approximately 125K conversations collected from ShareGPT.com.

For additional details, please refer to the "Training Details of Vicuna Models" section in the appendix of the linked paper.
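To make "supervised fine-tuning on conversations" a little more concrete, here is a minimal sketch of how one ShareGPT-style conversation could be flattened into a single training string, while recording which character spans are assistant replies (a common convention is to compute the loss only on those spans). The record layout (`conversations`, `from`, `value`), the `USER:` / `ASSISTANT:` prefixes, the `</s>` end-of-turn marker, and the function name are all assumptions for illustration, not the actual FastChat training code.

```python
# Assumed role prefixes; a real chat template may differ.
ROLE_PREFIX = {"human": "USER: ", "gpt": "ASSISTANT: "}


def flatten_conversation(record, end_of_turn="</s>"):
    """Flatten a ShareGPT-style record into one string.

    Returns (text, spans), where spans is a list of (start, end)
    character ranges covering the assistant replies, i.e. the parts
    a trainer would typically compute the loss on.
    """
    text = ""
    spans = []
    for turn in record["conversations"]:
        text += ROLE_PREFIX[turn["from"]]
        if turn["from"] == "gpt":
            start = len(text)
            text += turn["value"] + end_of_turn
            spans.append((start, len(text)))
        else:
            # User text is context only, so no span is recorded for it.
            text += turn["value"] + " "
    return text, spans


# Hypothetical record in the assumed layout.
example = {
    "conversations": [
        {"from": "human", "value": "Hi"},
        {"from": "gpt", "value": "Hello!"},
    ]
}
text, spans = flatten_conversation(example)
```

In a real pipeline the spans would be mapped from characters to token positions after tokenization, and the non-assistant tokens would be masked out of the loss.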

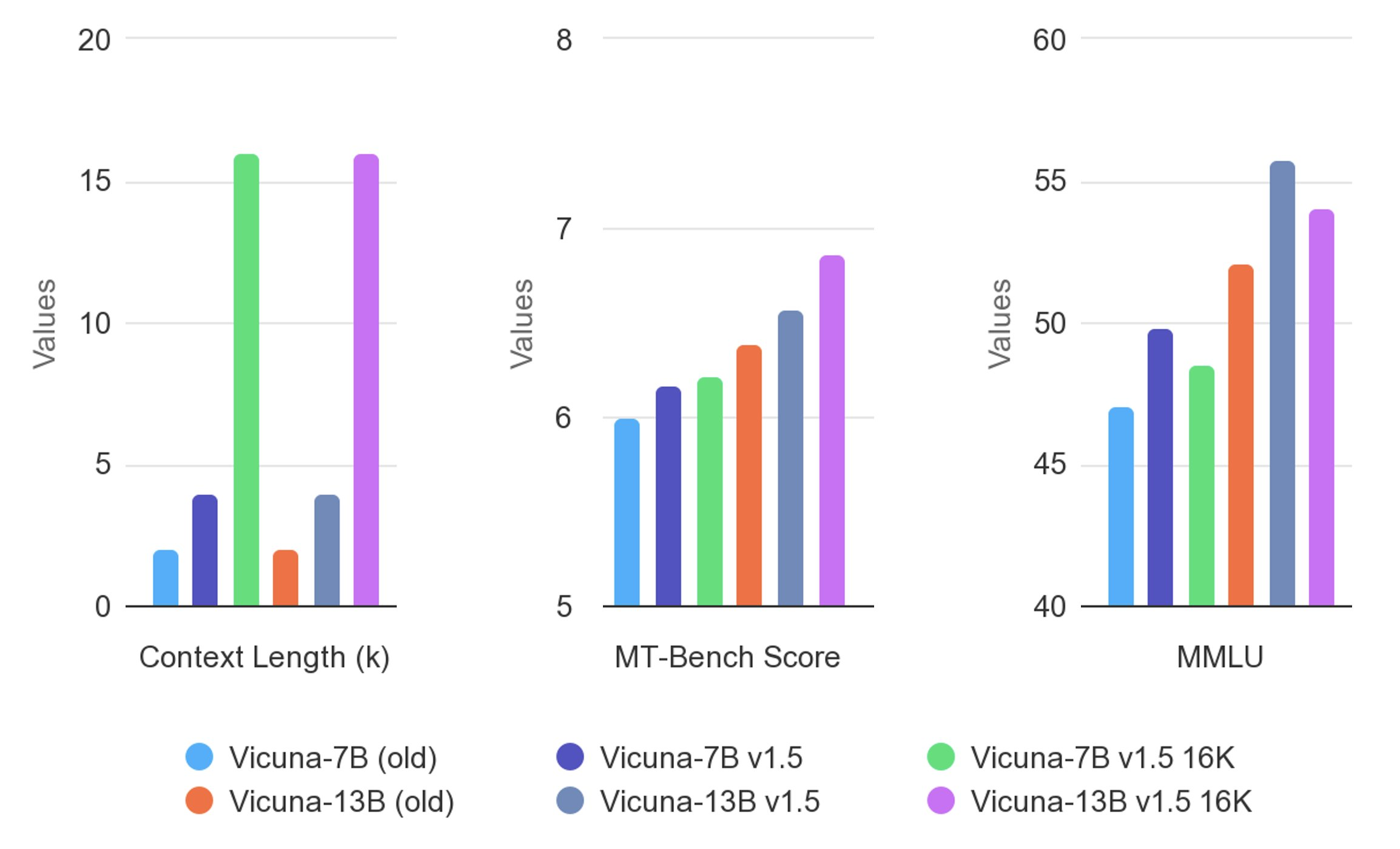

Evaluation Results

Vicuna is evaluated using standard benchmarks, human preferences, and LLM-as-a-judge. For more detailed results, please refer to the paper and leaderboard.

Hello c/llm

I noticed there didn't seem to be a community about large language models, akin to r/localllama. So maybe this will be it.

For the uninitiated, you can easily try a bleeding-edge LLM in your browser here.

If you loved that, here are some places to get started with local installs and execution:

https://github.com/ggerganov/llama.cpp

https://github.com/oobabooga/text-generation-webui

https://github.com/LostRuins/koboldcpp

https://github.com/turboderp/exllama

And for models in general, the renowned TheBloke provides the best and fastest releases: