Free Open-Source Artificial Intelligence

!fosai@lemmy.world

Meta has released and open-sourced Llama 3.1 in three different sizes: 8B, 70B, and 405B

This new iteration of Llama brings state-of-the-art performance to the open-source ecosystem.

If you've had a chance to use Llama 3.1 in any of its variants, let us know how you like it and what you're using it for in the comments below!

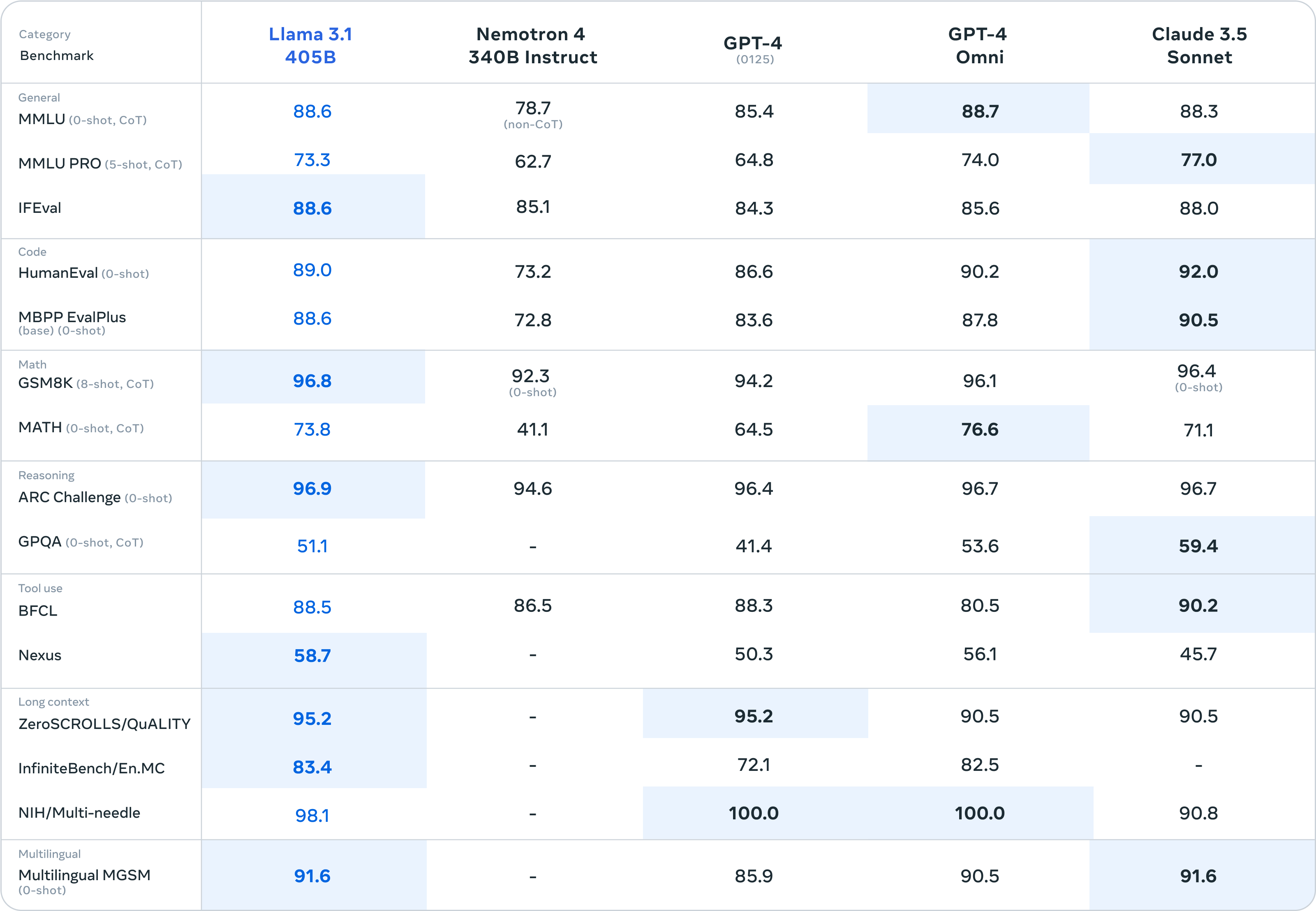

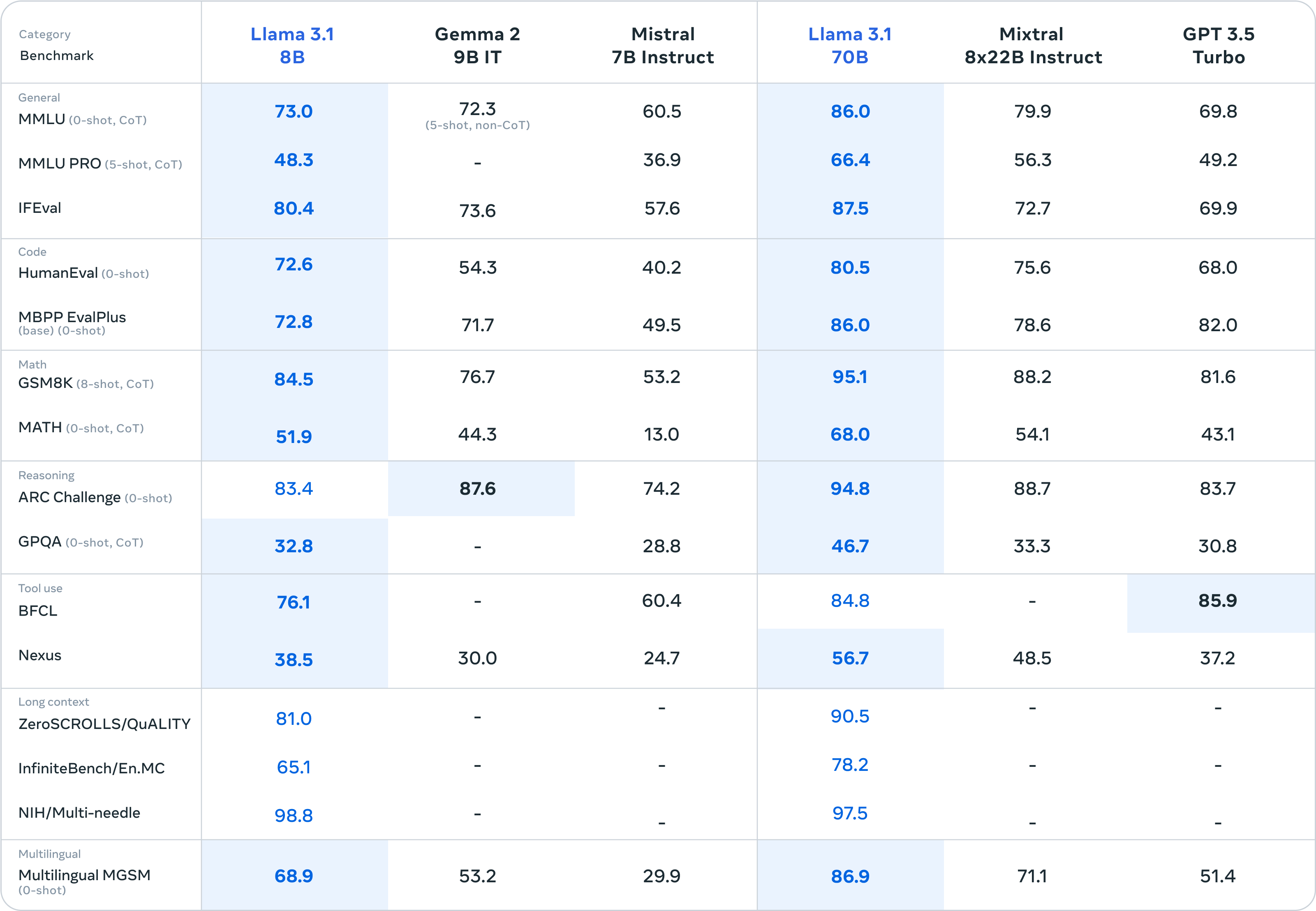

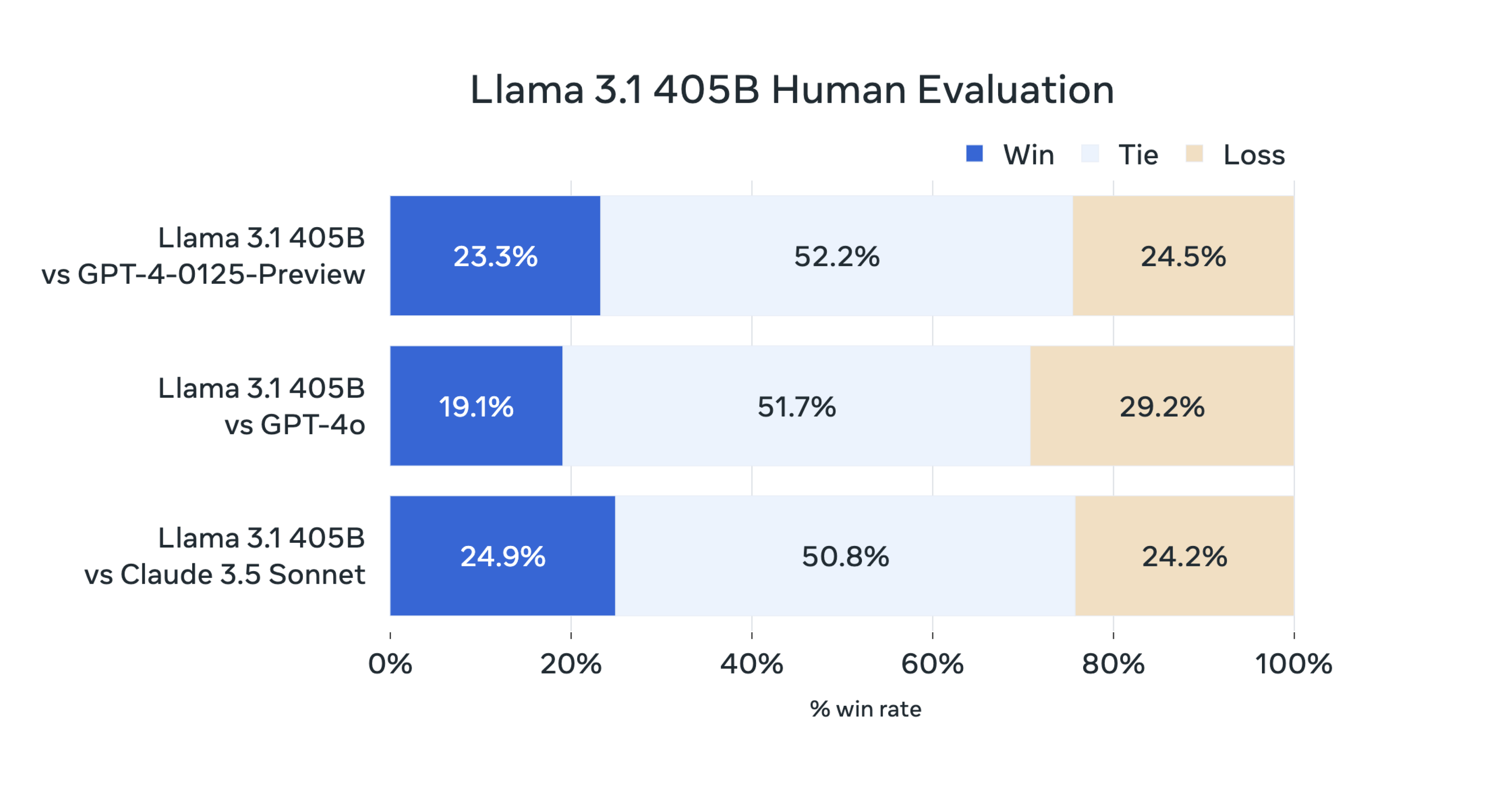

For this release, we evaluated performance on over 150 benchmark datasets that span a wide range of languages. In addition, we performed extensive human evaluations that compare Llama 3.1 with competing models in real-world scenarios. Our experimental evaluation suggests that our flagship model is competitive with leading foundation models across a range of tasks, including GPT-4, GPT-4o, and Claude 3.5 Sonnet. Additionally, our smaller models are competitive with closed and open models that have a similar number of parameters.

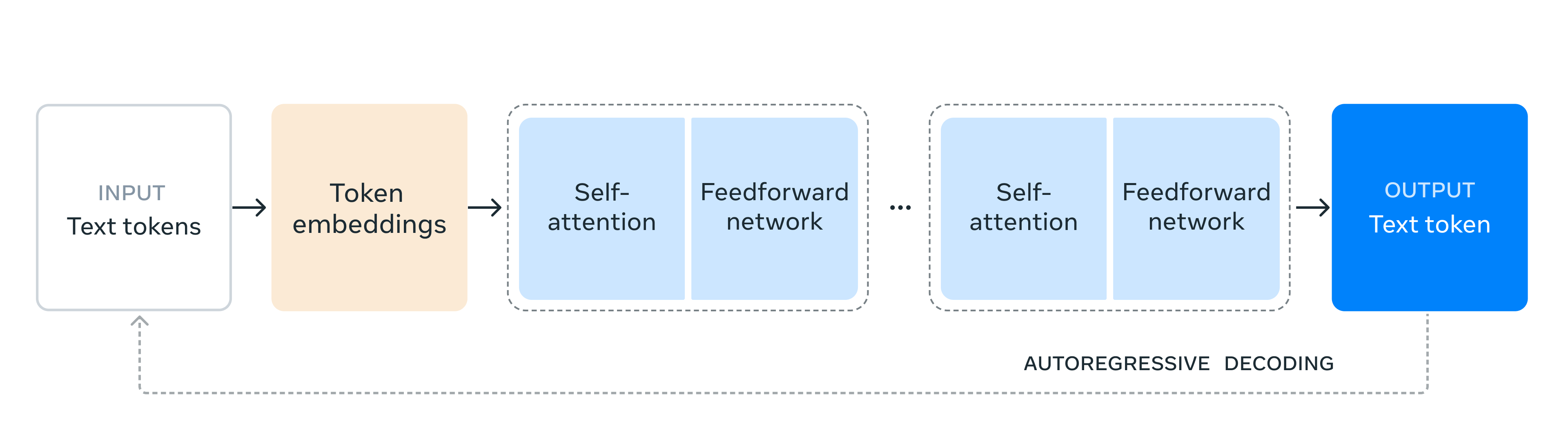

As our largest model yet, training Llama 3.1 405B on over 15 trillion tokens was a major challenge. To enable training runs at this scale and achieve the results we have in a reasonable amount of time, we significantly optimized our full training stack and pushed our model training to over 16 thousand H100 GPUs, making the 405B the first Llama model trained at this scale.
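For a rough sense of that scale, a common rule of thumb for dense transformers (not stated in the post) puts training compute at about 6 × parameters × training tokens. The sketch below applies it assuming exactly 405B parameters and 15T tokens; since the post says "over 15 trillion", treat the result (about 3.6 × 10^25 FLOPs) as a ballpark, not Meta's reported figure. The function name `training_flops` is hypothetical.

```python
def training_flops(params: float, tokens: float) -> float:
    """Approximate dense-transformer training compute with the 6*N*D rule of thumb:
    roughly 2 FLOPs per parameter per token for the forward pass, 4 for the backward pass."""
    return 6 * params * tokens

# Assumed inputs: 405B parameters, 15T training tokens.
flops = training_flops(405e9, 15e12)
print(f"Approximate training compute: {flops:.2e} FLOPs")
```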

See also: The Llama 3 Herd of Models paper here:

8B

Meta-Llama-3.1-8B

Meta-Llama-3.1-8B-Instruct

Llama-Guard-3-8B

Llama-Guard-3-8B-INT8

70B

Meta-Llama-3.1-70B

Meta-Llama-3.1-70B-Instruct

405B

Meta-Llama-3.1-405B-FP8

Meta-Llama-3.1-405B-Instruct-FP8

Meta-Llama-3.1-405B

Meta-Llama-3.1-405B-Instruct

You can download the models directly from Meta or one of our download partners: Hugging Face or Kaggle.

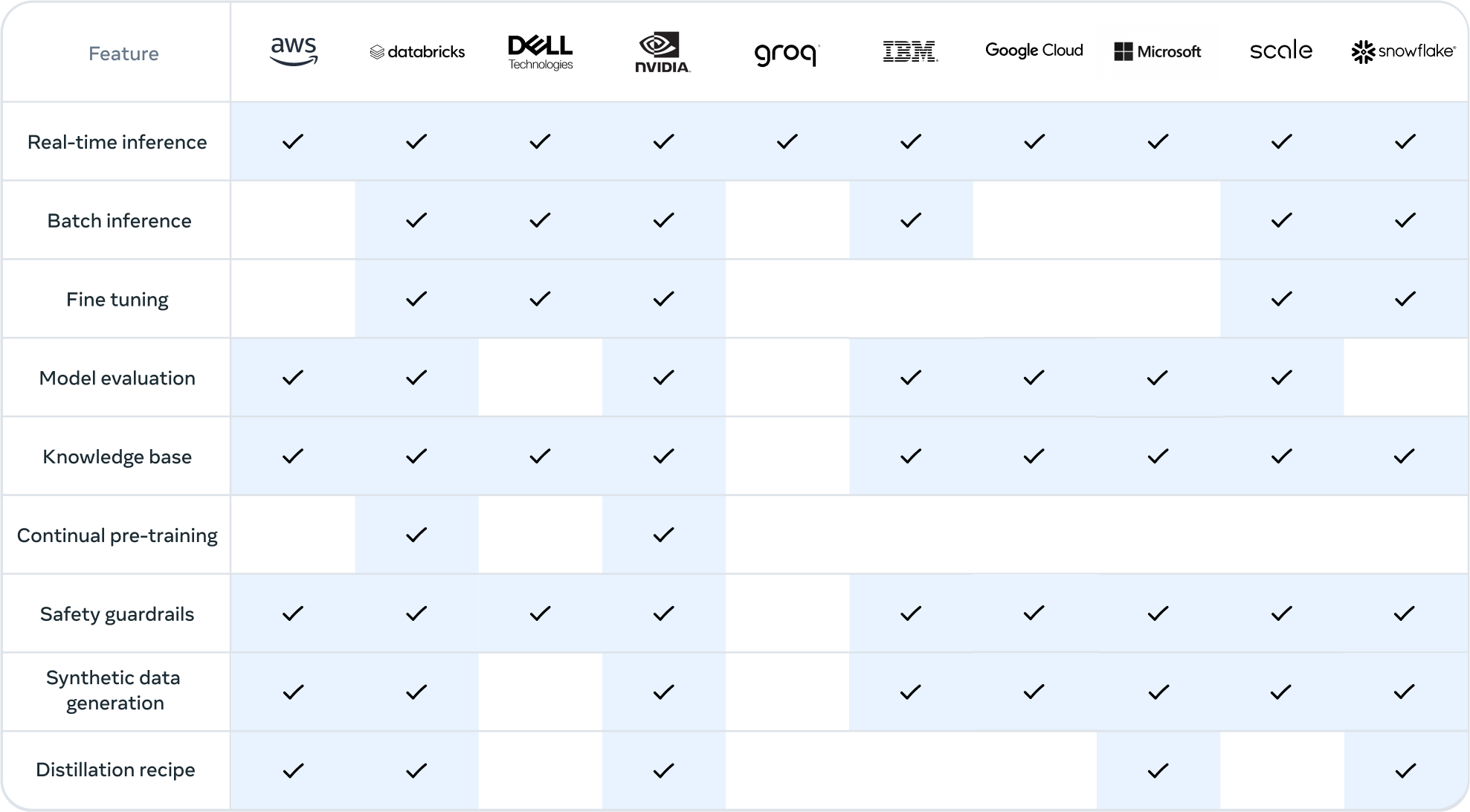

Alternatively, you can work with ecosystem partners to access the models through the services they provide. This approach can be especially useful if you want to work with the Llama 3.1 405B model.

Note: Llama 3.1 405B requires significant storage and computational resources: it occupies approximately 750 GB of disk space and needs two nodes running with MP16 (16-way model parallelism) for inference.
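The ~750 GB figure is consistent with storing 405B weights at 2 bytes each (BF16), which works out to about 754 GiB. The sketch below assumes that is where the number comes from, and also assumes 80 GB GPUs; it counts raw weights only (no KV cache, activations, or file overhead), so real deployments need more. The helper names are hypothetical.

```python
import math

GIB = 2**30  # bytes per GiB

def weight_size_gib(params: float, bytes_per_param: float) -> float:
    """Raw weight storage in GiB; ignores KV cache, activations and file overhead."""
    return params * bytes_per_param / GIB

def min_gpus_for_weights(params: float, bytes_per_param: float, gpu_mem_gb: float = 80) -> int:
    """Smallest number of GPUs (decimal GB of memory each) whose combined memory holds the raw weights."""
    return math.ceil(params * bytes_per_param / (gpu_mem_gb * 1e9))

PARAMS_405B = 405e9  # assumed exact parameter count
for label, nbytes in [("BF16", 2), ("FP8", 1), ("INT4", 0.5)]:
    size = weight_size_gib(PARAMS_405B, nbytes)
    gpus = min_gpus_for_weights(PARAMS_405B, nbytes)
    print(f"{label}: ~{size:.0f} GiB of weights, at least {gpus} x 80 GB GPUs")
```

This prints roughly 754, 377 and 189 GiB for BF16, FP8 and INT4. At BF16 the weights alone need at least 11 such GPUs, more than a single 8-GPU node, which fits with the two-node (16-GPU) requirement above; the FP8 variants listed earlier roughly halve the footprint.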

Learn more at:

Linux

Windows

Mac

Cloud

How-to Fine-tune Llama 3.1 models

Quantizing Llama 3.1 models

Prompting Llama 3.1 models

Llama 3.1 recipes

Rowan Cheung - Mark Zuckerberg on Llama 3.1, Open Source, AI Agents, Safety, and more

Matthew Berman - BREAKING: LLaMA 405b is here! Open-source is now FRONTIER!

Wes Roth - Zuckerberg goes SCORCHED EARTH.... Llama 3.1 BREAKS the "AGI Industry"*

1littlecoder - How to DOWNLOAD Llama 3.1 LLMs

Bloomberg - Inside Mark Zuckerberg's AI Era | The Circuit

https://qwenlm.github.io/blog/qwen2.5/

In the past three months since Qwen2's release, numerous developers have built new models on the Qwen2 language models, providing us with valuable feedback. During this period, we have focused on creating smarter and more knowledgeable language models. Today, we are excited to introduce the latest addition to the Qwen family: Qwen2.5. We are announcing what might be the largest open-source release in history!

Does Llama 3 rely on another model to generate images, or can the Llama 3 model do it by itself?

Can Llama 3 generate images with Ollama?

https://huggingface.co/CohereForAI/c4ai-command-r-08-2024


https://miniza.pages.dev/opensource/israeli-voice-recognition-startup-unveils-model-faster-than-openai

Hi everybody, I find that a huge part of my job is talking to colleagues and clients, and at the end of those phone calls I have to write a summary of what happened, plus any key points I need to follow up on.

I figured it would be an excellent task for an LLM.

It would need to intercept the phone call and transcribe the dialogue.

Afterwards, I would want it to summarize the transcript.

I'm not talking about Teams meetings or anything like that; I'm talking about a traditional phone call from a mobile phone to another phone.

I understand that this could take two different pieces of software, and that would be fine, but I'm wondering whether any such tool exists, or is in the making.

If you have any leads, I'd love to hear them.

Thank you so much
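The two-stage split described in this post (speech-to-text first, then an LLM for the summary) can be sketched for the second stage as below. This is only the glue between the stages: it assumes the call has already been captured and transcribed into speaker-labelled turns by some speech-to-text tool, and capturing phone audio is not shown. `Turn` and `build_summary_prompt` are hypothetical names; the returned string would be sent to whichever local or hosted model you use.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    speaker: str  # label assigned by the (assumed) transcription step, e.g. "Me" or "Client"
    text: str     # what that speaker said in this turn

def build_summary_prompt(turns: list[Turn]) -> str:
    """Format a speaker-labelled transcript into a prompt asking an LLM
    for a short summary plus a list of follow-up items."""
    transcript = "\n".join(f"{t.speaker}: {t.text.strip()}" for t in turns)
    return (
        "Below is a transcript of a phone call.\n"
        "1. Summarize what was discussed in a few sentences.\n"
        "2. List any key points or action items that need follow-up.\n\n"
        f"Transcript:\n{transcript}\n"
    )

# Example with a made-up two-turn call.
call = [
    Turn("Me", "Hi, just checking on the contract draft."),
    Turn("Client", "We'll send comments by Friday."),
]
print(build_summary_prompt(call))
```

Keeping the prompt builder separate from both the transcriber and the model means either piece of software can be swapped without touching the other.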

https://www.youtube.com/watch?v=zjkBMFhNj_g


https://mistral.ai/news/mistral-large-2407/

Today, we are announcing Mistral Large 2, the new generation of our flagship model. Compared to its predecessor, Mistral Large 2 is significantly more capable in code generation, mathematics, and reasoning. It also provides much stronger multilingual support and advanced function-calling capabilities.

https://old.reddit.com/r/StableDiffusion/comments/1do5gvz/the_open_model_initiative_invoke_comfy_org/

https://www.nature.com/articles/d41586-024-02012-5

Many of the large language models that power chatbots claim to be open, but restrict access to code and training data.