This could be so much longer.

Killing children, class systems, so many programming language names, the ridiculous ways equality and order-of-operations are done sometimes. Plenty of recursion jokes to be made. Big O notation. Any other ideas?

GOTO is the only thing that makes sense. It's the "high-level" concepts like for-loops, functions and list comprehension that ruined programming.

[series.append(series[k-1]+series[k-2]) for k in range(2,5)]

RAVINGS DREAMT UP BY THE UTTERLY DERANGED
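For what it's worth, the comprehension quoted above does run once `series` holds two starting values. A minimal sketch, assuming a seed of `[0, 1]` (the quote never shows how `series` starts) and using the made-up names `leftovers` and `plain` for comparison:

```python
# Assumed seed values; the quoted line does not show how `series` starts.
series = [0, 1]

# The comprehension from the quote, run purely for its side effect.
# Each append() returns None, so the list it builds is just a pile of Nones.
leftovers = [series.append(series[k-1] + series[k-2]) for k in range(2, 5)]

# The same work written as an ordinary loop.
plain = [0, 1]
for k in range(2, 5):
    plain.append(plain[k-1] + plain[k-2])

print(series)     # [0, 1, 1, 2, 3]
print(leftovers)  # [None, None, None]
print(plain)      # [0, 1, 1, 2, 3]
```

So it works, but the list the comprehension actually produces is thrown away, which is probably why it made the meme.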

I started coding with TurboBasic, which included the helpful innovation of GOTO {label} instead of GOTO {line number}, which allowed you to have marginally-better-looking code like:

GOTO bob

...

bob:

{do some useless shit}

return

which meant you essentially had actual, normal methods and you didn't have to put line numbers in front of everything. The problem was that labels (like variables) could be as long as you wanted them to be, but the compiler only looked at the first two letters. Great fun debugging that sort of nonsense.
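To show why that bites, here is a toy model of a label table that only keys on the first two letters. This is not TurboBasic's actual implementation, just a sketch of the behaviour described above; `build_label_table` and the sample labels are made up for illustration.

```python
def build_label_table(labels, significant=2):
    """Return (table, collisions), keying labels by their first `significant` letters."""
    table = {}
    collisions = []
    for line_no, name in labels:
        key = name[:significant].lower()
        if key in table:
            # A second label with the same prefix is indistinguishable from the first.
            collisions.append((name, table[key][0]))
        else:
            table[key] = (name, line_no)
    return table, collisions


# Hypothetical program with three labels at three line positions.
labels = [(100, "bob"), (200, "bonus"), (300, "alice")]
table, collisions = build_label_table(labels)

print(collisions)   # [('bonus', 'bob')]
print(table["bo"])  # ('bob', 100)
```

In this model a `GOTO bonus` would quietly land on `bob`, which is about the flavour of bug I remember.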

Masters and slaves

Cloning

Deploying code (that's what you do with soldiers!!!1)

Using Git to rewrite history.

Atomic values (like the bomb!)

These people are madmen.

One of the slave node's child processes failed, so the master node sent a signal to terminate the child and restart the slave.

There's a pretty solid reason my research group is pushing to use "head node and executor nodes" nomenclature rather than the old-school "master node and slave nodes" nomenclature, haha

The only reason I enjoy C++ is because I can cast destroy on children and their parents if they're present in the world

They always must add up - if they added down then they wouldn't be floating points now would they!
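Jokes aside, floating points famously don't always add up. A quick sketch using only the standard library (the values are just the usual textbook ones, not anything from the meme):

```python
import math

total = 0.1 + 0.2

# Binary floats cannot represent 0.1, 0.2 or 0.3 exactly, so the sum is slightly off.
print(total == 0.3)              # False

# Compare with a tolerance instead of exact equality.
print(math.isclose(total, 0.3))  # True

# Naive summation accumulates rounding error; math.fsum tracks it and corrects for it.
print(sum([0.1] * 10) == 1.0)        # False
print(math.fsum([0.1] * 10) == 1.0)  # True
```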

This is basically what banks are doing when you get a loan.

When you get a $25k loan from a bank, the banker does not take money from somewhere to put it in your bank account. The banker basically just adds a +25k to your bank account that comes from nowhere.

Depending on what your coworker actually intended to do, you might want to let them know that printers have features built in to make their output traceable, specifically intended for catching counterfeiters.

That's one of these things that sounds like a crazy conspiracy theory the first time you hear about it, but it's true

Edit: haven't actually clicked the link. I mean the yellow dots the printer makes, which are directly traceable to your printer: https://en.m.wikipedia.org/wiki/Machine_Identification_Code

Basically all printer manufacturers entered a secret contract with governments.

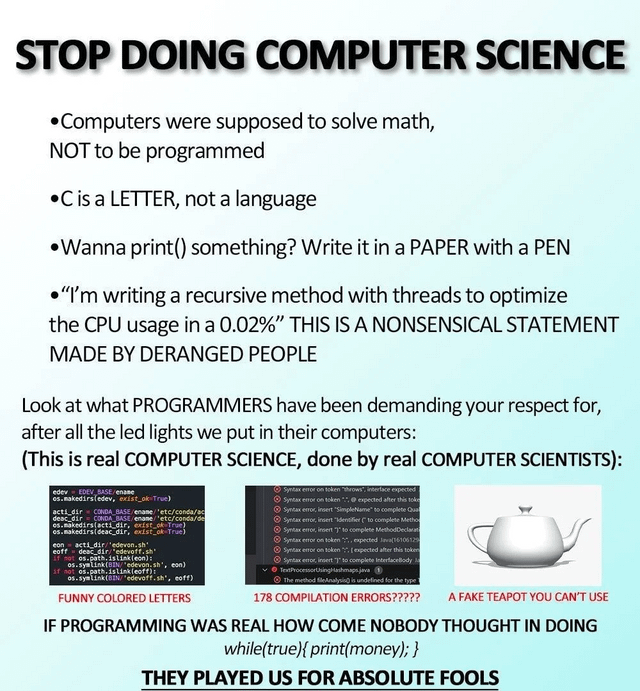

Thanks! In computer graphics it's referred to as the "Utah teapot" because the 3D model was created at the University of Utah. But it was originally a Melitta brand teapot. It is still manufactured by German company Friesland, which I bought it from.

Unfortunately it appears they recently had a fire and their webshop is temporarily closed, but I think you can also get it off of Amazon.

I read once that the original model didn't have a bottom surface? Idk but I suspect that's why it's referred to as useless in the meme.

I'm more of a computer-science geek than a tea geek, so all I can say is that it pours without spilling. You won't get a laminar flow out of it or anything like that.

Enough people have thought of while (true){ print(money); } for manufacturers to have built stuff into printers to prevent that, alas.

Indeed. The amount of work that's gone into prevention and into ways to identify who's done it is not insignificant.

Can someone explain this joke to me

"I'm writing a recursive method with threads to optimize the CPU usage in a 0.02%"

I understand everything apart from the "in a 0.02%". What does that mean? How can something be in a percentage?

It's a double joke. For programmers, it's pretty useless unless you're in high performance computing.

If you're working down at the nitty-gritty OS or CPU level, a 0.02% optimization can mean a significant improvement in various things, but because the figure is otherwise unitless, it is equally useless to the reader.

Increasing the CPU optimization by 0.02% does seem crazy to me. If you're going to spend time working on something, make it worthwhile. Also, isn't while(true) {print(money)} Microsoft, Apple and Amazon's business model?

I mean if the CPU that's running these instructions is super low power then 0.02 might be worth it

Or if you're scaling a large cluster of CPUs for parallel computations where a 0.02% increase can make a tangible difference in runtimes.

Only if you'd removed and fixed all other bottlenecks that would gain you more than 0.02%. And I'm not convinced there are many if any projects of any reasonable size where that has been the case.
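Rough arithmetic for the scale argument above, as a sketch. The workload sizes are invented for illustration and `cpu_hours_saved` is a made-up helper; nothing here is measured.

```python
def cpu_hours_saved(cpu_hours):
    """CPU-hours saved by a 0.02% speedup (0.02% is 2 parts in 10,000)."""
    return cpu_hours * 2 / 10_000


# Hypothetical workloads, in CPU-hours.
workloads = {
    "one-off script": 1,
    "nightly batch job": 10_000,
    "large cluster campaign": 1_000_000,
}

for name, cpu_hours in workloads.items():
    print(f"{name}: {cpu_hours_saved(cpu_hours)} CPU-hours saved")
```

Under those assumptions the cluster campaign gets back 200 CPU-hours, while the one-off script saves well under a second, so both sides of the argument hold depending on scale and on whether bigger bottlenecks are still lying around.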

while{true}{print "money"};

moneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoney

moneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoneymoney

In programming, things are 0-indexed, meaning the first of something is 0, the second is 1, etc.
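A tiny sketch of what that looks like in practice, using a made-up list called `things`:

```python
things = ["apple", "banana", "cherry"]

# The first element lives at index 0, the second at index 1, and so on.
print(things[0])  # apple
print(things[1])  # banana

# The last valid index is always one less than the length.
last_index = len(things) - 1
print(last_index)          # 2
print(things[last_index])  # cherry

# enumerate() also starts counting at 0 unless told otherwise.
indexed = list(enumerate(things))
print(indexed)  # [(0, 'apple'), (1, 'banana'), (2, 'cherry')]
```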

I always wonder what the original post was. Something like "Stop doing science!" or some shit but seriously rather than sarcastically.

Funnily enough, helical apple slicers can easily produce the shape depicted in the bottom quote, making it a not unreasonable request.

I see you met my boss.

Not actually the case, but I am frustrated with them right now for not understanding the value of preventative work and R&D (I'm a Data Scientist).

Well, they could be talking about a computer science paper. Otherwise, it should be "Write it on paper" (no a).

Socrates said books were dumbing down humanity because, since people could just look things up in books they wouldn’t have to memorise information anymore, and that made their brains soft.

Ever since society began, some people have been convinced the next generation’s technology was going to be society’s downfall, whether it was Socrates’ books, the telegraph in the 1800s, radio, the (land line) telephone, dishwashers (women will become lazy and unsuitable wives and mothers), screened windows (society will collapse because you won’t hear your neighbours and pedestrians on the street, we’ll all become hermits and die holed up in our homes), comic books would rot the brains of the youth, then music, then video games… it goes on and on.

So far, those predictions have never been true. Every older generation freaks out when the ones after come of age. It’s like societal growing pains.