I think every touch-up besides color correction and cropping should be labeled as "photoshopped". And any use of AI should be labeled as "Made with AI" because it cannot show which parts are real and which are not.

Besides, this is totally a skill issue. Removing this metadata is trivial.

Some of the more advanced color correction tools can drastically change an image. There’s a lot of gray in that line as well.

DOD Imagery guidelines state that only color correction can be applied, to "make the image appear the same as it was when it was captured"; otherwise it must be labeled "DOD illustration" instead of "DOD Imagery".

Sure, but you could also achieve a similar effect in-camera by zooming in or moving closer to the subject.

A lot of photographers will take a photo with the intention of cropping it. Cropping isn’t photoshopping.

You don’t have to open Photoshop to do it. Any basic editing software will include a cropping tool.

Yes. I think the question was whether it should be labeled as “photoshopped” (or probably “manipulated”). I don’t think it should. Those labels would be meaningless if you can’t even change the aspect ratio of a photo without it being called “photoshopped”.

There are absolutely different levels of image editing. Color correction, cropping, scaling, and rotation are basic enough that I would say they don’t even count as alterations. They’re just correcting what the camera didn’t, and they're often available in the camera's built-in software. (Fun fact: what the sensor sees is not what it presents to you in a JPEG.) Then there are more deceptive levels of editing, like removing or adding objects, altering someone’s appearance, or swapping faces from different shots. Those are definitely image alterations, and what most people mean when they say an image is “photoshopped” (and you know that, don’t lie). Then there’s AI, where you’re just generating new information to put into the image. That’s extreme image alteration.

These all can be done with or without any sort of nefarious intent.

Agreed. Photo editing has great applications but we can't pretend it's never used maliciously.

Film too: any trickery in the darkroom should be labeled because it cannot show which parts are real and which are not.

Why label it if it is trivial to avoid the label?

Doesn't that mean that bad actors will have additional cover for misuse of AI?

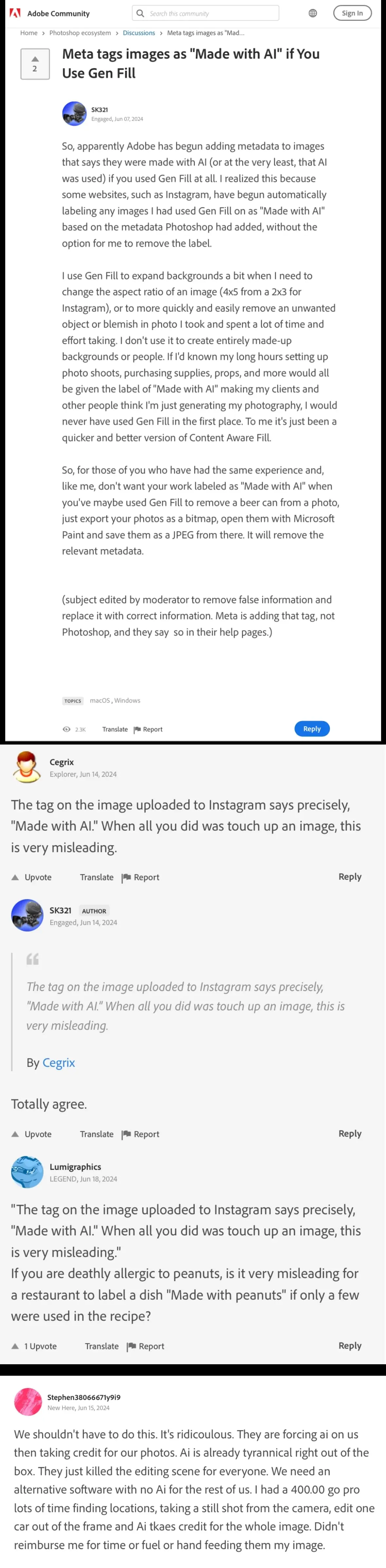

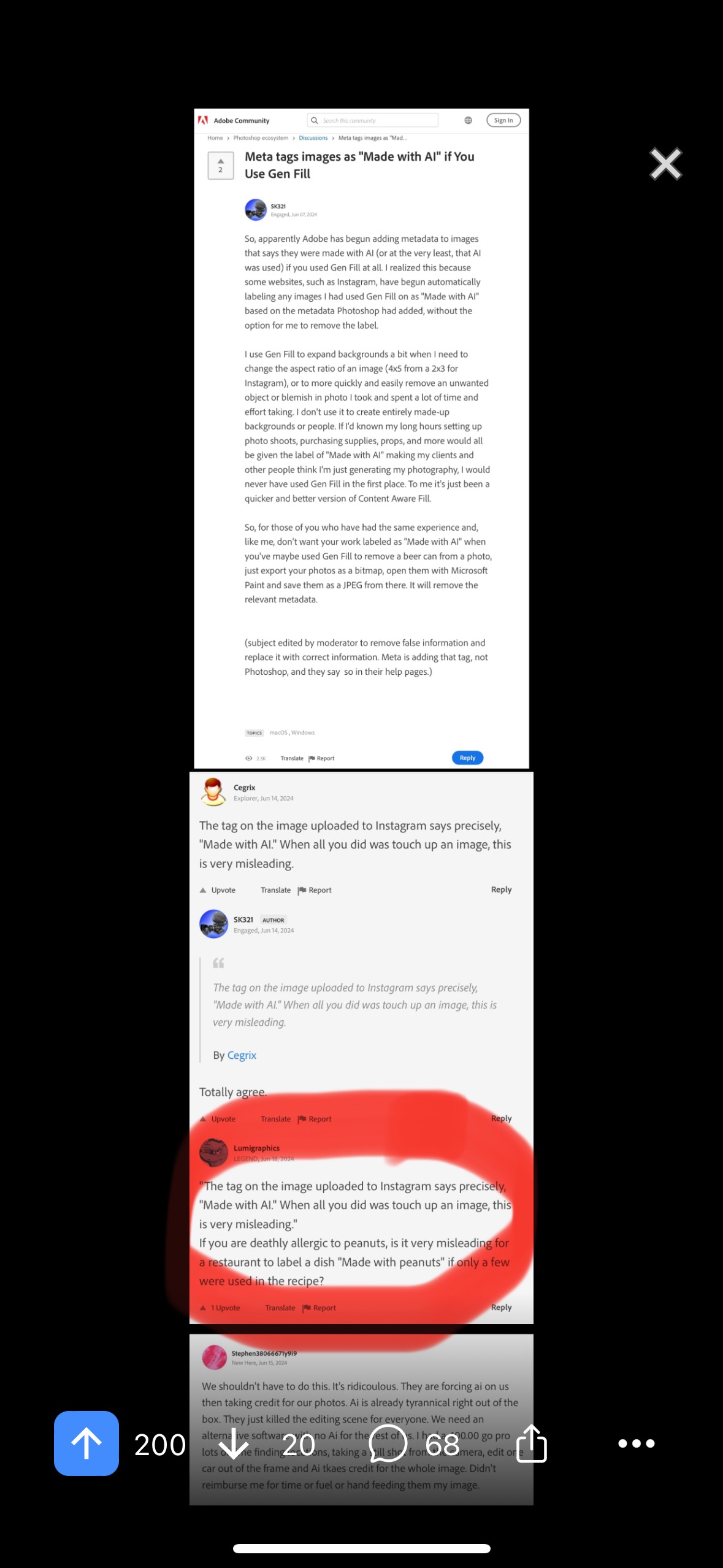

People are complaining that an advanced fill tool that’s mostly used to remove a smudge or something is automatically marking a full image as an AI creation. As it stands, if someone actually wants to bypass this “check”, all they have to do is strip the image’s metadata before uploading it.
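For anyone curious why stripping metadata defeats a label like this: a metadata-based check can only see what is embedded in the file. Below is a naive Python sketch, assuming the check simply looks for the IPTC digital source type terms (`trainedAlgorithmicMedia`, `compositeWithTrainedAlgorithmicMedia`) somewhere in the file. How the platform actually decides isn't stated in this thread, and `has_ai_marker` and `strip_metadata` are hypothetical names for illustration. The second function walks the JPEG marker segments and drops APP1 (EXIF/XMP), APP11 (JUMBF boxes, e.g. C2PA manifests) and APP13 (Photoshop/IPTC) while copying the compressed pixel data untouched.

```python
import struct

# IPTC "digital source type" terms a naive AI-edit label might key on.
# Assumption: the real platform check is not documented in this thread.
AI_SOURCE_TYPES = (
    b"trainedAlgorithmicMedia",
    b"compositeWithTrainedAlgorithmicMedia",
)

# JPEG segments that typically carry metadata rather than pixels:
# APP1 = EXIF/XMP, APP11 = JUMBF (e.g. C2PA manifests), APP13 = Photoshop/IPTC.
METADATA_MARKERS = {0xE1, 0xEB, 0xED}


def has_ai_marker(jpeg: bytes) -> bool:
    """Hypothetical naive check: does any AI source-type term appear in the file?"""
    return any(term in jpeg for term in AI_SOURCE_TYPES)


def strip_metadata(jpeg: bytes) -> bytes:
    """Copy a JPEG, dropping metadata segments but keeping the image data."""
    if jpeg[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG")
    out = bytearray(b"\xff\xd8")
    i = 2
    while i + 1 < len(jpeg):
        if jpeg[i] != 0xFF:
            raise ValueError(f"expected a marker at offset {i}")
        marker = jpeg[i + 1]
        if marker == 0xFF:  # fill byte; the real marker follows
            i += 1
            continue
        if marker == 0xDA:  # SOS: everything after is compressed pixel data
            out += jpeg[i:]
            break
        if marker == 0xD9:  # EOI: end of image
            out += jpeg[i:i + 2]
            break
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", jpeg[i + 2:i + 4])
        end = i + 2 + length
        if marker not in METADATA_MARKERS:
            out += jpeg[i:end]  # keep non-metadata segments (APP0, tables, ...)
        i = end
    return bytes(out)
```

The pixels come out byte-for-byte identical; only the embedded "paperwork" is gone, which is why a label driven purely by metadata is so easy to sidestep.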

Right? I thought I'd gone crazy when I got to "I just used Generative Fill!" Like, he didn't just auto-adjust the exposure and black levels! C'mon!

I totally agree with a streamlined identification of images generated by an AI prompt. But labeling an image with "made with AI" metadata when the image is original, taken by a human, and simply edited with AI tools is absolutely misleading, and the language can create confusion. It is not fair to the individual who created the original work without the use of generative AI. I simply propose revising the language to create a distinction.

Where I live, it's very difficult to get permits to knock down an old building and build a new one. So builders will "renovate" by knocking down everything but a single wall and then building a new structure around it.

I can imagine people using that to get around the "made with ai" label. "I just touched it up!"

It’s like they’re ignoring the pixel I captured in the bottom left!

Really interesting analogy.

Also I imagine most anybody who gets a photo labeled will find a trick before making their next post. Copy the final image to a new PSD… print and scan for the less technically inclined… heh

Or generated with AI like Midjourney, therefore, made with AI.

There's a huge difference between the two, yet no clear distinction when they're all lumped under the label of "made with AI".

yeah, i use Lightroom ai de-noise all the time now. it's just a better version of a tool that already existed, and one that every phone does by default anyway.

And I use AI to determine the right brightness level for my phone screen (that was a feature added several android versions ago)

Artists in 2023: "There should be labels on AI modified art!!"

Artists in 2024: "Wait, not like that..."

no, they just replaced the normal tools with ai-enhanced versions and are labeling everything like that now.

ai noise reduction should not get this tag.

I don't know where you got that from, but this post literally talks about tools such as gen fill (select a region, type what you want in it, and AI image generation makes it and places it in).

No - I don't agree that they're completely different.

"Made by AI" would be completely different.

"Made with AI" actually means pretty much the exact same thing as "AI was used in this image" - it's just that the former lays it out baldly and the latter softens the impact by using indirect language.

I can certainly see how "photographers" who use AI in their images would tend to prefer the latter, but bluntly, fuck 'em. If they can't handle the shame of the fact that they did so they should stop doing it - get up off their asses and invest some time and effort into doing it all themselves. And if they can't manage that, they should stop pretending to be artists.

I think it is a bit of an unclear wording, personally. "Made with", despite technically meaning what you're saying, is often colloquially used to mean "fully created by". I don't mind the AI tag, but I do see the photographers' point about it implying wholesale generation instead of touch-ups.

or... don't use generative fill. if all you did was remove something, regular methods do more than enough. with generative fill you can just select a part and say now add a polar bear. there's no way of knowing how much has changed.

there's a lot more than generative fill.

ai denoise, ai masking, ai image recognition and sorting.

hell, every phone is using some kind of "ai enhanced" noise reduction by default these days. these are just better versions of existing tools that have been used for decades.

Can't wait for people to deliberately add the metadata to their images as a meme, so that a legit photograph without any AI used gets the unremovable "made with ai" tag.

Why many word when few good?

Seriously though, "AI" itself is misleading but if they want to be ignorant and whiny about it, then they should be labeled just as they are.

What they really seem to want is an automatic metadata tag that is more along the lines of "a human took this picture and then used 'AI' tools to modify it."

That may not work because by using Adobe products, the original metadata is being overwritten so Thotagram doesn't know that a photographer took the original.

A photographer could actually just type a little explanation ("I took this picture and then used Gen Fill only") in a plain text document, save it to their desktop, and copy & paste it in.

But then everyone would know that the image had been modified - which is what they're trying to avoid. They want everyone to believe that the picture they're posting is 100% their work.

We've been able to do this for years, way before the fill tool utilized AI. I don't see why it should be slapped with a label that makes it sound like the whole image was generated by AI.

This isn't really Facebook. This is Adobe not drawing a distinction between smart pattern recognition for backgrounds/textures and real image generation of primary content.

I don't think that's fair. AI won't turn a bad photograph into a good one. It's a tool that quickly and automatically does something we've been doing by hand until now. That's kind of like saying a photoshopped picture isn't "good" or "real". They're all photoshopped. Not a single serious photographer releases unedited photos, except perhaps the ones shooting on film.

Even film photographers touch up their photos, either during development, by adjusting how long the film sits in each chemical bath, or by using different shaking/mixing (agitation) methods and techniques.

If they enlarge their negatives onto photo paper, they often have tools to add lightness and darkness to different areas of the paper to help with exposure, contrast and subject highlighting, a.k.a. dodging and burning, which is also available in most photo editing software today.

There are loads of ways to improve developed photos, and it's something photographers and developers have always done. People who still go with the "Don't edit photos" BS are usually not very well informed about photo history or the techniques of their own photography inspirations.

The image looks like OP cherry-picked some replies in the original thread. I wonder how many artists still want AI-assisted art to be flagged as such.

EDIT: The source is also linked under the images. They did leave out all the comments in favour of including AI metadata, but naturally they're there in the source linked under the images.

💯

Absolutely cherry-picked. Let us know if you peruse the source:

Without cherry-picking… I imagine these will be resized to the point of illegibility:

It's unreasonable to make them illegible for no good reason; you could've included them as-is, possibly in multiple, smaller images. It's also far more common to just share a link rather than an image post, as we'll have to see the link anyway.

I didn't see the source, though; I've updated my comment to reflect that.

He just won’t stop!

Aight, I repeated “cherry-picked” earlier… no:

“Curated.” Was happy to curate a few of the more interesting comments for our community.

If I weren’t so lazy I might’ve found another comment in favor of the labeling to bump up the screenshotted proportion of replies in support from the 25% seen in my OP. Still, think I did an aight job.

Okayyy night now haha

Thanks for the edit. We all love that intellectual honesty!

Don’t miss this absolute roast though:

Roasted and salted 🥜

Now -

1: I should’ve been more clear… those full screen screenshots are so enormous, Lemmy has to compress them for cost and UX reasons.

2: Screenshot over link is a very intentional choice. Even if you’re positive you would’ve clicked based on the title, there are some great responses in this thread that I guarantee you we would not have been blessed with if this post had been a link instead of an image.

Everyone is busy. Lots of us work away on keyboards all day, and we hop on here just to scroll casually. Some huge forum thread? Forget it! A little screenshot that has teasers and can be digested bit by bit, with the leading post in the image helping folks decide whether they care enough to read the rest of the image and then find the source (either via an OP's or commenter’s source link, or an exact-match web search of an OCR’d phrase from the image)? That’s the best shot we have at easing as many people as possible into a topic. (I do feel bad for the visually impaired; hopefully the source link is a decent stand-in.) But for 98% of us this is prob the way. Aight, maybe 95%, you got a good community response to your comment :)

Thanks for chiming in m’lord

As a photographer I'm a bit torn on this one.

I believe AI art should definitely be labeled to minimize people being misled about the source of the art. But at the same time, the OP of the Adobe forums post did say they used it like any other tool, for touching up and fixing inconsistencies.

If I were, for example, to arrange a photoshoot with a model and they happened to have a zit on their forehead that day, of course I'm gonna edit that out. Or if an assistant happened to get into the shot, but I don't want to crop in and make the background and feel of the photo tighter, I would gladly remove them too. Sure, Adobe already has the patch, clone and even magic eraser tools (the last of which also uses AI, and might or might not mark photos) to do these fix-ups, but if I can use AI that I hope is trained on data they're actually allowed to train on, I think I would prefer that. Rather than spending 10 to 30 minutes fixing blemishes, zits and whatnot, I'd much prefer to use the AI tools to get my job done quicker.

If, however, the tools were used to heavily change, modify and edit the scene and subject, then for sure, it might be best to add that.

Wouldn't it be better not to discourage the use of editing tools when they're used in a way that just makes one's job quicker? If I were to use Lightroom's subject quick selection, should the label be slapped on then? Or if I were to use an editing preset created with AI that automatically adjusts the basic settings of an image, and continue my editing from there, should the label be added then? Or what if I have a flat white background with some tapestry pattern, and instead of spending hours getting the alignment of the pattern just right while fixing a minor aspect ratio issue or getting a bit more breathing room around the subject, I use the AI tool mentioned in the OP?

The things OP mentioned in his post and the scenarios I mentioned are all things you can do without AI anyway; it just takes a lot longer sometimes. There's no cheating in using the right tool for the right job, IMO. I don't think it's too far off from a clay sculptor using an ice cream scoop with ridges to create texture, or a Dremel to touch up and fix corners. Or a painter using different tools, brushes and scrapers to finish their painting.

Perhaps, if we want to make the labels "fair", there should also be a label saying the photo has been manipulated by a program in general, or maybe a percentage indicator showing how much of it has been edited specifically with AI. Slapping an "AI" label on someone because they used a different tool to get equal results on normal touch-ups could be damaging to one's career and credibility when the label doesn't say how much of the image was AI or to what extent. Someone looking for their next wedding photographer might now be discouraged because of the bad rep surrounding AI.

> trained on data they're actually allowed to train on

That’s the ticket. For touch-ups, certainly, that’s the key: did theft help, or not?

Indeed, if the AI was trained on stolen data, it's neither right on their part nor ethical on mine.

I did some searching but sadly don't have time to look into it more. There were some concerning articles suggesting they either used shady practices to get their training data or made users manually check an opt-out box in the app settings.

I can't form an opinion on that before looking into it more, but I still believe my core argument about using AI in this manner somewhat holds, even if the data were your own, used on your own AI trained with allowed resources.

I saw this coming from a mile away. We will now have to set standards for what's considered "Made by AI" versus "Made with AI".