telep commented on Trying to understand Consent Forms, Cookies and Third-Party Vendors • •

thank you 🙏

I meant that if you look at the "purpose" section of each cookie, the ones with lifetimes longer than 180 days are the only ones that don't mention advertising. I'm thinking they may be related to the "necessary" or "required" cookies that some websites have. I would presume they fall under their own, or an altered, version of the policy that covers the other cookies, since they serve different purposes.

apologies, I worded that poorly before.

telep commented on Trying to understand Consent Forms, Cookies and Third-Party Vendors • •

while I am by no means an expert on this, my gut tells me this probably has something to do with "necessary" cookies vs advertising & tracking cookies. it's a common loophole in other policies, so I wouldn't be surprised if they had some way of circumventing the normal limits on tracking because of "fraud protection" or the like.

looking at the cookie descriptors, all of the 1825 day cookies (roughly five years) are used to "store &/or access information on device refreshes". the shorter-lived cookies are the only ones that also mention "measuring advertising & content performance".
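
if anyone wants to check the same pattern on their own consent form, here is a rough sketch of the comparison I did by eye. the `Cookie` class, the sample entries, and the 180 day threshold are all made up for illustration & are not taken from any real vendor list, so swap in whatever the form actually shows.

```python
from dataclasses import dataclass


@dataclass
class Cookie:
    name: str               # hypothetical label, not a real cookie name
    max_age_days: int       # lifetime as listed in the consent form
    purposes: tuple         # purpose strings as listed in the consent form


def split_by_lifetime(cookies, threshold_days=180):
    """Split cookies into (long_lived, short_lived) around a day threshold."""
    long_lived = [c for c in cookies if c.max_age_days > threshold_days]
    short_lived = [c for c in cookies if c.max_age_days <= threshold_days]
    return long_lived, short_lived


def mentions_advertising(cookie):
    """True if any listed purpose contains the word 'advertising'."""
    return any("advertising" in p.lower() for p in cookie.purposes)


# Hypothetical sample entries, only here to make the sketch runnable.
SAMPLE = [
    Cookie("example_long", 1825, ("store and/or access information on a device",)),
    Cookie("example_short", 180, (
        "store and/or access information on a device",
        "measure advertising and content performance",
    )),
]

long_lived, short_lived = split_by_lifetime(SAMPLE)
long_with_ads = [c.name for c in long_lived if mentions_advertising(c)]
short_with_ads = [c.name for c in short_lived if mentions_advertising(c)]
print("long-lived cookies mentioning advertising:", long_with_ads)
print("short-lived cookies mentioning advertising:", short_with_ads)
```

if the first list comes back empty on a real form, that would match what I described, but it still only tells you what the vendor chose to declare.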

telep commented on Disable VPN while browsing casual or leave running? • •

ahhh I see what you mean.

your thoughts on spacing out your connections & isolating them are smart. unfortunately, if you connect from the same device & browser, any government agency or dedicated company with a big enough dataset (google, meta, etc.) would still be able to link you regardless of your IP, by browser fingerprint alone. this makes YouTube in particular being linked to your exact browser fingerprint problematic in a high stakes situation since, as you said, it is linked to your identity.
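
to make the fingerprint point concrete, here is a toy sketch. real fingerprinting uses many more signals (canvas, audio, installed fonts & so on) & fuzzier matching, so treat the attribute names & values below as placeholders I made up, not as what any tracker actually collects.

```python
import hashlib
import json


def fingerprint(attrs: dict) -> str:
    """Hash a dict of browser attributes into a short, stable identifier."""
    canonical = json.dumps(attrs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Hypothetical browser attributes; the IP is deliberately not part of them.
browser = {
    "user_agent": "ExampleBrowser/1.0",
    "screen": "1920x1080",
    "timezone": "UTC",
    "fonts": ["Font A", "Font B"],
}

# Same browser seen from two different addresses
# (both are reserved documentation IP ranges).
visit_home = {"ip": "192.0.2.10", "fp": fingerprint(browser)}
visit_vpn = {"ip": "203.0.113.7", "fp": fingerprint(browser)}

same_browser = visit_home["fp"] == visit_vpn["fp"]
print("IPs differ:", visit_home["ip"] != visit_vpn["ip"])
print("fingerprints match:", same_browser)
```

the IP changes between the two visits but the identifier does not, which is why switching servers alone doesn't separate the sessions.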

for lower level tracking changing IP regularly is effective. however, instead of switching to your local IP it would be more privacy conscious to just switch to a different VPN server.

unfortunately, if you are genuinely worried about government level surveillance or the like, you enter territory where VPNs often no longer cut it (or at least can't truly be trusted to), as they are centralized & can be forced to make exceptions for law enforcement. traffic analysis is also easier against a VPN, which makes time correlation deanonymization a more realistic risk when talking about government agencies specifically.

the tor + vpn debate is one that lots of people argue about & is exceedingly complicated. tor is generally more than enough, unless you are wanted by INTERPOL haha. if you are genuinely worried about an oppressive government or world powers targeting you, look further into tor, & do not connect directly through your ISP at all, as that data is essentially up for grabs to local authorities (depending on locale).

for you specifically, I would consider doing your more sensitive tasks in the tor browser without the VPN & keeping your normal browser always on the VPN, so the two would be more difficult to correlate. anything torrent related is low enough stakes that I would imagine just about any proxy would suffice. hope this was helpful 🙏.

telep commented on Disable VPN while browsing casual or leave running? • •

tldr; no. if you trust your vpn more than your ISP, always use it, as any hit to fingerprinting is negligible.

it really can't hurt much to always be using it. any fingerprinting metric it adds is outweighed by hiding your IP behind the proxy, since your IP is the #1 unique identifier that is tied back to people/locations.

the other fingerprinting metrics are still exposed anyway & could probably be linked back to "you" regardless of your IP changing if they wanted to.

if you are worried about fingerprinting, look into some projects like mullvad, librewolf, or even tor. clearing cookies on quit &/or having a separate browser for permanent logins/tokens to live in is also a good mitigation technique.

telep commented on What VPN are you using? • •

oh damn, I see. sorry you can't get the apps to work. I guess I'll do a little digging on my side. in the meantime I hope I've been at least somewhat helpful haha 🙏

telep commented on ... • •

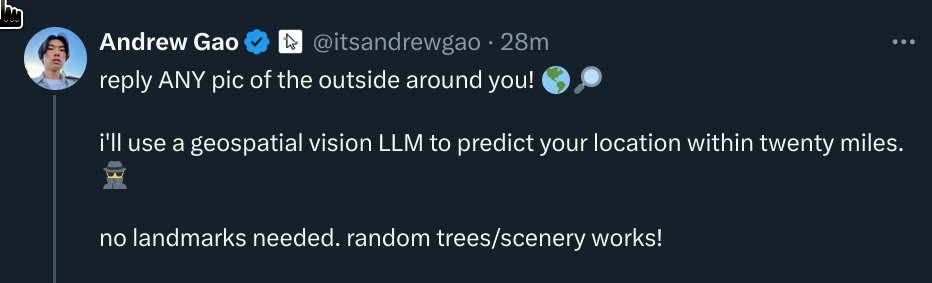

important second frame for context!

& no it isn't. I'm quite sure twitter broke link previews a long time ago, alongside guest accounts.

telep commented on ... • •

this is extremely scary if true. are these algorithms obtainable by everyday people? do they work only in heavily photographed areas, or do they infer based on things like climate, foliage, etc? I would love some documentation on these tools if anyone has any.

telep commented on What VPN are you using? • •

I wouldn't insist on using the "official instance", as that kind of works against the project's purpose of decentralizing web requests. pinging around instances helps with avoiding outages as well. you can find active instances here on the uptime monitor.

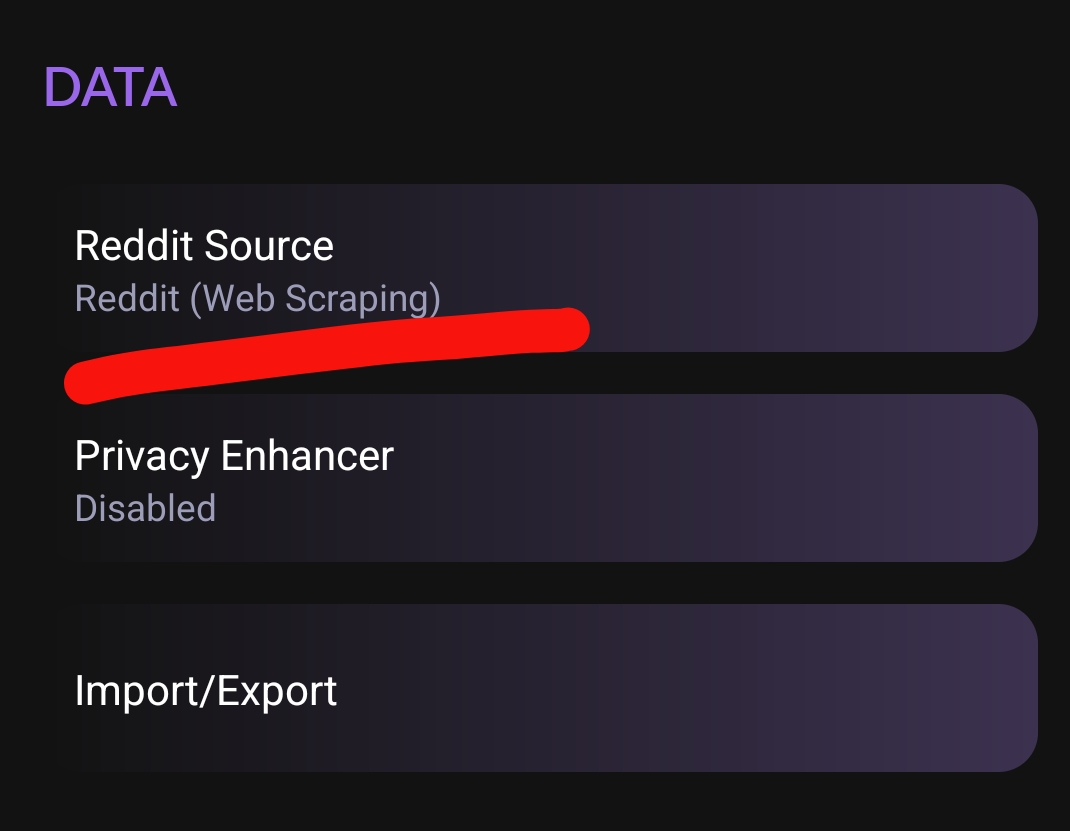

otherwise, stealth should work just fine if you turn on webscraping mode. I have been using it since the reddit changes with only very occasional issues since, similar to you, I have some qualms with alternative reddit clients.
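
on the "pinging around instances" part, this is roughly the idea as a sketch: shuffle a list of instances & take the first one that responds. the instance URLs are placeholders on `.example` (not real instances), & `is_up` is a stand-in you would replace with an actual health check or with whatever the uptime monitor reports.

```python
import random
from typing import Callable, Iterable, Optional


def pick_instance(
    instances: Iterable[str],
    is_up: Callable[[str], bool],
    rng: Optional[random.Random] = None,
) -> Optional[str]:
    """Return a randomly chosen instance that passes is_up, or None."""
    rng = rng or random.Random()
    candidates = list(instances)
    rng.shuffle(candidates)          # spread requests instead of favoring one host
    for url in candidates:
        if is_up(url):
            return url
    return None                      # every instance failed the check


# Placeholder instance list; none of these hosts are real.
INSTANCES = [
    "https://instance-a.example",
    "https://instance-b.example",
    "https://instance-c.example",
]


def fake_is_up(url: str) -> bool:
    """Stand-in health check that pretends instance-b is down."""
    return url != "https://instance-b.example"


chosen = pick_instance(INSTANCES, fake_is_up)
print("using:", chosen)
```

picking at random rather than always starting from the top of the list is the point, since it keeps any single instance from seeing all of your requests.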

telep commented on What VPN are you using? • •

redlib is the updated version of libreddit & it seems to have circumvented the issues reddit caused so maybe consider giving it another try.

I think stealth also broke when they removed free API access, although I believe you can still use the webscraping mode by changing it in the settings. if you want another option maybe try out RedReader or Geddit.