Nerd update 2/9/23

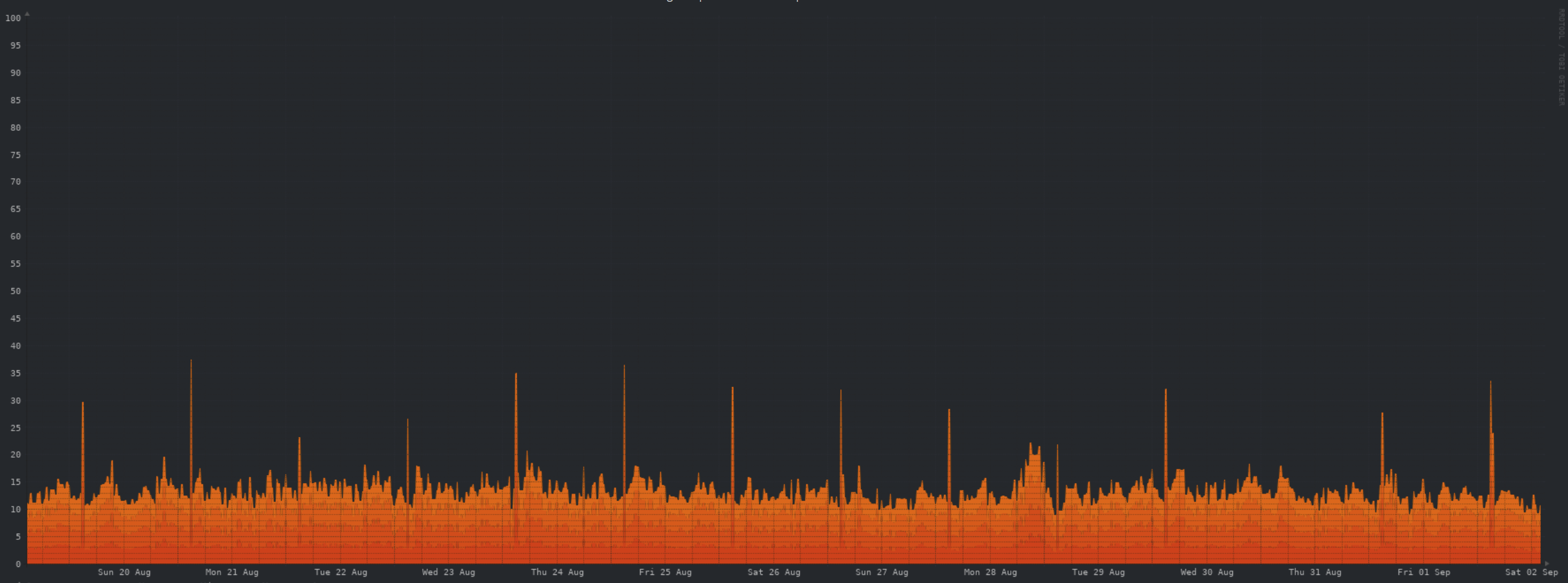

CPU:

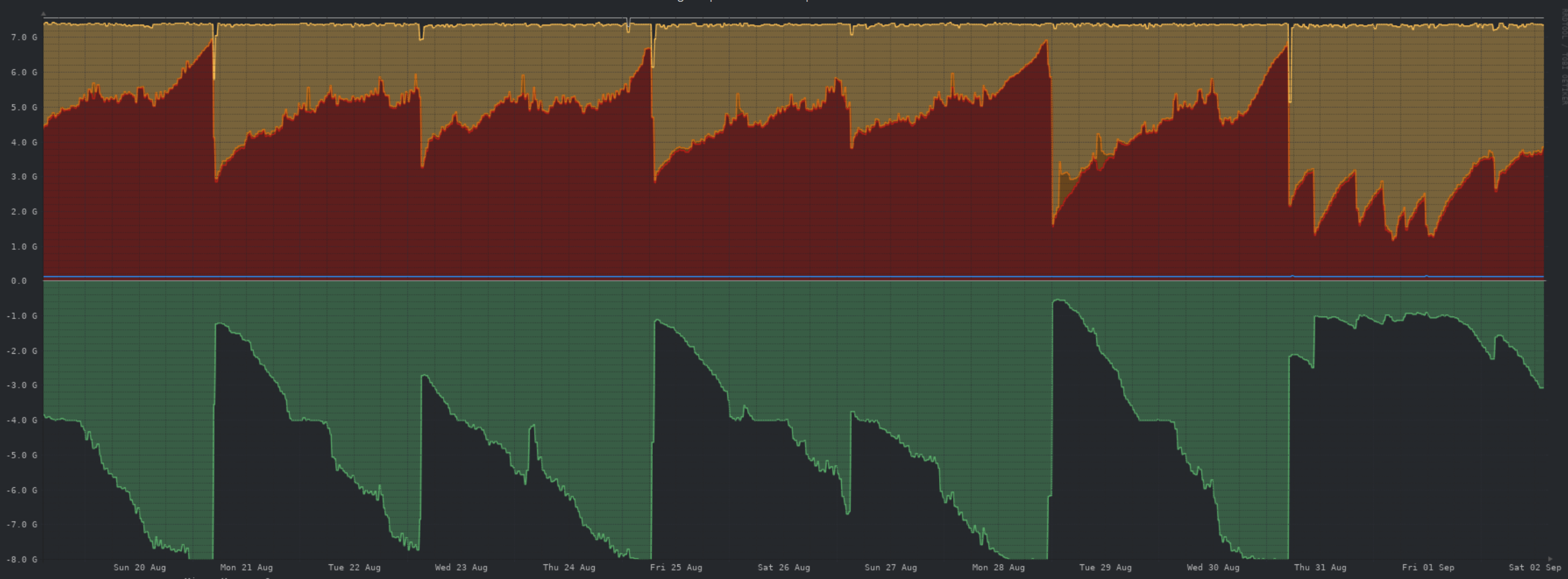

Memory:

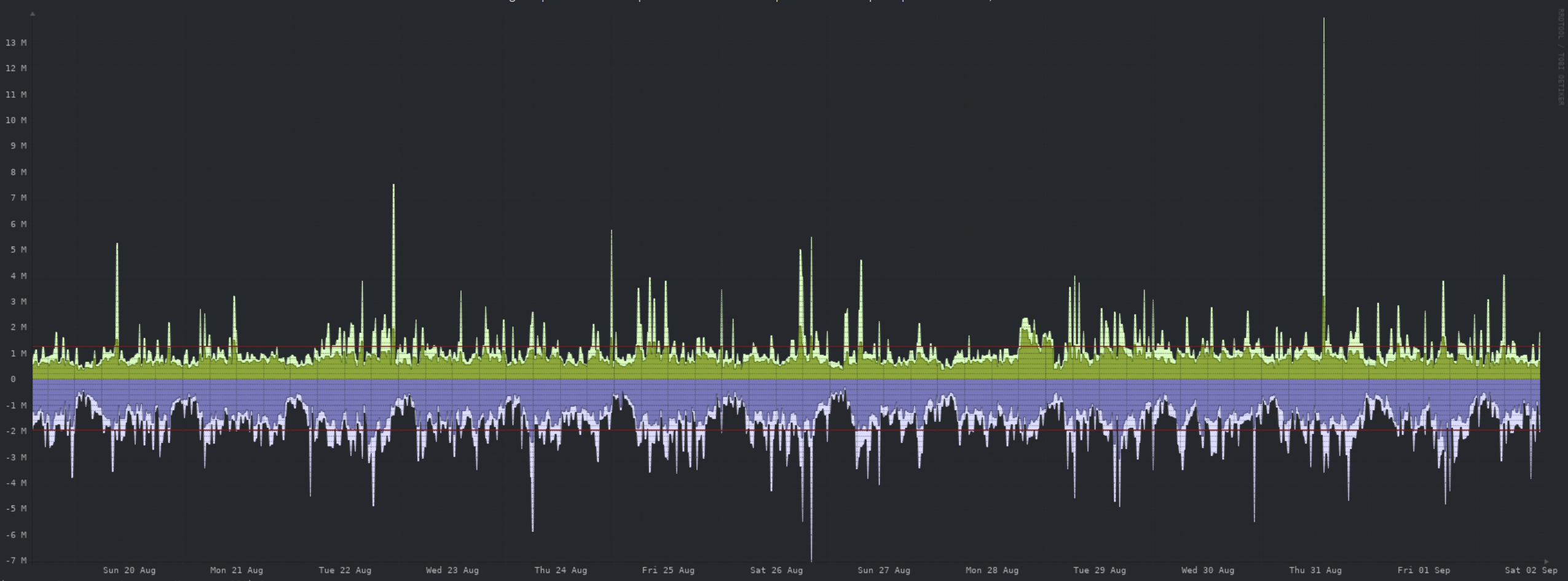

Network:

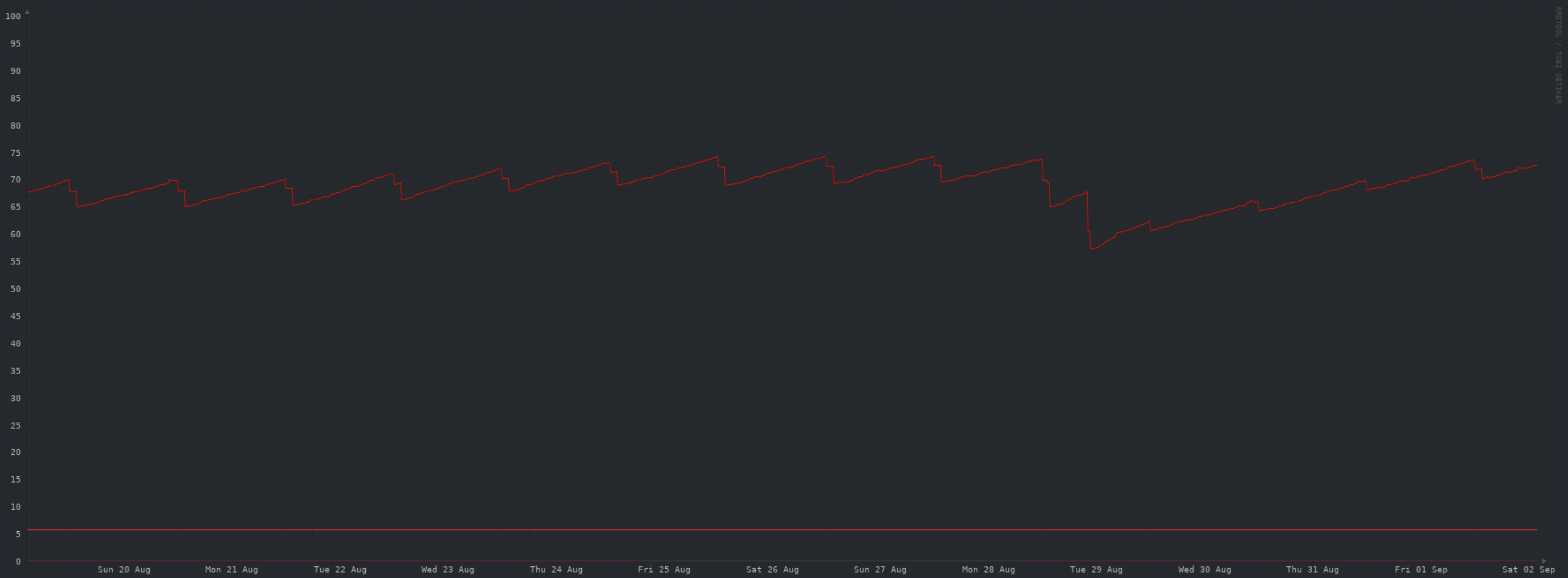

Storage:

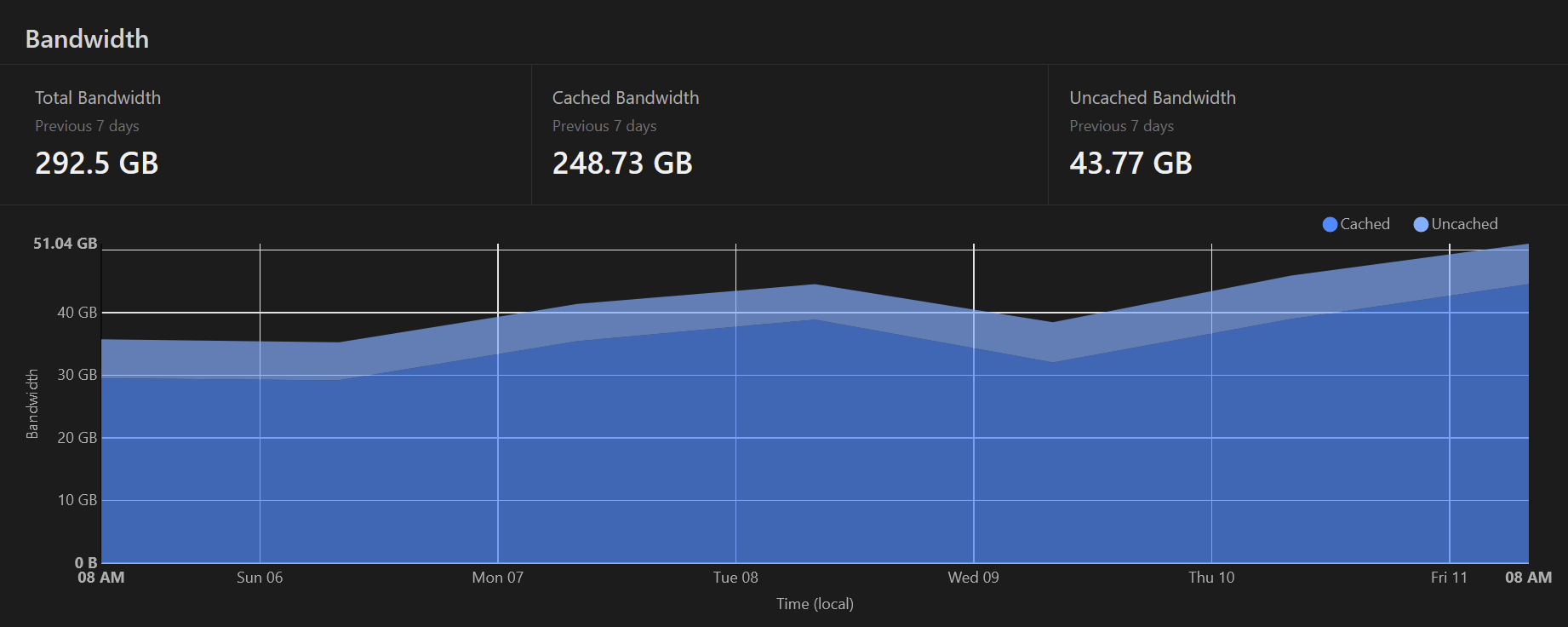

Cloudflare caching:

Summary:

Not much to call out. The storage drop was due to purging a day's worth of images and clearing the entire object storage cache. When I have time, I'll upgrade the VPS to add storage.

Broken images

Due to some disgusting behaviour, I've kicked off the process of deleting ALL images uploaded in the last day.

You will likely see broken images etc. on aussie.zone for posts/comments made during this period.

Apologies for the inconvenience, but this is pretty much the nightmare scenario for an instance. I'd rather nuke all images ever than host such content.

Nerd update 13/8/23

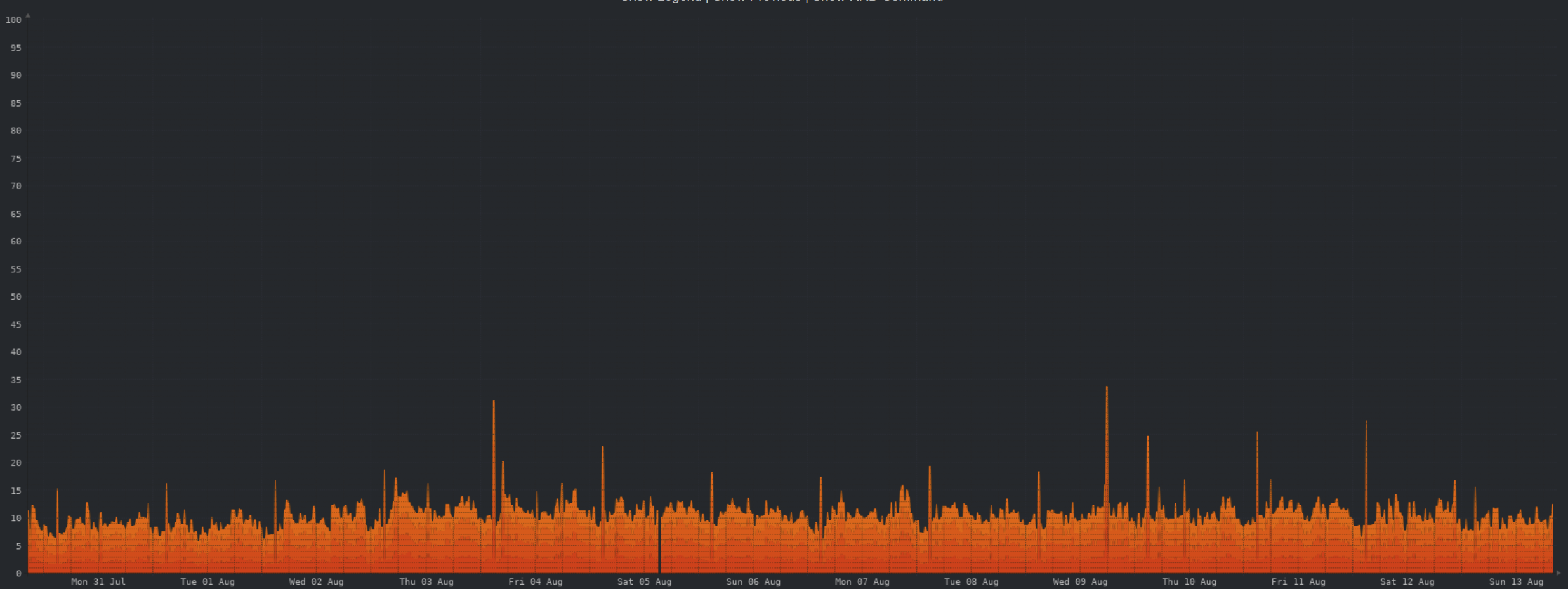

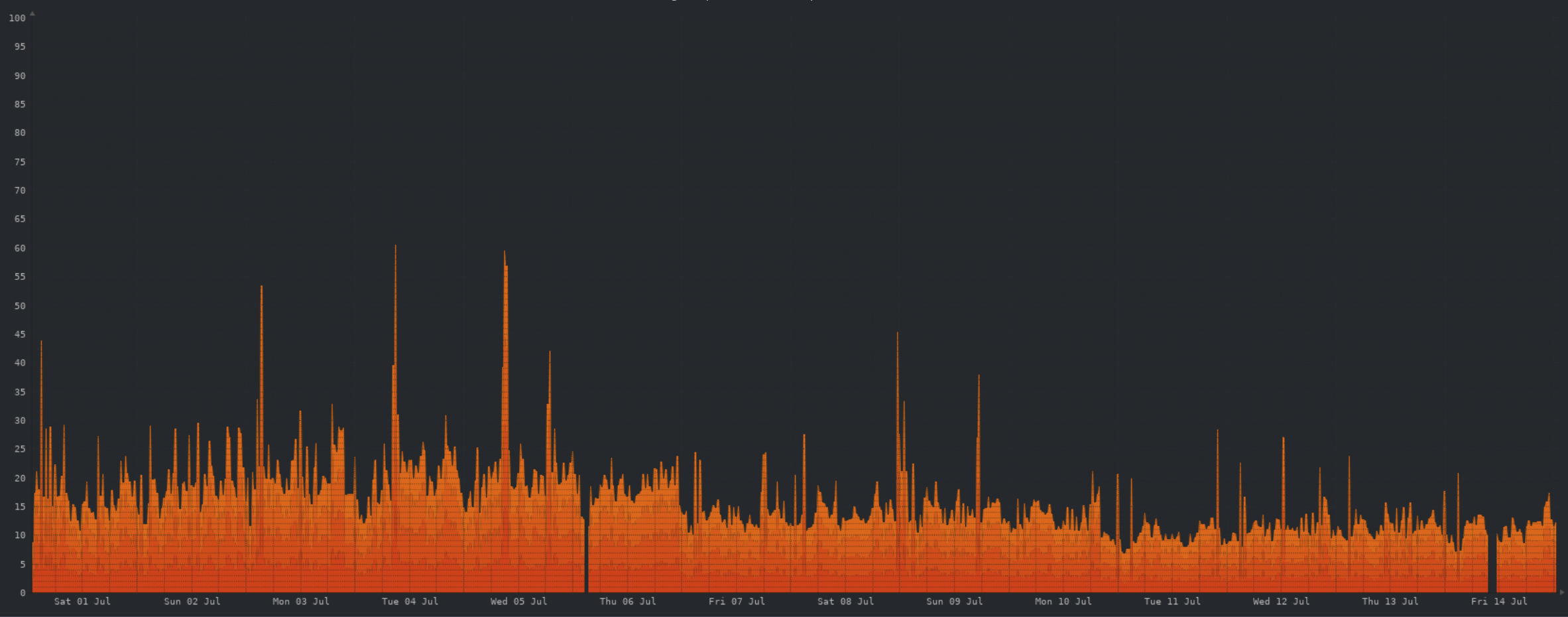

CPU:

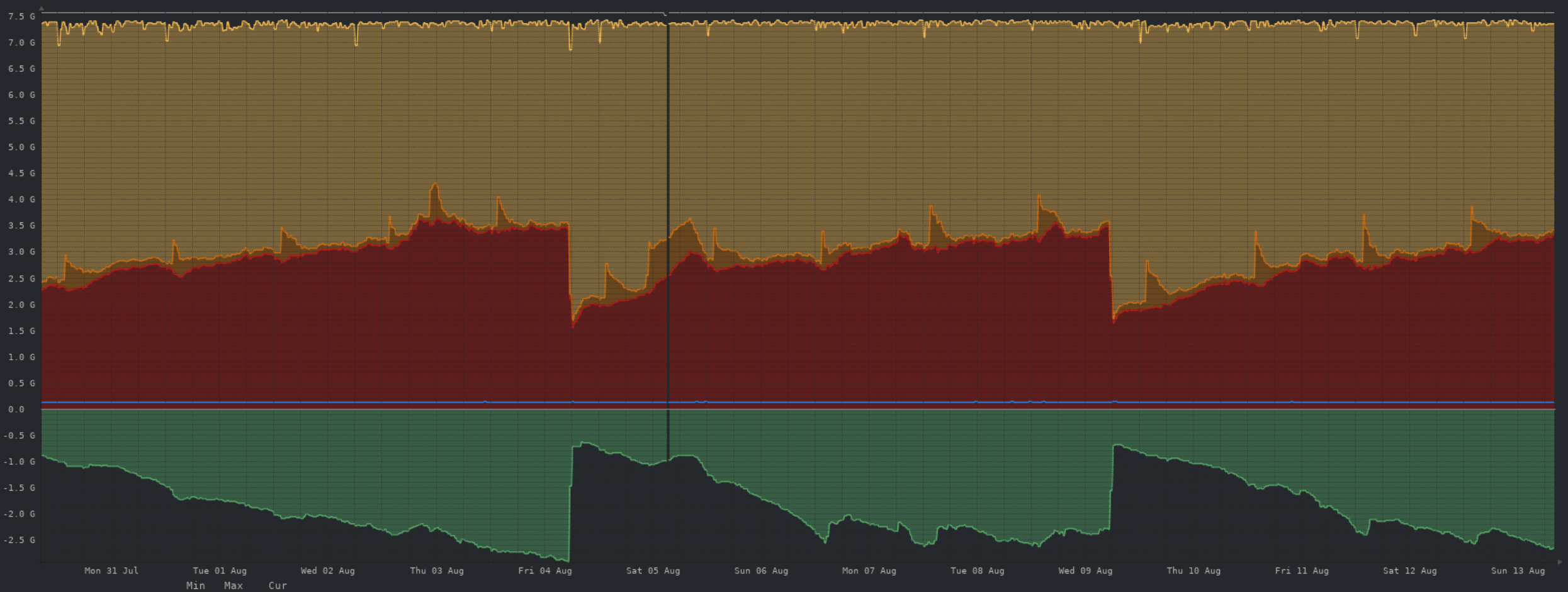

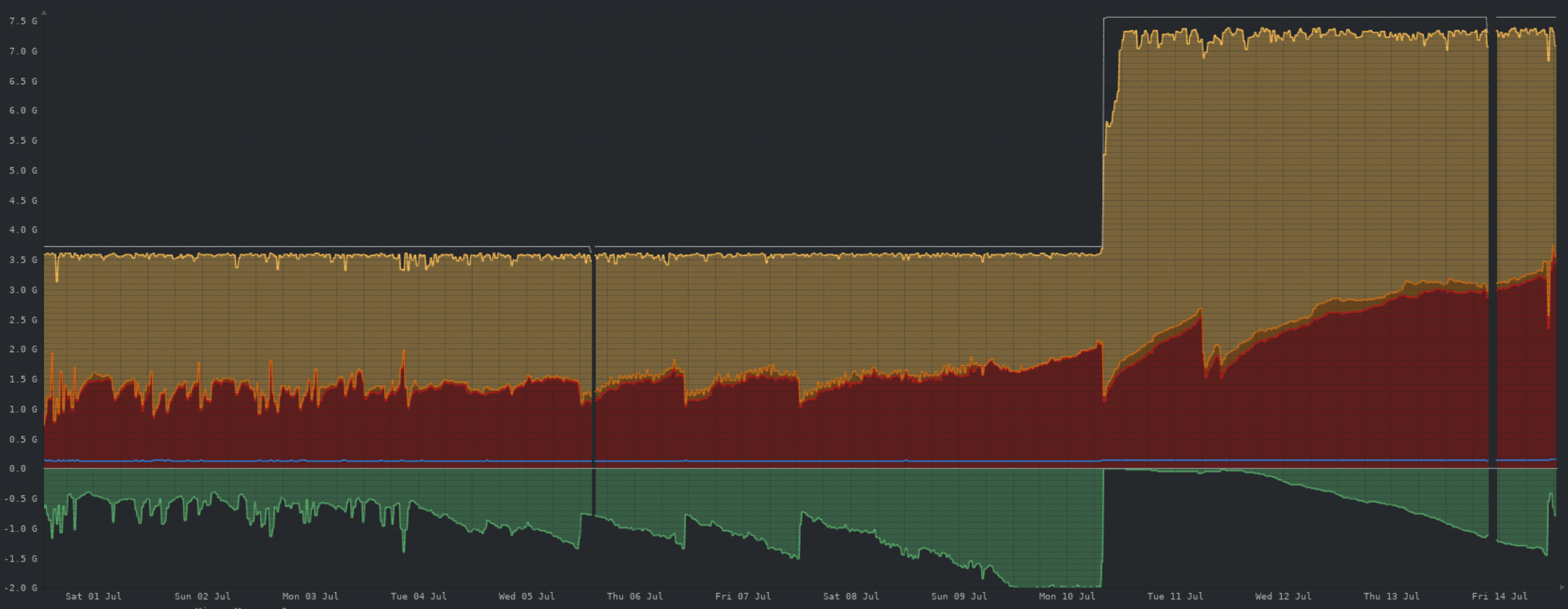

Memory:

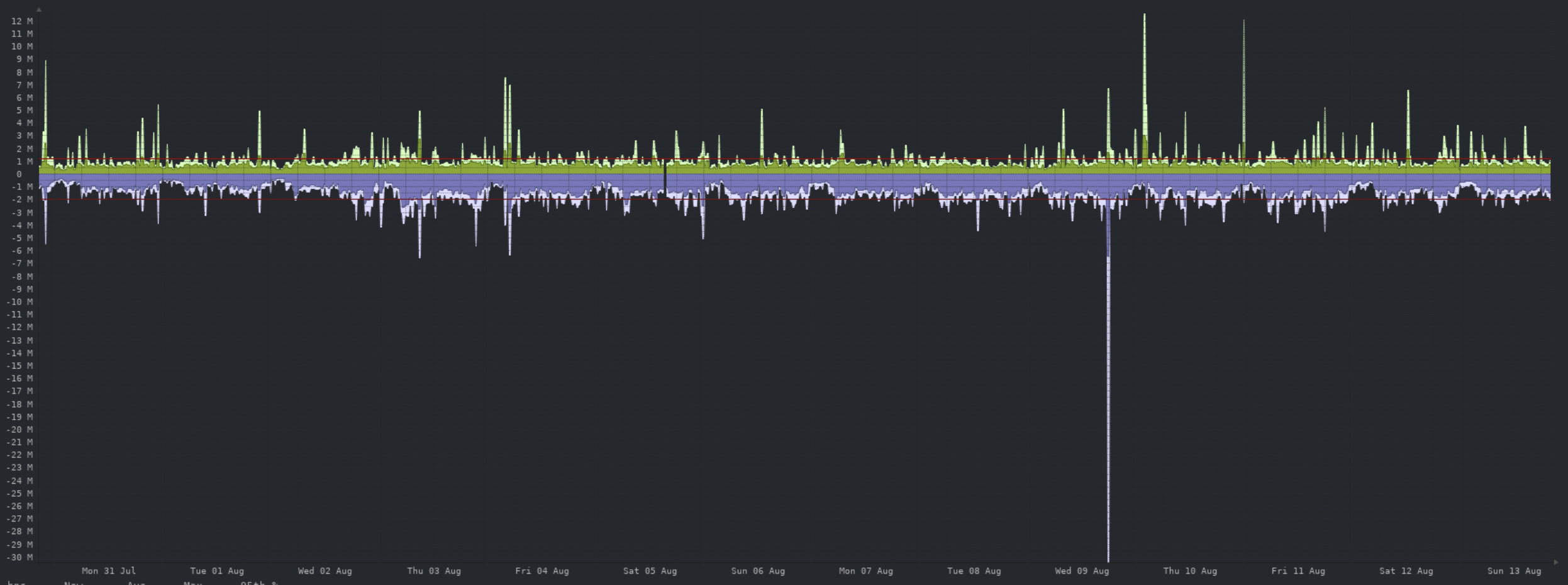

Network:

The one spike here is from a DB backup being uploaded to object storage, prior to the upgrade to 0.18.4.

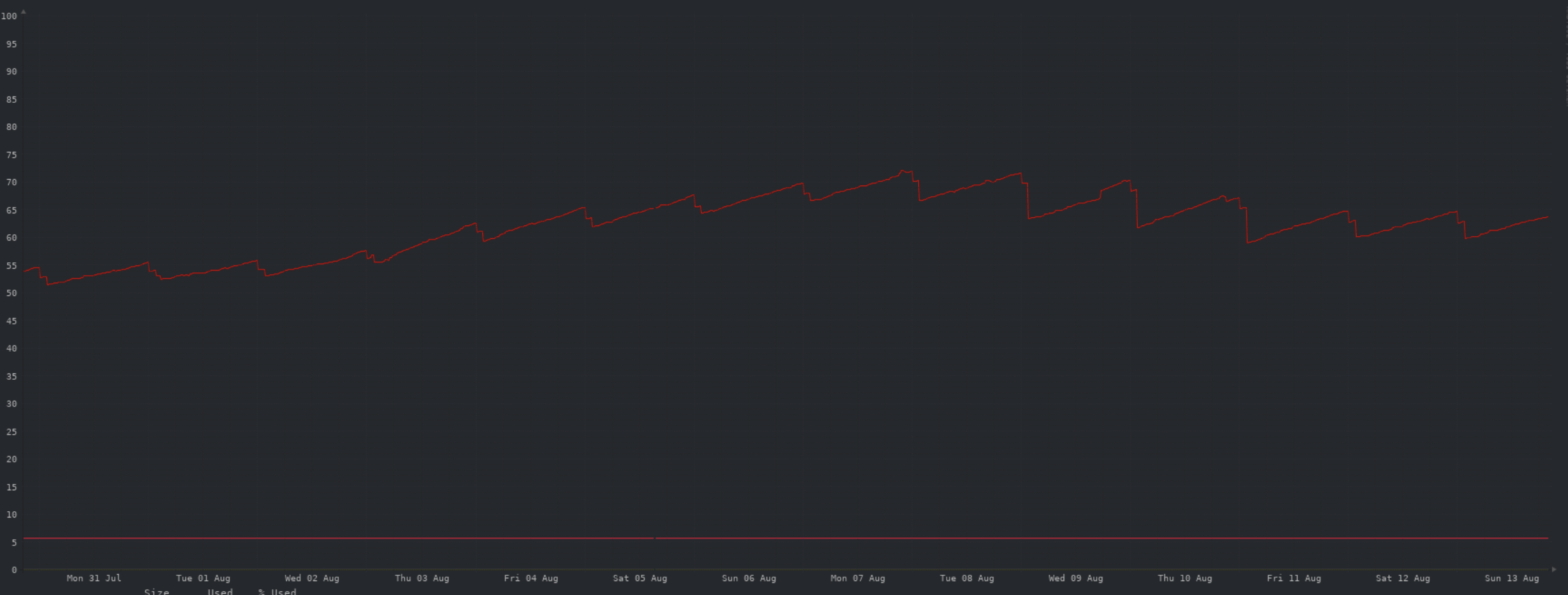

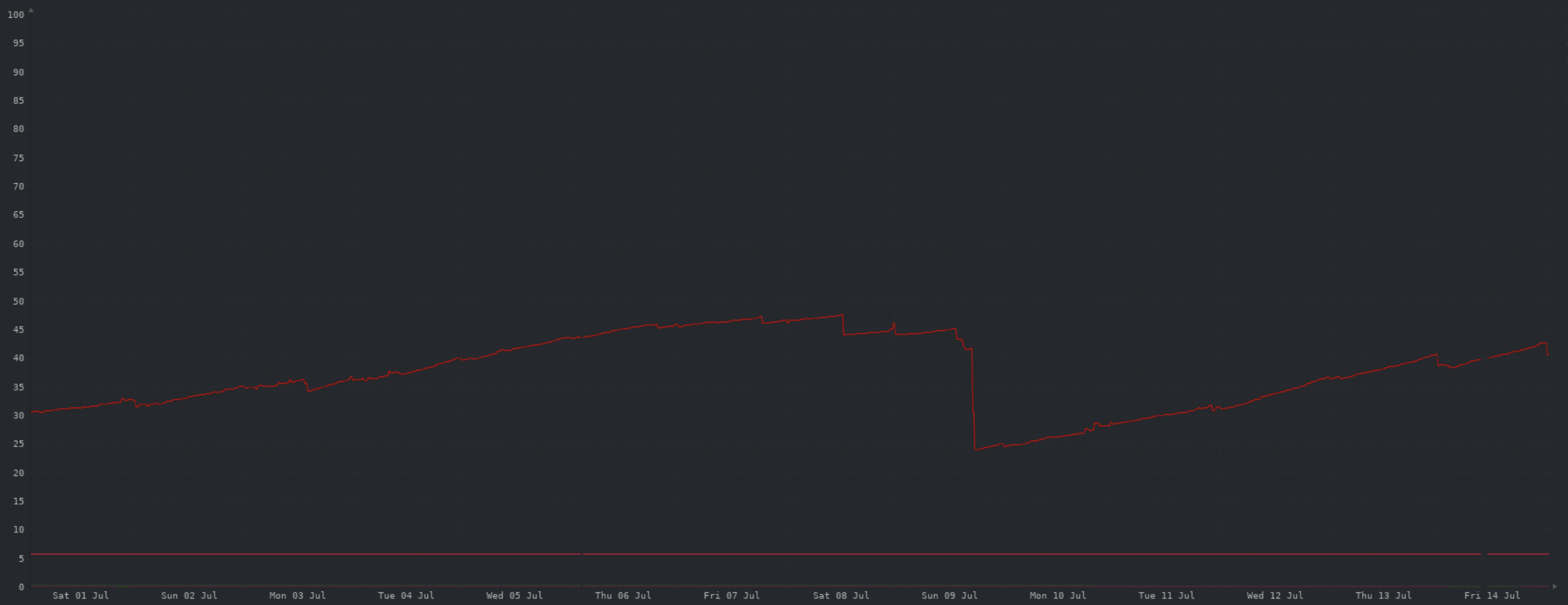

Storage:

Still ok here. You can see the daily minimum free space increase as I tweak the local cache for object storage.

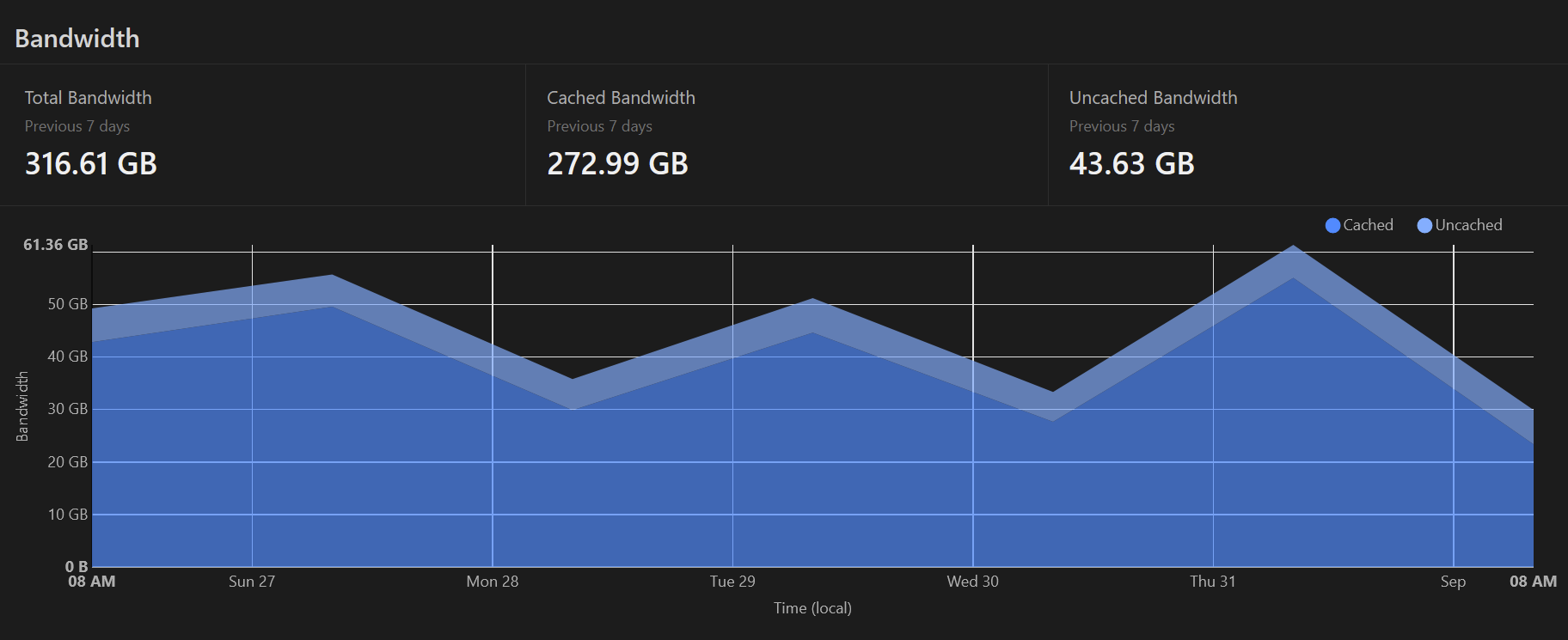

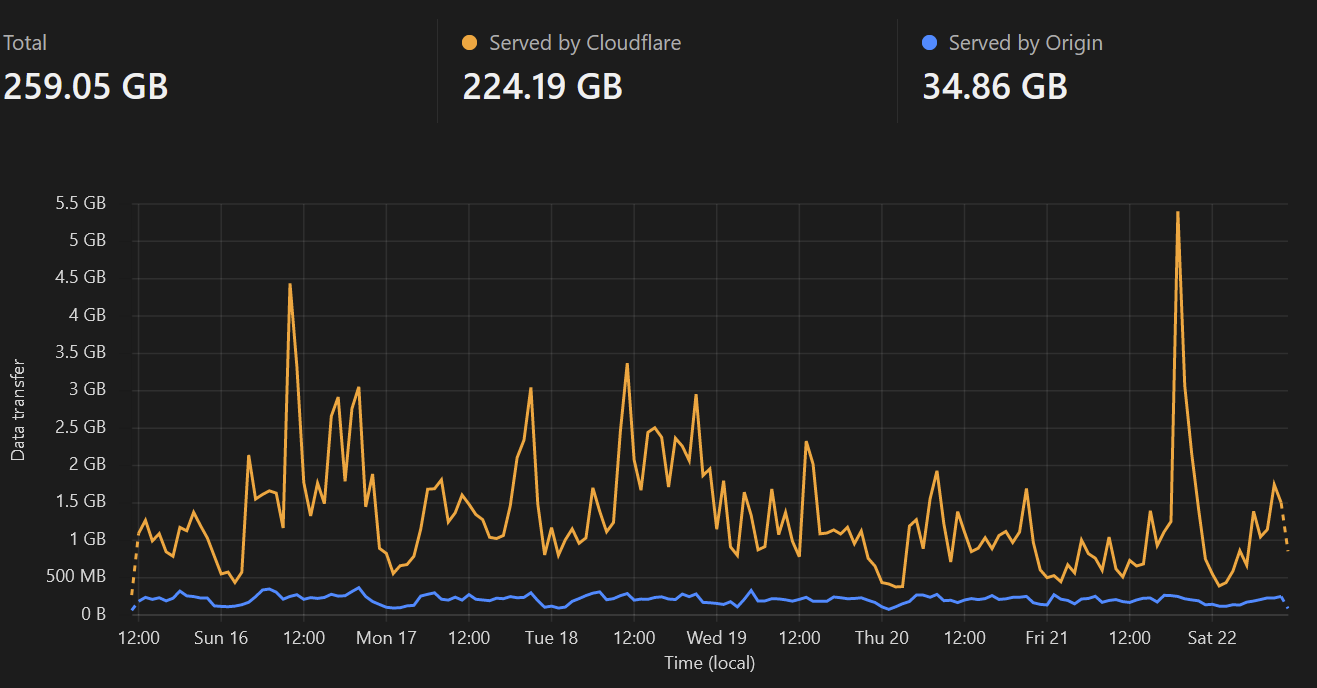

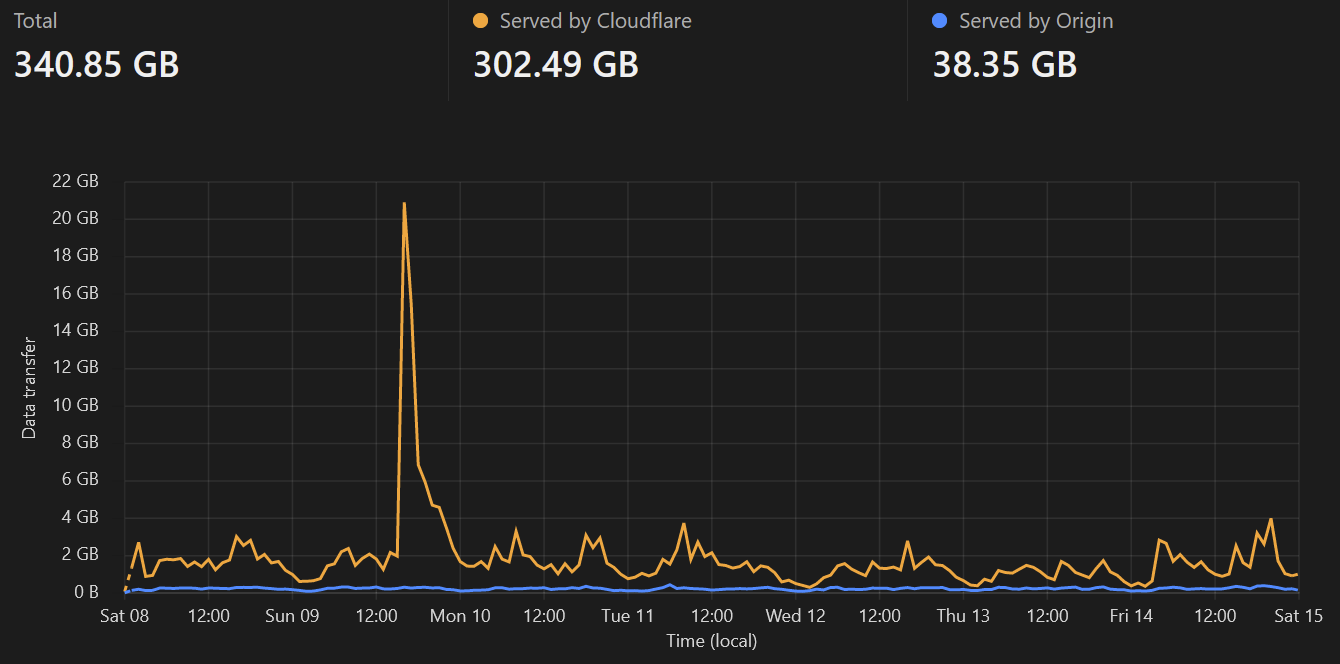

Cloudflare caching:

Summary:

Nothing of note here. Once baseline storage hits ~70% and decreasing the object storage retention period is no longer worthwhile, I'll upgrade the server for more storage.
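For the curious, the upgrade trigger above could be checked with something like the sketch below. It assumes "baseline" means the lowest disk usage seen across recent daily samples (i.e. usage with the local cache at its smallest); that reading, the function names, and the sample values are all illustrative, not what actually runs on the server.

```python
import shutil

def current_used_fraction(path="/"):
    """Return the fraction of the filesystem at `path` that is in use."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return usage.used / usage.total

def needs_upgrade(daily_used_fractions, threshold=0.70):
    """True once the baseline (lowest recent usage) reaches the threshold.

    Assumption: the baseline is the minimum of the recent daily samples.
    """
    baseline = min(daily_used_fractions)
    return baseline >= threshold

# Made-up sample values, purely for illustration.
print(needs_upgrade([0.66, 0.72, 0.69]))  # False: baseline is 0.66
print(needs_upgrade([0.71, 0.80, 0.75]))  # True: baseline is 0.71
```

Using the minimum rather than the latest reading ignores the temporary growth from cached images, which can be deleted at any time.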

Financials - July 2023

Sorry this is a little late, I've been busy with real life this week.

I'm stating full dollar figures for simplicity, but they're all rounded/approximate to the dollar. I'm not an accountant and it's close enough for our purposes.

Income

$267 AUD thanks to 25 generous donors, it is very much appreciated.

Expenses

$34 OVH server fees. Lower than normal, as OVH seems to switch services from anniversary-based to calendar-based billing from the second month. The July billing period covered 8 July - 31 July; August will be 1-31.

$40 Cloudflare. Due to huge volumes of egress traffic from Cloudflare in early July, I opted to pay for a month of premium in order to investigate. The cause was found and remediated. I've since dropped back to the free plan.

Balance

$193 surplus from July (Income - Expenses)

$293 carried forward from June

= $486 current balance
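For anyone who wants to check the sums, here is the balance worked through as a small sketch. The figures are the rounded ones stated above; the function and variable names are just for illustration.

```python
def monthly_balance(income, expenses, carried_forward):
    """Return (surplus, new_balance) for the month, in whole AUD."""
    surplus = income - sum(expenses.values())
    return surplus, carried_forward + surplus

# July 2023 figures from this post, rounded to the dollar.
income = 267
expenses = {"OVH server": 34, "Cloudflare": 40}
carried_forward = 293  # from June

surplus, balance = monthly_balance(income, expenses, carried_forward)
print(surplus, balance)  # 193 486
```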

Future

Wasabi's trial period expired on the 8th of July, so the first bill is due this month.

Domain registration, I'll be looking to extend by another year and keep it so that we always have at least 12 months paid up.

Server storage, I expect we'll need to upgrade for additional storage this month. With this upgrade I'll consider pre-paying/committing to the server for a longer period of time. This provides both a discount, and certainty to everyone that the money is put to good use.

THANK YOU

..again, to our generous donors. The offer of an @aussie.zone email address redirect is still open to any donors.

As always, if you have any questions please ask.

Nerd update 5/8/23

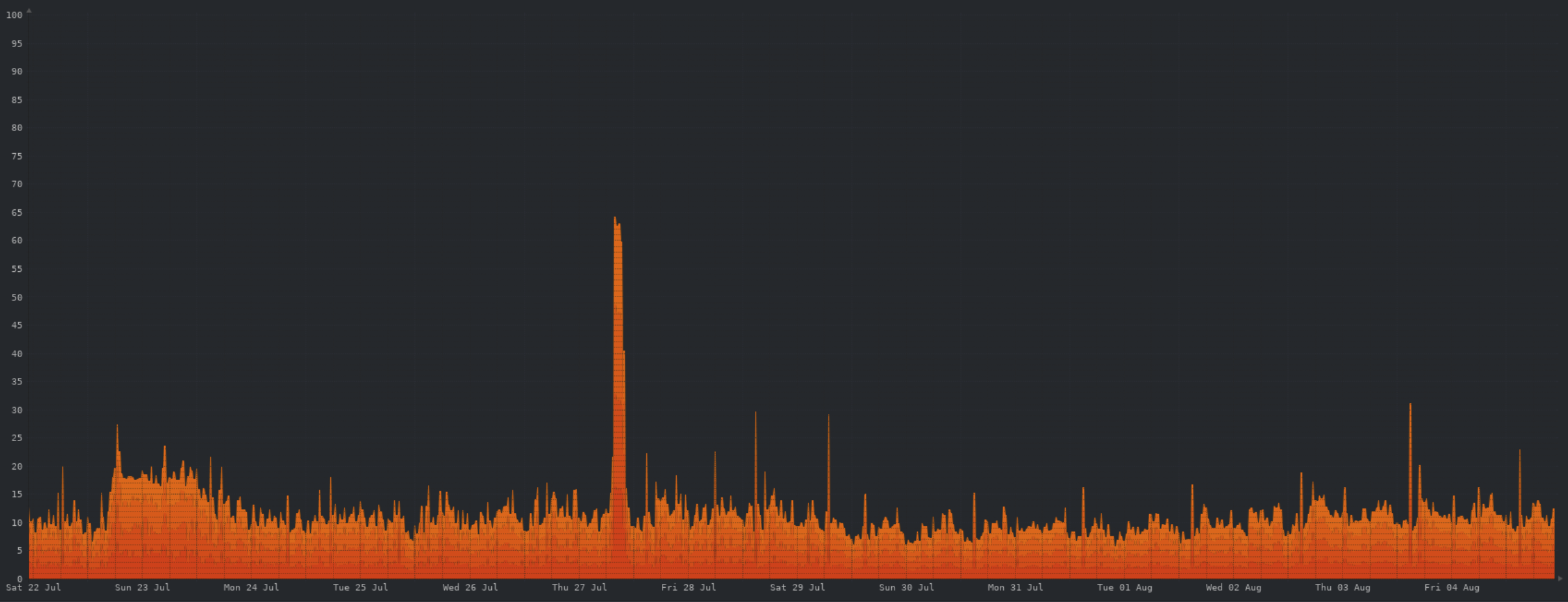

CPU:

Memory:

Network:

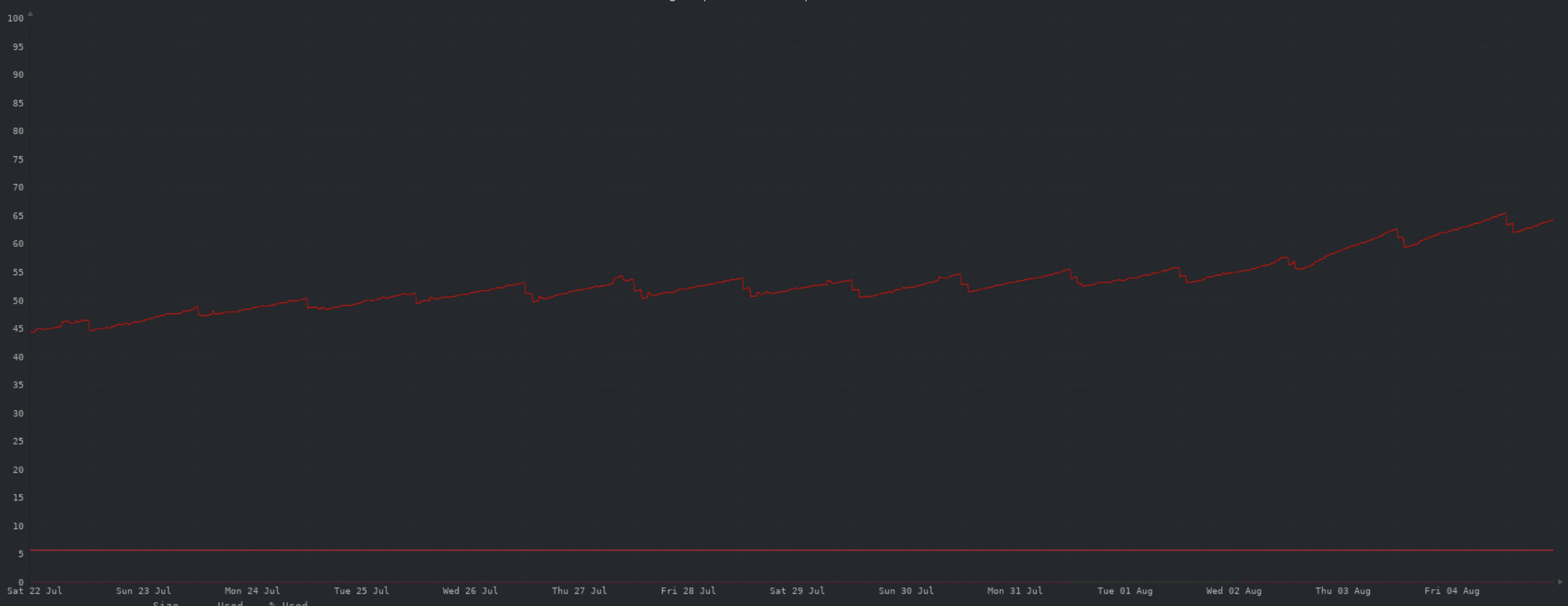

Storage:

Cloudflare caching:

Summary:

My only callout this week is an uptick in storage consumption, which seems to align with an increase in new user signups and generally higher activity. I'm guessing this is due to the release of Sync for Lemmy.

Nerd update 29/7/23

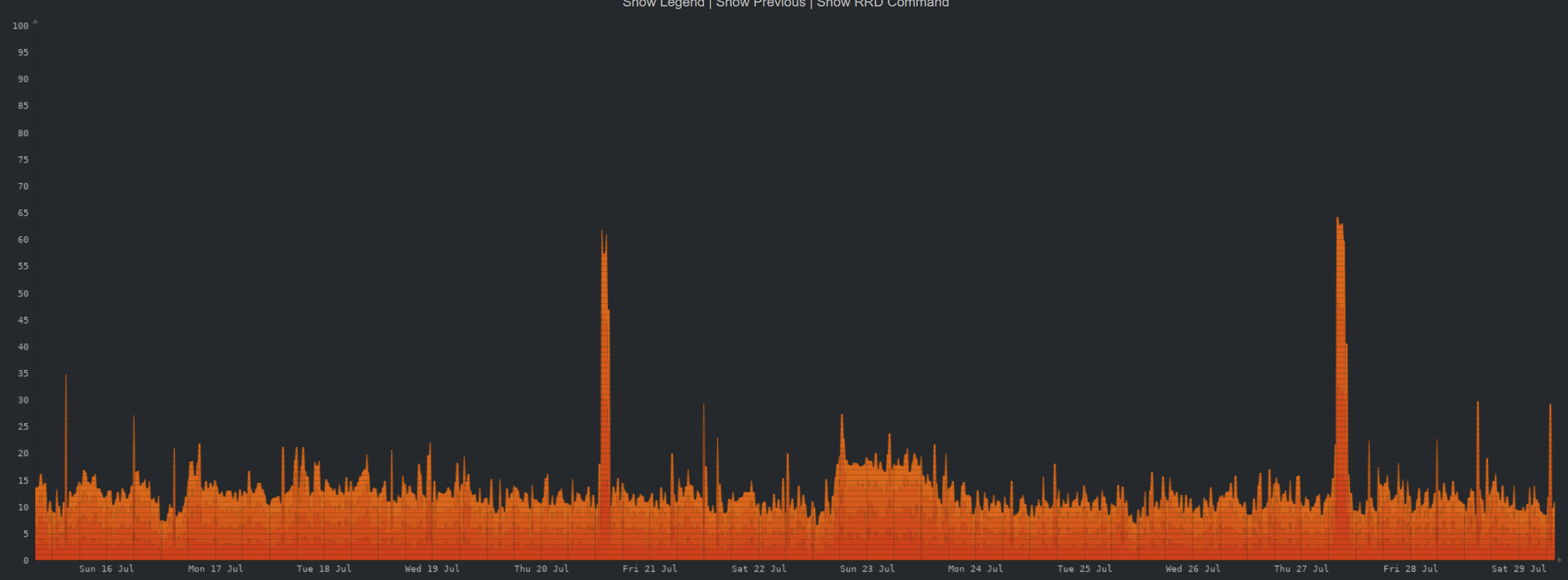

CPU:

Memory:

Network:

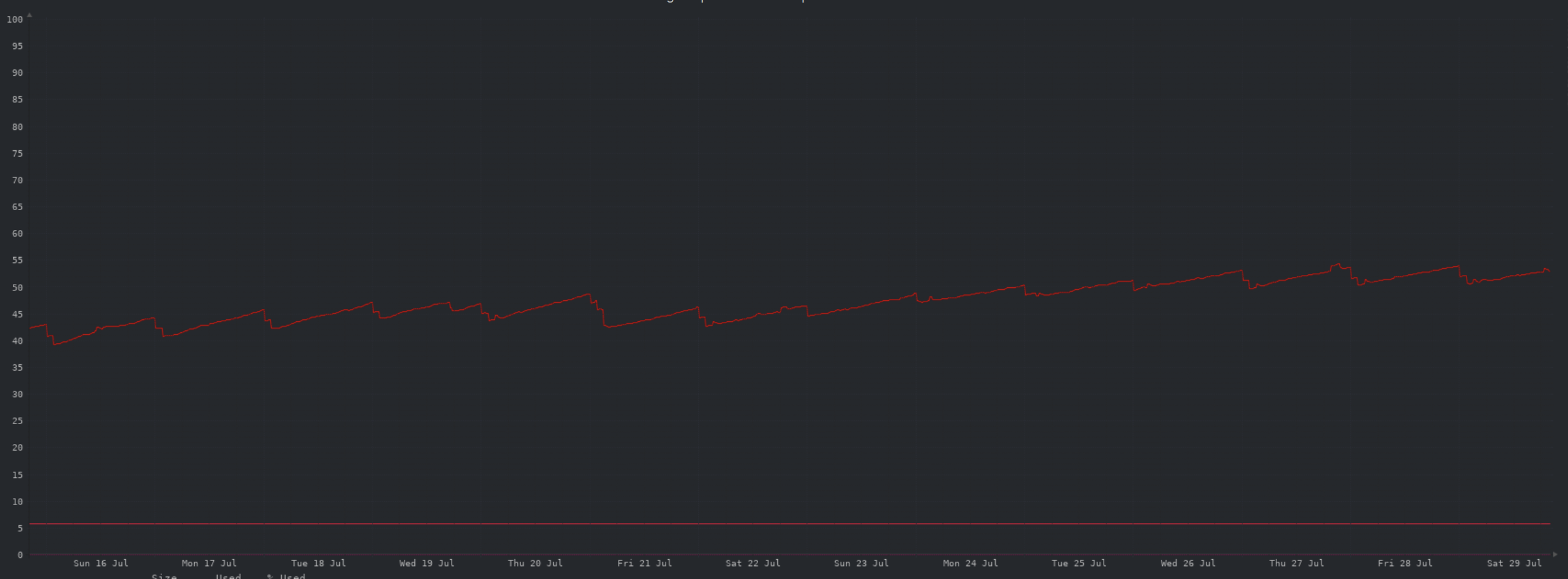

Storage:

Cloudflare caching:

Summary:

All resource usage looking stable. Storage is the only one that is trending up, as expected.

Nerd update 22/7/23

Another week... another bunch of nerd graphs!

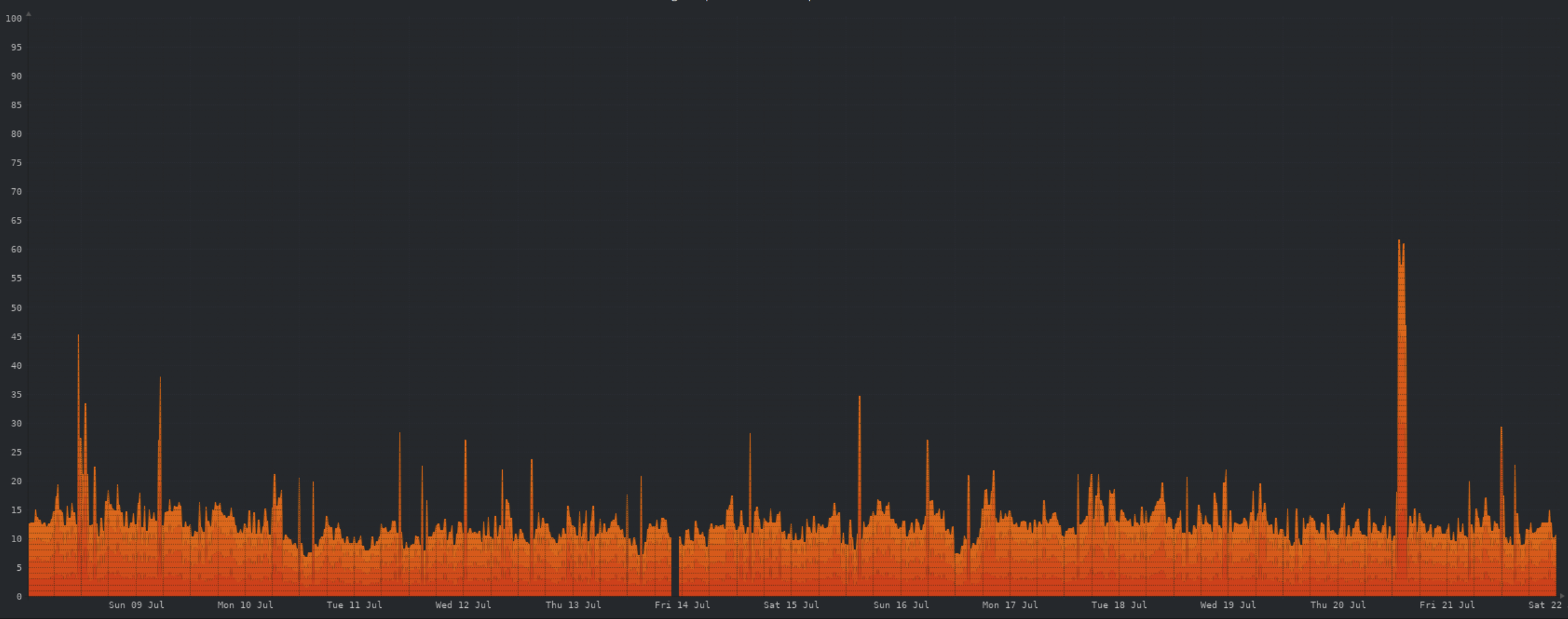

CPU:

Not much to say here, pretty stable CPU usage wise.

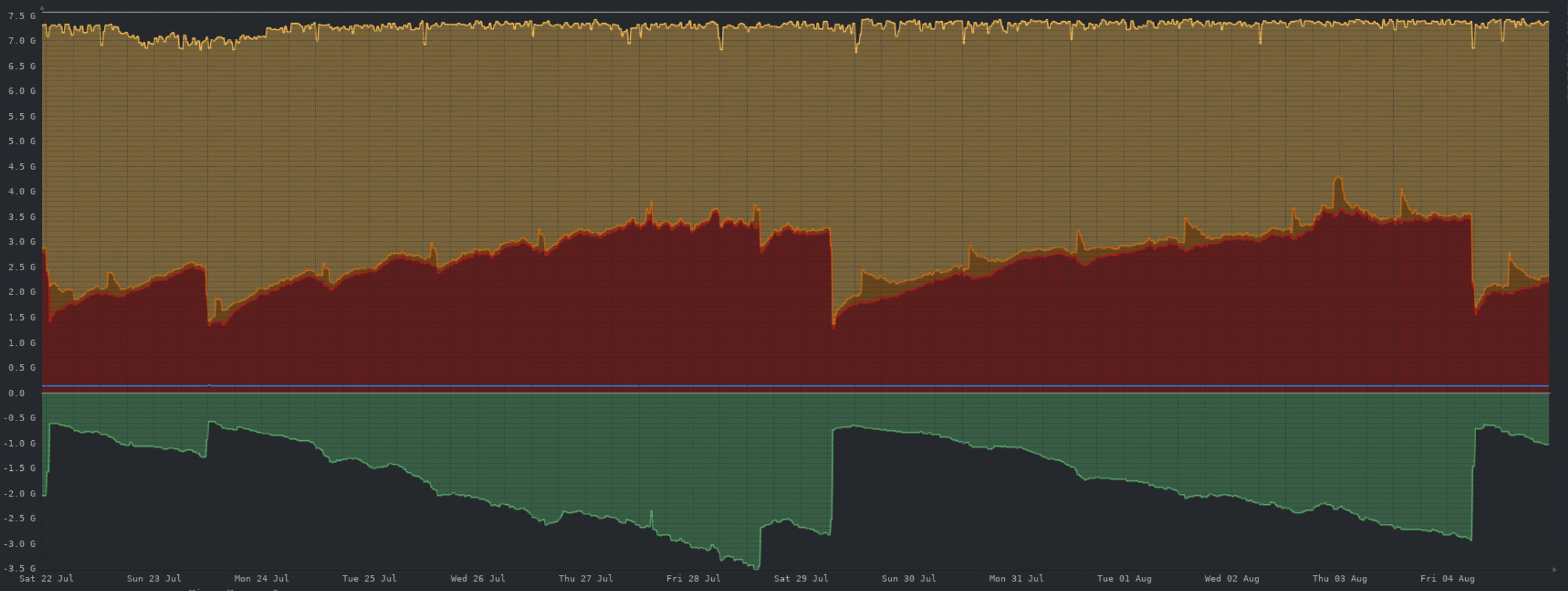

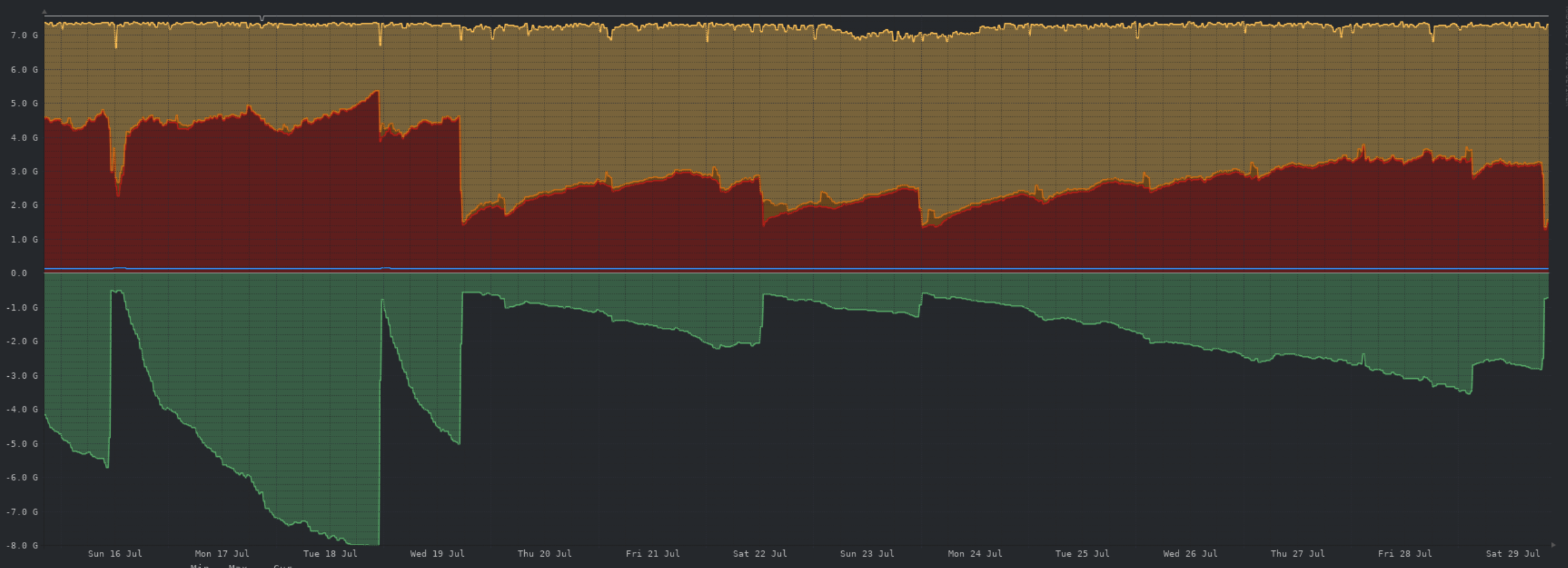

Memory:

The unusual memory growth appears to have been related to a minor Postgres configuration change I made last week, which was reverted on Thursday. Memory usage looking much more normal since.

Network:

As with CPU usage, network traffic is looking stable.

Storage:

Storage growth has normalized, now that we've hit an equilibrium point. Though I'll be tweaking the object storage cache retention to minimise object storage pulls.

Cloudflare caching:

Still saving us a large volume of egress traffic. Will save even more if particular content goes viral.

Summary:

Resource utilisation on the server is looking great across the board. No skyrocketing usage as we saw initially. Storage is still looking like the first trigger for another server upgrade, but as it is now a gradual increase we'll have plenty of forewarning, and it's looking like this will be some time away.

Questions? 🤓

Nerd update 15/7/23

Doh! Forgot earlier in the night, so here you are... technically Saturday.

CPU:

The Lemmy devs have made some major strides in improving performance recently, as you can see from the overall reduced CPU load.

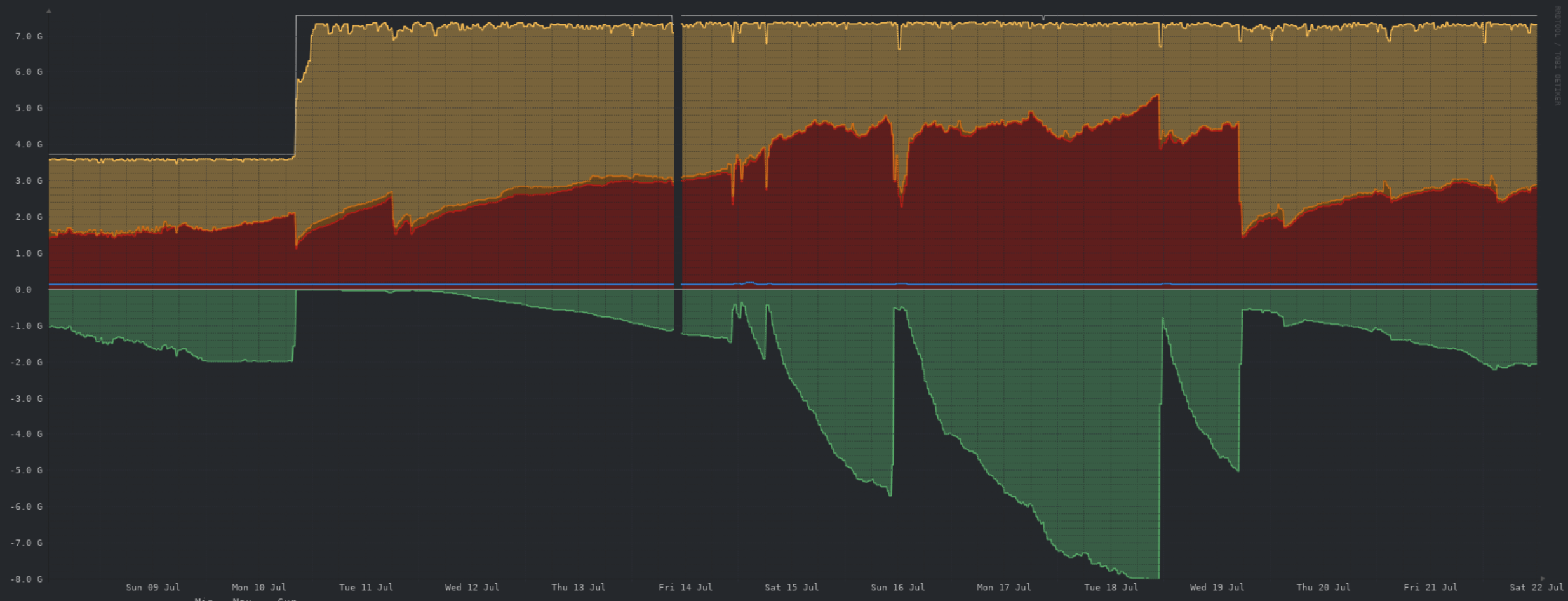

Memory:

I need to figure out why swap is continuing to be used, when there is cache/buffer available to be used. But as you can see, the upgrade to 8GB of RAM is being put to good use.

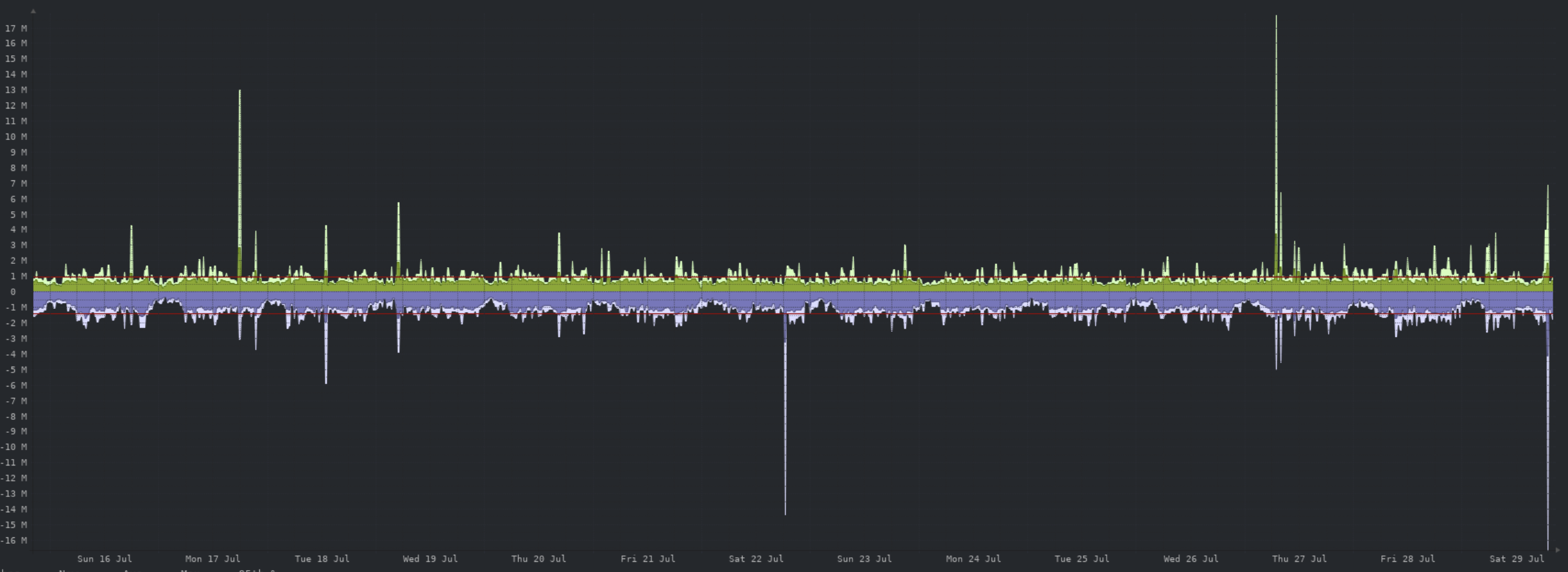

Network:

The two large spikes here are from some backups being uploaded to object storage. Apart from that, traffic levels are fine.

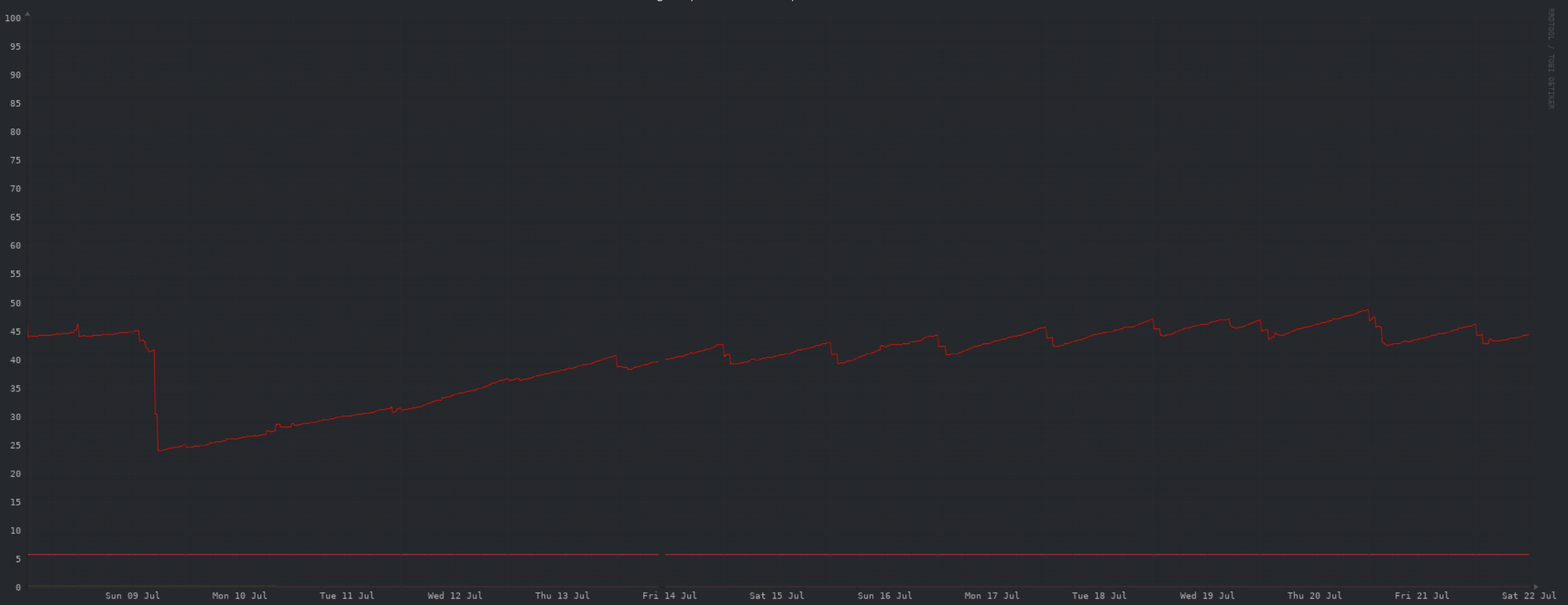

Storage:

A HUGE win here this week: it turns out a large portion of the database is data we don't need, which can be safely deleted pretty much any time. The large drop in storage on the 9th was from me manually deleting all but the most recent ~100k rows in the guilty table. The devs are aware of this issue and are actively working on making DB storage more efficient. While a better fix is being worked on, I have a cronjob running every hour to delete all but the most recent 200k rows.
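To give an idea of what that hourly cleanup does, here is a minimal sketch of the "keep only the newest N rows" technique. It is not the actual cronjob: the table name `activity_log` and its columns are hypothetical (the real table isn't named here), and it uses an in-memory SQLite database so it runs on its own, whereas the instance itself runs Postgres.

```python
import sqlite3

def prune_to_most_recent(conn, keep):
    """Delete all but the `keep` newest rows (highest id) and return
    how many rows were removed. `activity_log` is a hypothetical table."""
    cur = conn.execute(
        "DELETE FROM activity_log WHERE id NOT IN "
        "(SELECT id FROM activity_log ORDER BY id DESC LIMIT ?)",
        (keep,),
    )
    conn.commit()
    return cur.rowcount

# Small demo: 10 rows, keep the newest 3.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE activity_log (id INTEGER PRIMARY KEY, payload TEXT)")
conn.executemany(
    "INSERT INTO activity_log (payload) VALUES (?)",
    [(f"row {i}",) for i in range(10)],
)
deleted = prune_to_most_recent(conn, keep=3)
remaining = [row[0] for row in conn.execute("SELECT id FROM activity_log ORDER BY id")]
print(deleted, remaining)  # 7 [8, 9, 10]
```

On the server, `keep` would be 200k and the script would be scheduled hourly. Ordering by an auto-incrementing id is an assumption; a timestamp column would work the same way.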

Cloudflare caching:

Cloudflare still saving us substantial egress traffic from the VPS, though no 14MB "icons" being grabbed thousands of times this week 😀

Summary:

All things considered, we're in a much better place today than a week ago. Storage is much less of a concern, and all other server resources are doing well... though I need to investigate swap usage.

Longer term, it still looks as though storage will become the trigger for further upgrades. However, storage growth will be much slower and under our control. The recent upward trend is predominantly from locally cached images from object storage, which can be deleted at any time as required.

As usual, feel free to ask questions.

Avatars?

Is it possible for Voyager to display user avatars in the same way as the regular web page? It's how I recognize regulars in my communities, more so than by name.